
Deploying the ASUSTOR DRIVESTOR 4 Pro Gen 2 | AS3304T v2

For years, my trusty DRIVESTOR 2 Pro was the heartbeat of our home. From kids navigating online school to adults working from home, it served as our essential, daily hub for family backups. Unfortunately, a sudden power event had other plans for it.

Luckily, I had my data backed up elsewhere. In this post, I’ll walk you through how these two models compare, share some photos of the new hardware, and show you exactly how I got my new setup up and running.

Device Comparison

| Specification | ASUSTOR AS3302T (Gen 1) | ASUSTOR AS3304T v2 (Gen 2) |
|---|---|---|
| Drive Bays | 2 x 3.5″/2.5″ SATA | 4 x 3.5″/2.5″ SATA |
| Processor | Realtek RTD1296 (1.4GHz Quad-Core) | Realtek RTD1619B (1.7GHz Quad-Core) |
| Memory (RAM) | 2GB DDR4 (Non-expandable) | 2GB DDR4 (Non-expandable) |
| Networking | 1 x 2.5-Gigabit Ethernet | 1 x 2.5-Gigabit Ethernet |
| USB Ports | 3 x USB 3.2 Gen 1 | 3 x USB 3.2 Gen 1 |
| Btrfs Support | No (EXT4 only) | Yes (Snapshots supported) |
| RAID Options | Single, JBOD, RAID 0, 1 | Single, JBOD, RAID 0, 1, 5, 6, 10 |
| Fan Size | 1 x 70mm | 1 x 120mm |
| Standby/Idle Noise | 19 dB | 19.7 dB |
| Active Operation Noise | 32 dB | 32 dB |
| Power Consumption (Operation) | 12.3W | 25.1W |
| Dimensions | 170(H) x 114(W) x 230(D) mm | 170(H) x 174(W) x 230(D) mm |

Key Improvements in the AS3304T v2

- Processor & GPU: The v2 uses the newer RTD1619B chip. It is roughly 20% faster in clock speed and features an upgraded iGPU, which provides significantly better 4K transcoding for media players like Plex.

- Modern File System (Btrfs): A major software upgrade for the v2 models is the addition of Btrfs support. This enables Snapshots, which protect your data against accidental deletion or ransomware by allowing you to “roll back” to a previous state.

- Storage Flexibility: Beyond having twice the bays, the AS3304T v2 supports RAID 5 and 6. These configurations offer a better balance of high capacity and data redundancy compared to the RAID 1 limit of the 2-bay AS3302T.
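To make the capacity versus redundancy trade-off concrete, here is a minimal Python sketch that uses the usual textbook formulas for equal-sized drives. The function name `usable_tb` and the 4 TB drive size are illustrative assumptions, not ASUSTOR figures, and real usable space will be somewhat lower once file-system overhead is counted.

```python
def usable_tb(level: str, drives: int, size_tb: float) -> float:
    """Approximate usable capacity (TB) for equal-sized drives."""
    level = level.lower()
    # Minimum number of drives each layout needs.
    minimum = {"jbod": 1, "raid0": 2, "raid1": 2, "raid5": 3, "raid6": 4, "raid10": 4}
    if level not in minimum:
        raise ValueError(f"unknown RAID level: {level}")
    if drives < minimum[level]:
        raise ValueError(f"{level} needs at least {minimum[level]} drives")
    if level == "raid10" and drives % 2:
        raise ValueError("raid10 needs an even number of drives")
    # Number of drives' worth of space left for data in each layout.
    data_drives = {
        "jbod": drives,         # no redundancy
        "raid0": drives,        # striping, no redundancy
        "raid1": 1,             # every drive holds the same copy
        "raid5": drives - 1,    # one drive's worth of parity
        "raid6": drives - 2,    # two drives' worth of parity
        "raid10": drives // 2,  # striped mirrors
    }
    return data_drives[level] * size_tb


if __name__ == "__main__":
    # Hypothetical example: four 4 TB drives in the 4-bay unit.
    for level in ("raid0", "raid1", "raid5", "raid6", "raid10"):
        print(f"{level:>6}: {usable_tb(level, 4, 4)} TB usable")
```

With four drives, RAID 5 keeps three drives' worth of space while still surviving a single drive failure, which is the balance described above.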

Product Photo

Initial Setup

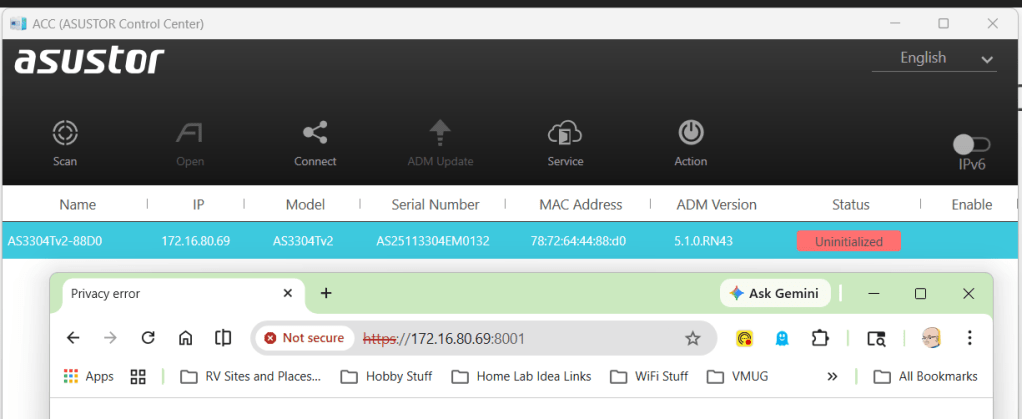

I plugged the power and network cables into the device and allowed it to get a DHCP address. To find the assigned address, I downloaded the ASUSTOR Control Center (acc.asustor.com) and scanned my network with it. Once I knew the IP address, I browsed to it on port 8001.
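If you would rather confirm from a script that the NAS is answering on its web port, a plain TCP check is enough. This is a minimal Python sketch; the helper name `port_open` and the address in the usage comment are placeholders, so substitute the IP address that Control Center reported.

```python
import socket


def port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


# Example usage (placeholder address, replace with your NAS IP):
#   port_open("192.168.1.50", 8001)
```

A True result only shows that something is listening on that port; the browser is still the way to confirm it is the ADM setup page.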

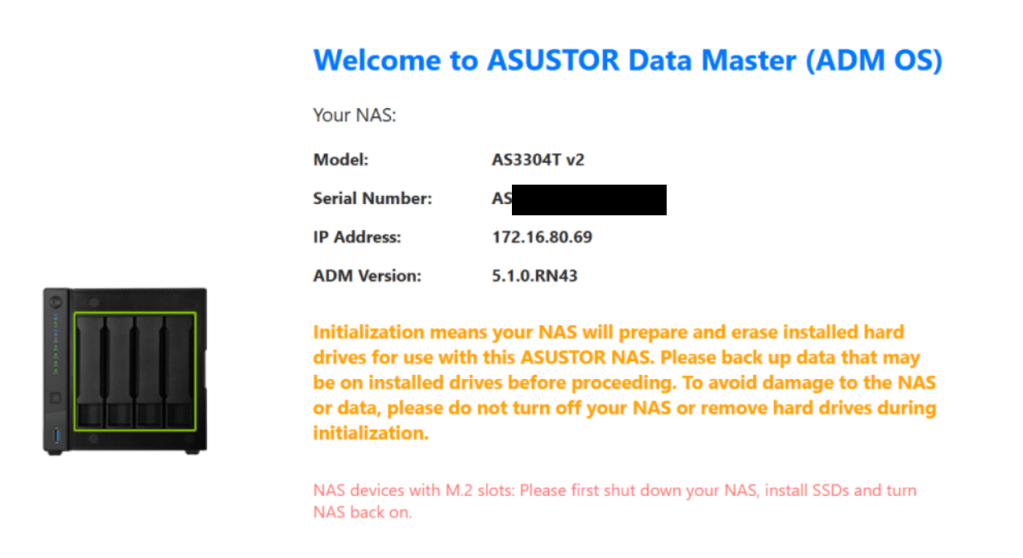

When I first entered the web interface, I was welcomed by the main screen. One important note is the orange initialization statement: it warns that your drives will be erased and reminds you to back up your data.

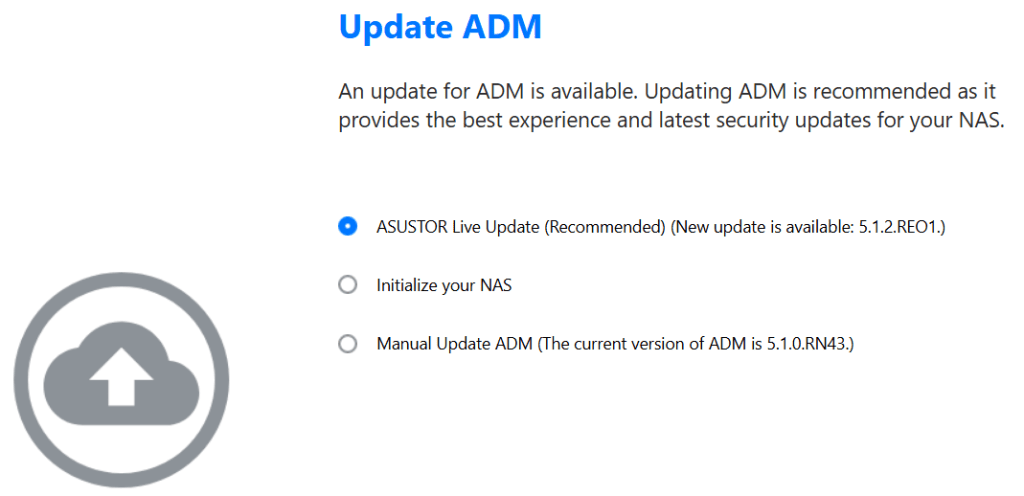

One thing I like about the initial setup is the ability to update the ADM (ASUSTOR Data Master) version. I allowed it to update as I was doing my install.

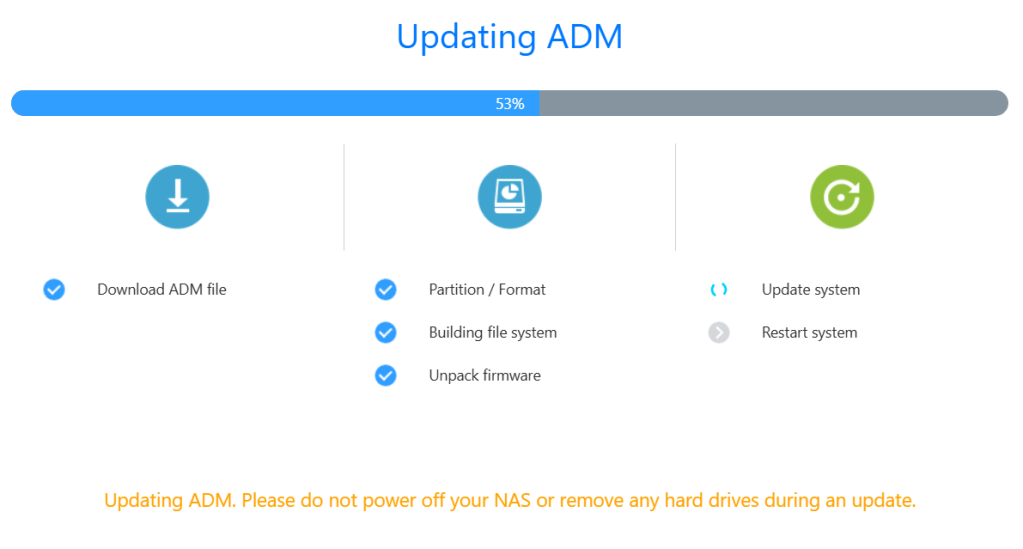

After I made all my selections, ADM completed its pre-tasks.

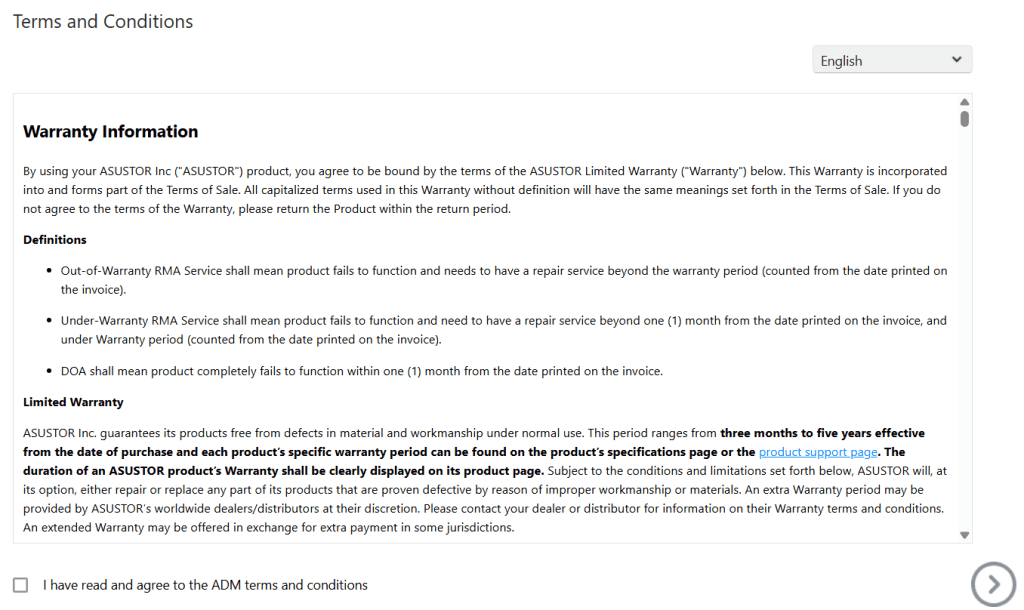

Next, the T&Cs are presented.

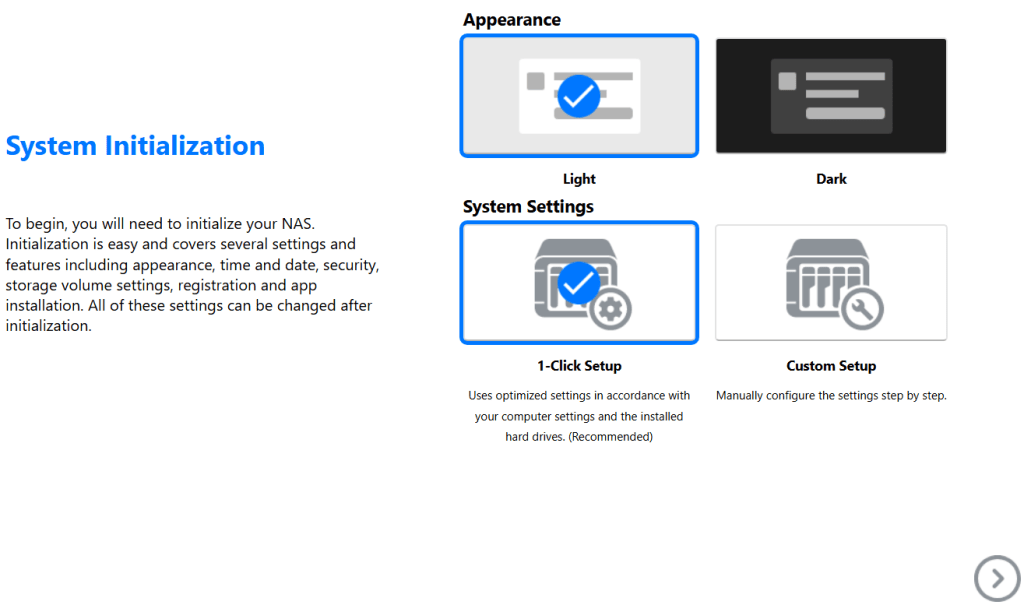

I get to choose my system appearance.

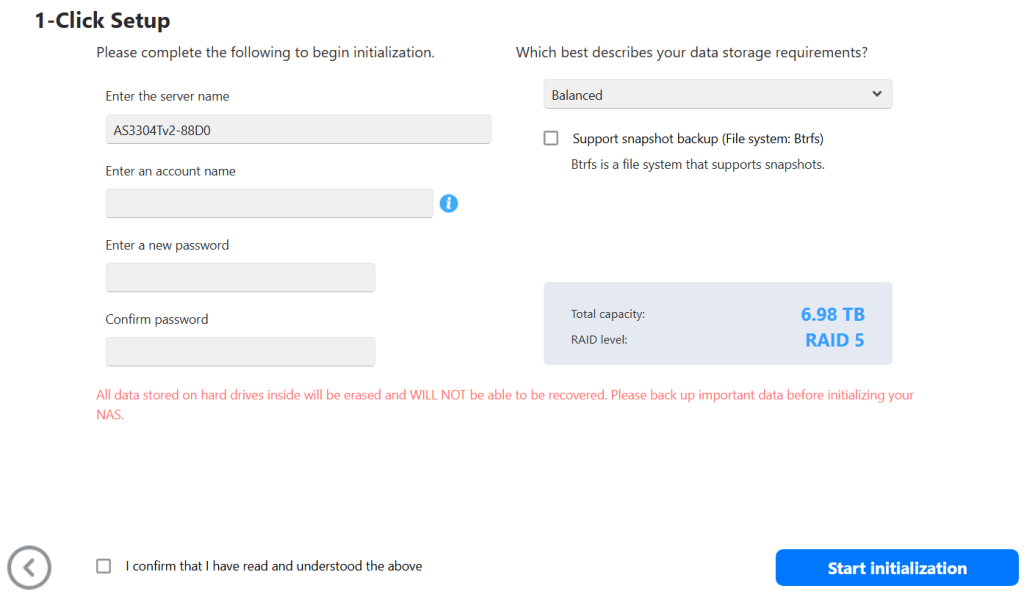

I entered my information.

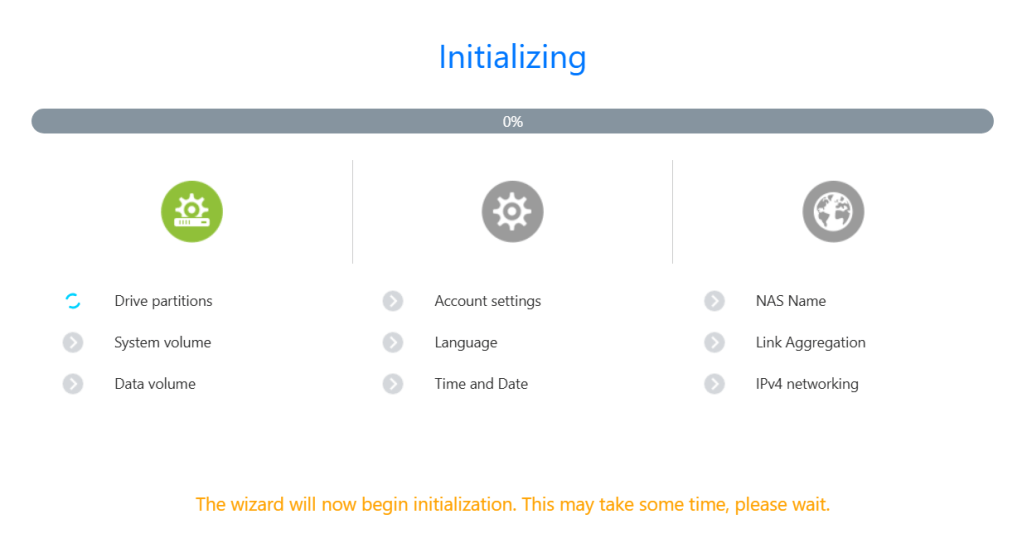

The system does its final initialization.

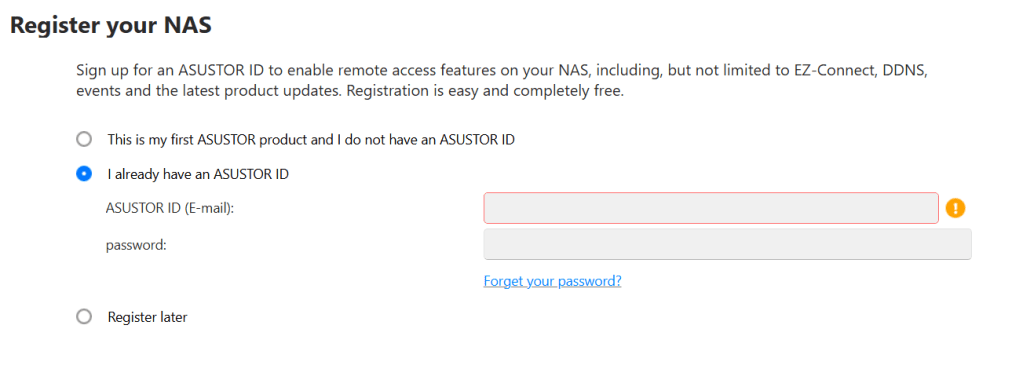

I entered my ASUSTOR ID and then, on the next page, my contact information, region, and language.

All done!

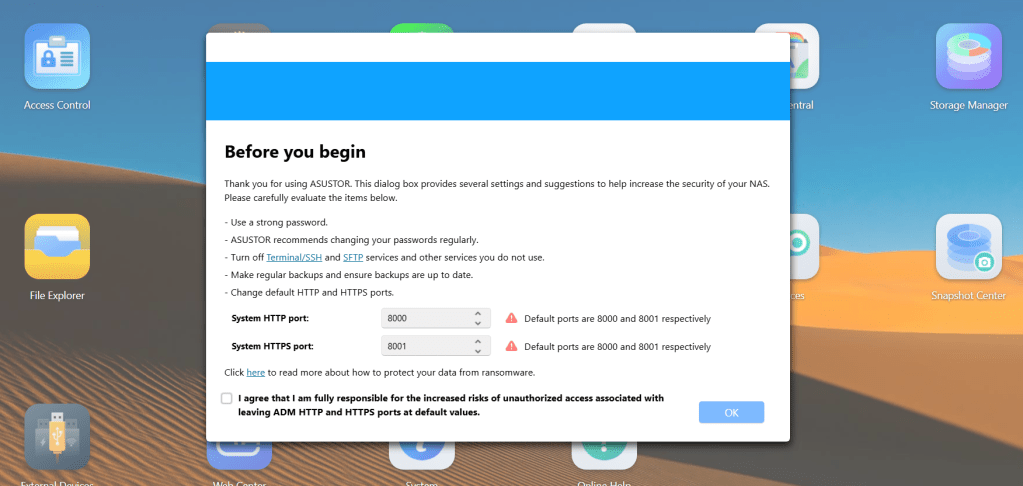

Back in the web interface, I’m prompted to adjust my HTTP and HTTPS ports. I changed them from the defaults of 8000 and 8001.

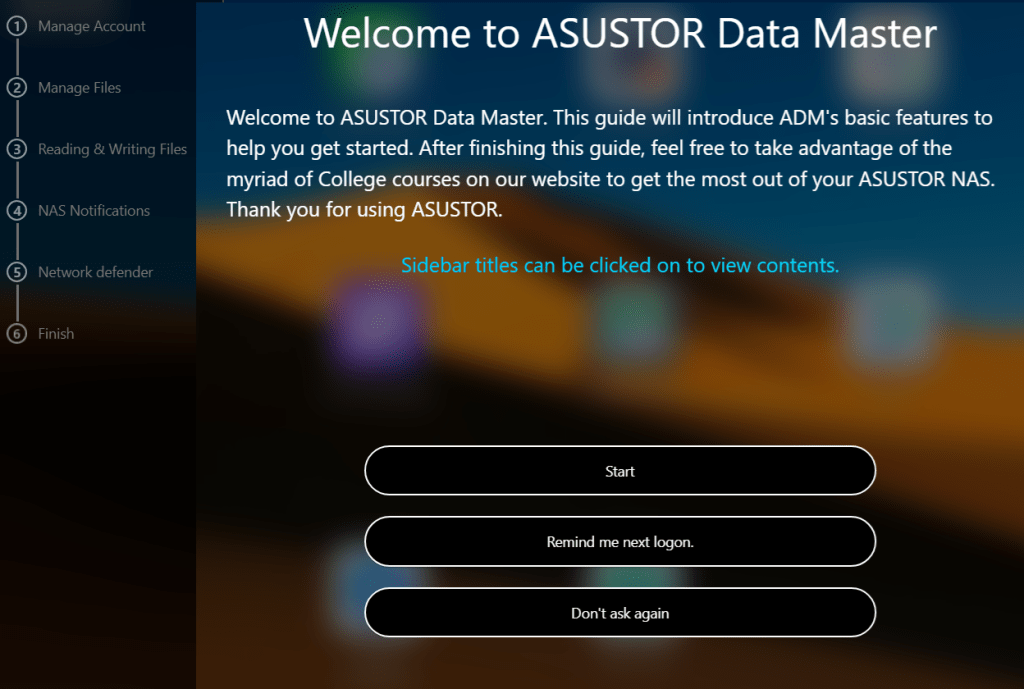

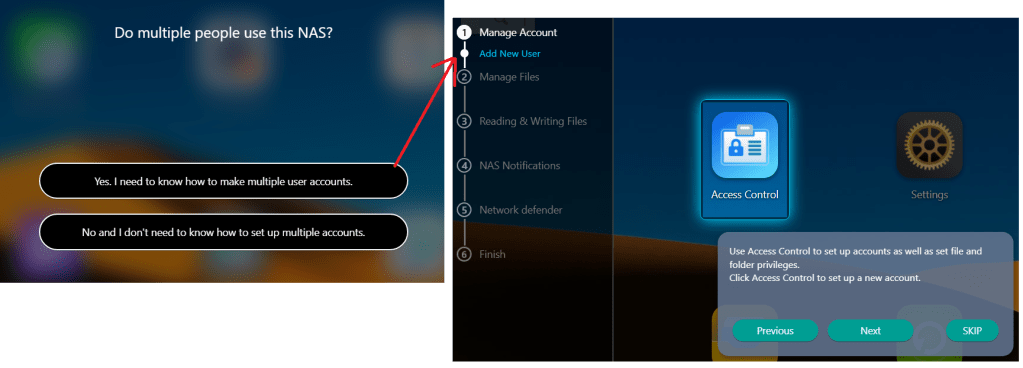

The ADM guide appears, and it is a good way to learn where things are. I’m not going through all 6 steps, but just know it’s a very handy tool.

Here is what the first one looks like.

It actually takes you step by step.

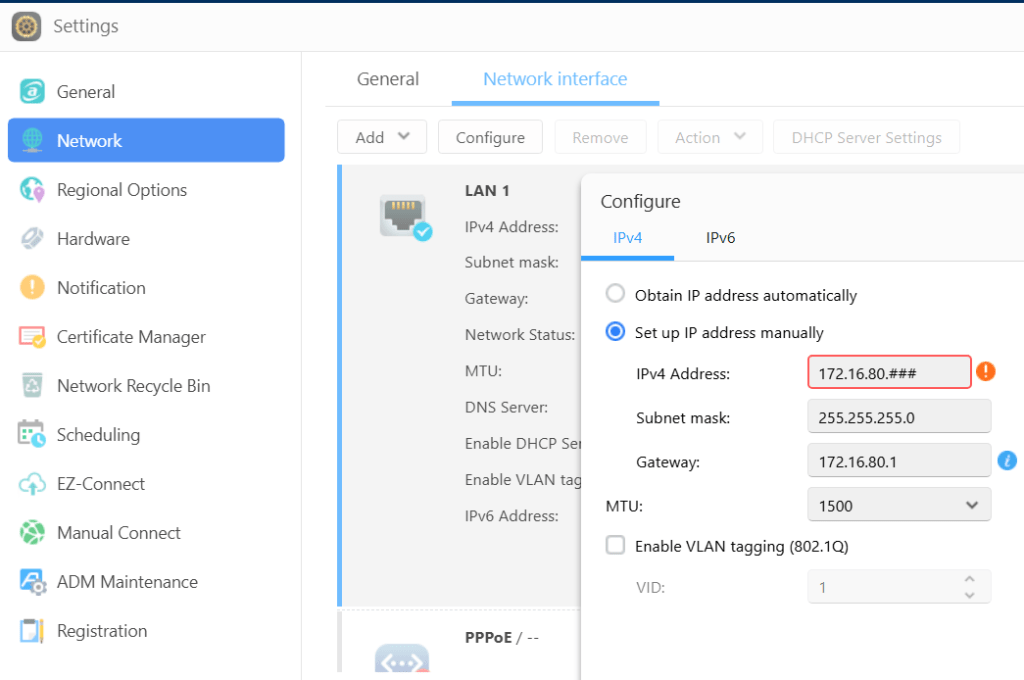

Once I exit the ADM guide, I first set up my network address. Settings > Network > change to manual IP > enter my IP and save it. The web interface reboots into the new IP. Additionally, I disabled IPv6.

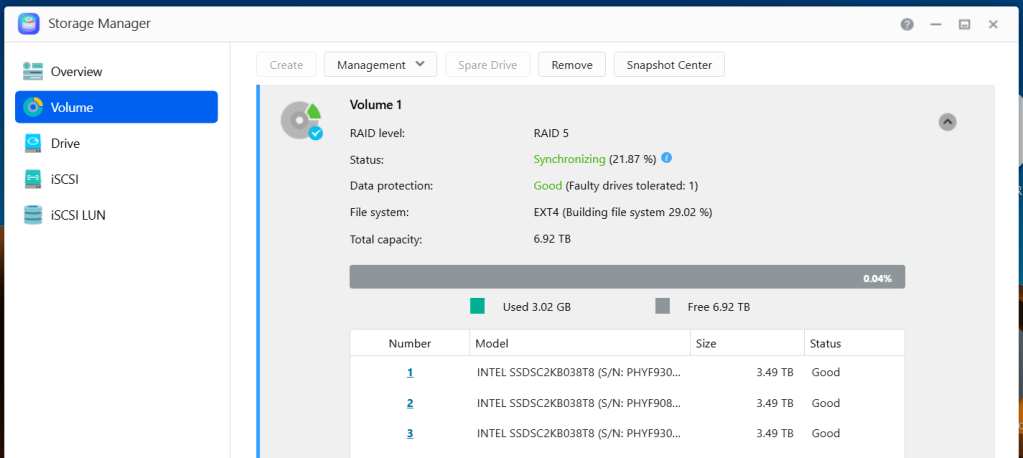

Next I go into the Storage Manager and validate my disks. It also gives me a status of the RAID 5 initialization.

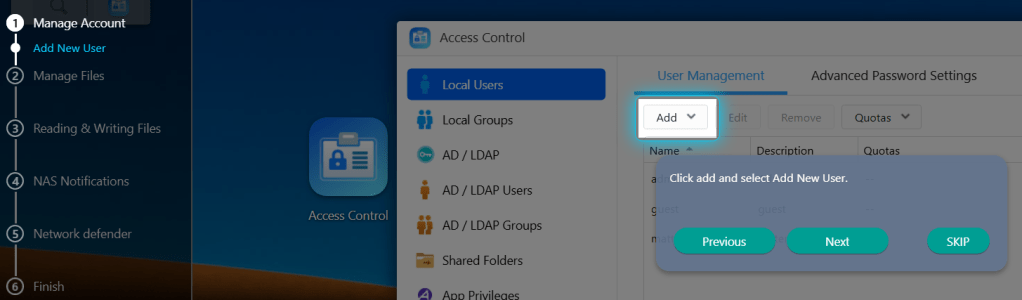

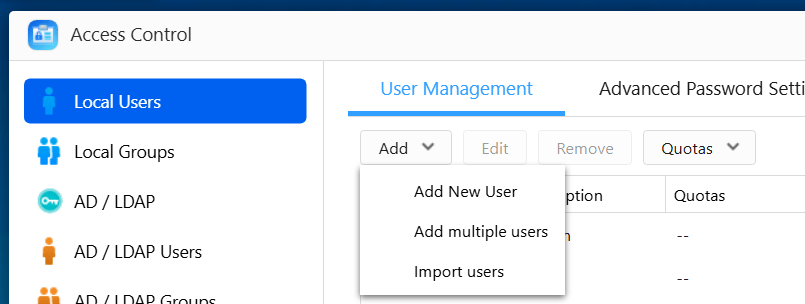

Then, in Access Control, I set up a few user accounts.

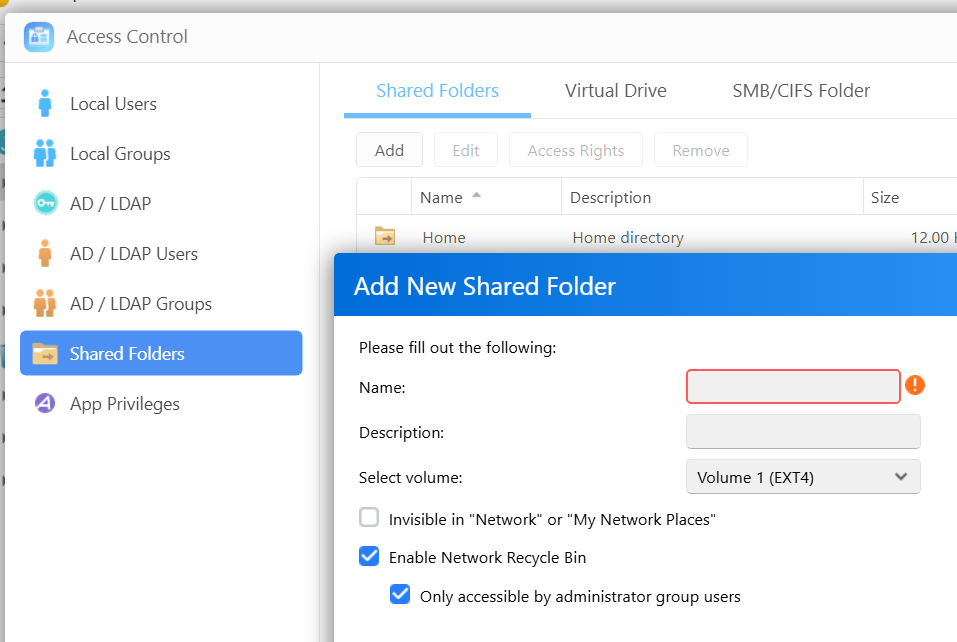

While in Access Control, I set up a shared folder. When the Add Shared Folder window appears, I name my share, select access ‘by user’, choose the user and their access rights, choose whether or not to encrypt, and click Finish to complete the process.

In my experience, ADM is very intuitive and easy to learn. Speaking of learning, ASUSTOR has a FREE NAS Tutorial that explains how to use it! I’ve used it quite a bit, and it is worth a look.

While losing my original DRIVESTOR 2 Pro was a headache, the jump to the DRIVESTOR 4 Pro Gen 2 has been a massive silver lining. With the extra drive redundancy of RAID 5, I feel much more secure about our family’s digital files. It’s faster, quieter, and handles our school and work-from-home needs without breaking a sweat. If you’re looking for a NAS that balances power with a genuinely easy learning curve, ASUSTOR remains my top recommendation.

Using PowerShell to setup AV exceptions for Workstation 25H2u1 and Windows 11

Adding AV exceptions to your Workstation deployment on Windows 11 can really improve the overall performance. In this blog post I’ll share the exclusion script I recently used in my environment.

NOTE: You cannot just cut, paste, and run the script below. There are parts of the script that have to be configured for the intended system.

What the script does.

- For Windows 11 users with Microsoft’s Virus & threat protection enabled this script will tell AV to not scan specific file types, folders, and processes related to VMware Workstation.

- It will ignore exceptions that already exist.

- It will display appropriate messages (Successful, or Already Exists) as it completes tasks.

What is the risk?

- Adding an exception (or exclusion) to antivirus (AV) software, while sometimes necessary for application functionality, significantly lowers the security posture of a device. The primary risk is creating a security blind spot where malicious code can be downloaded, stored, and executed without being detected.

- Use at your own risk. This code is for my personal use.

What will the code do?

It will add several exclusions listed below.

- File Type: Exclude these specific VMware file types from being scanned:
  - .vmdk: Virtual machine disk files (the largest and most I/O intensive).
  - .vmem: Virtual machine paging/memory files.
  - .vmsn: Virtual machine snapshot files.
  - .vmsd: Metadata for snapshots.
  - .vmss: Suspended state files.
  - .lck: Disk consistency lock files.
  - .nvram: Virtual BIOS/firmware settings.
- Folder: Unique to my deployment, it will exclude the following directories to prevent antivirus from interfering with VM operations:
  - VM Storage Folders: Exclude the main directories where I store my virtual machines.
  - Installation Folder: Exclude the VMware Workstation installation path (default: C:\Program Files (x86)\VMware\VMware Workstation).
- Process:
  - vmware.exe: The main Workstation interface.
  - vmware-vmx.exe: The core process that actually runs each virtual machine.
  - vmnat.exe: Handles virtual networking (NAT).
  - vmnetdhcp.exe: Handles DHCP for virtual networks.

The Script

The section ‘# 1. Define your exclusions’ is where I adapted this code to match my environment.

# Check for Administrator privileges

if (-not ([Security.Principal.WindowsPrincipal][Security.Principal.WindowsIdentity]::GetCurrent()).IsInRole([Security.Principal.WindowsBuiltInRole]::Administrator)) {

Write-Warning "Please run this script as an Administrator."

return

}

# 1. Define your exclusions

# This is where you put in YOUR folder exclusions

$folders = @("C:\Program Files (x86)\VMware\VMware Workstation", "D:\Virtual Machines", "F:\Virtual Machines", "G:\Virtual Machines", "H:\Virtual Machines", "I:\Virtual Machines", "J:\Virtual Machines", "K:\Virtual Machines", "L:\Virtual Machines")

# These are the common process exclusions

$processes = @("C:\Program Files (x86)\VMware\VMware Workstation\vmware.exe", "C:\Program Files (x86)\VMware\VMware Workstation\x64\vmware-vmx.exe", "C:\Program Files (x86)\VMware\VMware Workstation\vmnat.exe", "C:\Program Files (x86)\VMware\VMware Workstation\vmnetdhcp.exe")

# These are the common extension exclusions

$extensions = @(".vmdk", ".vmem", ".vmsn", ".vmsd", ".vmss", ".lck", ".nvram")

# Retrieve current settings once for efficiency

$currentPrefs = Get-MpPreference

Write-Host "Checking and applying Windows Defender Exclusions..." -ForegroundColor Cyan

# --- Validate and Add Folders ---

foreach ($folder in $folders) {

if ($currentPrefs.ExclusionPath -contains $folder) {

Write-Host "Note: Folder exclusion already exists, skipping: $folder" -ForegroundColor Yellow

} else {

Add-MpPreference -ExclusionPath $folder

Write-Host "Successfully added folder: $folder" -ForegroundColor Green

}

}

# --- Validate and Add Processes ---

foreach ($proc in $processes) {

if ($currentPrefs.ExclusionProcess -contains $proc) {

Write-Host "Note: Process exclusion already exists, skipping: $proc" -ForegroundColor Yellow

} else {

Add-MpPreference -ExclusionProcess $proc

Write-Host "Successfully added process: $proc" -ForegroundColor Green

}

}

# --- Validate and Add Extensions ---

foreach ($ext in $extensions) {

if ($currentPrefs.ExclusionExtension -contains $ext) {

Write-Host "Note: Extension exclusion already exists, skipping: $ext" -ForegroundColor Yellow

} else {

Add-MpPreference -ExclusionExtension $ext

Write-Host "Successfully added extension: $ext" -ForegroundColor Green

}

}

Write-Host "`nAll exclusion checks complete." -ForegroundColor Cyan

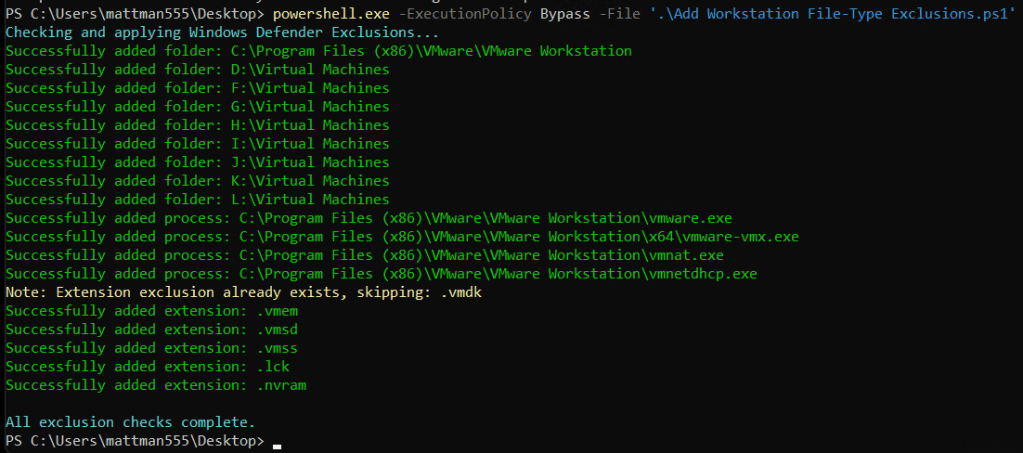

The Output

In the output below, when the script creates an item successfully it will show in green. If it detects a duplicate it will output a message in yellow. I ran the script with a .vmdk exclusion already existing to test it out.

When it’s complete, the AV exclusions in Windows should look similar to the partial screenshot below.

To view the exclusions in Windows 11, open ‘Virus & Threat Protection’ > Manage Settings > under Exclusions, choose ‘Add or remove exclusions’.

Upgrading Workstation 25H2 to 25H2u1

On February 26, 2026, VMware released Workstation Pro 25H2u1, an update that fixes a few bugs and includes security patches. In this blog I’ll cover how to upgrade to it.

Helpful links

- Release Notes: VMware Workstation Pro 25H2u1 Release Notes

- Free Download: Download 25H2u1 (I recommend logging in to support.broadcom.com first, then pasting this link into your browser.)

- Documentation: VMware Workstation Pro 25H2 Documentation

Meet the Requirements:

When installing/upgrading Workstation on Windows most folks seem to overlook the requirements for Workstation and just install the product. You can review the requirements here.

There are a couple of items folks commonly miss when installing Workstation.

- The number one issue is Processor Requirements for Host Systems. Either they have a CPU that is not supported, or they simply did not enable virtualization support in the BIOS.

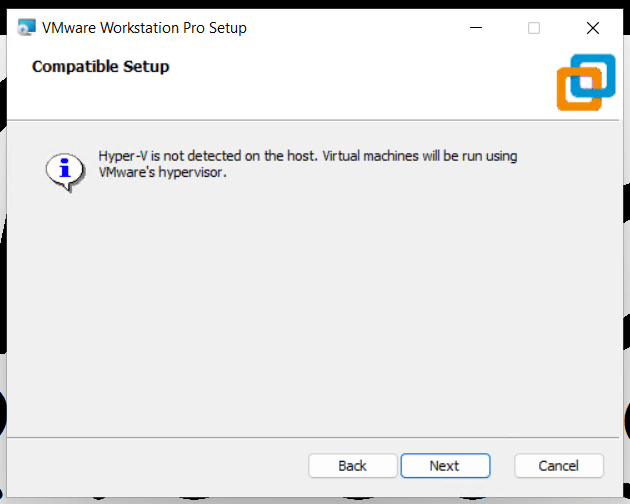

- The second item is Microsoft Hyper-V enabled systems. Workstation supports a system with Hyper-V enabled, but for the BEST performance it’s best to just disable these features.

- Next, if you’ve upgraded your OS but never reinstalled Workstation, then it’s advised to uninstall and then reinstall Workstation.

- Lastly, if doing a fresh OS install, ensure drivers are updated and DirectX is at a supported version.

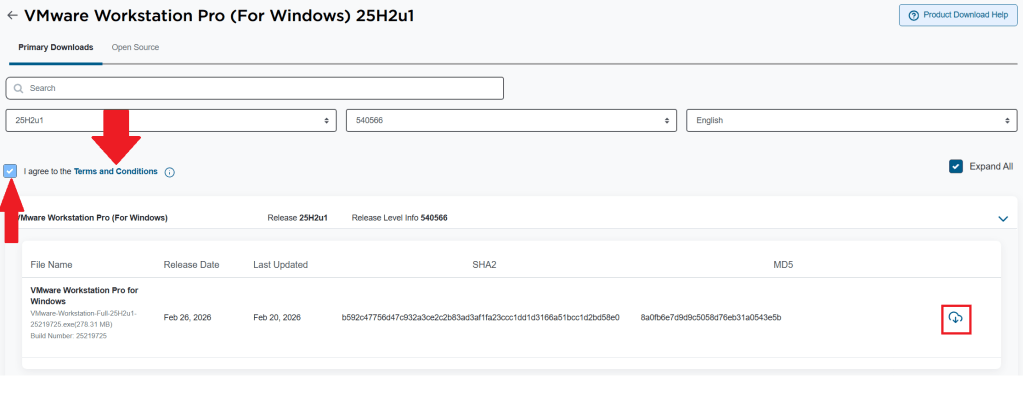

How to download Workstation

Download the Workstation Pro 25H2u1 for Windows. Make sure you click on the ‘Terms and Conditions’ AND check the box. Only then can you click on the download icon.

Choose your install path

Before you do a fresh install:

- Comply with the requirements

- For Accelerated 3D Graphics, ensure DirectX and Video card drivers are updated

- Review: Install Workstation Pro on a Windows Host

If you are upgrading Workstation, review the following:

- Ensure your environment is compatible with the requirements

- If you have existing VMs:

- Document your network settings

- Shut down your current version of Workstation

- Review: Upgrading Workstation Pro

Note: If you are upgrading the Windows OS or in the past have done a Windows Upgrade (Win 10 to 11), you must uninstall Workstation first, and then reinstall Workstation. More information here.

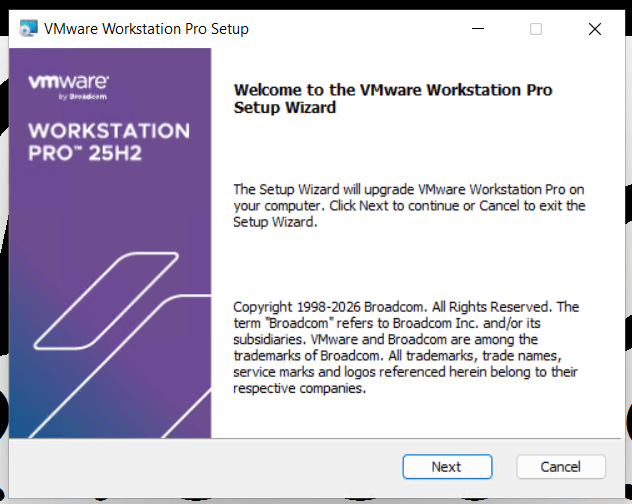

Upgrading Workstation 25H2 to 25H2u1

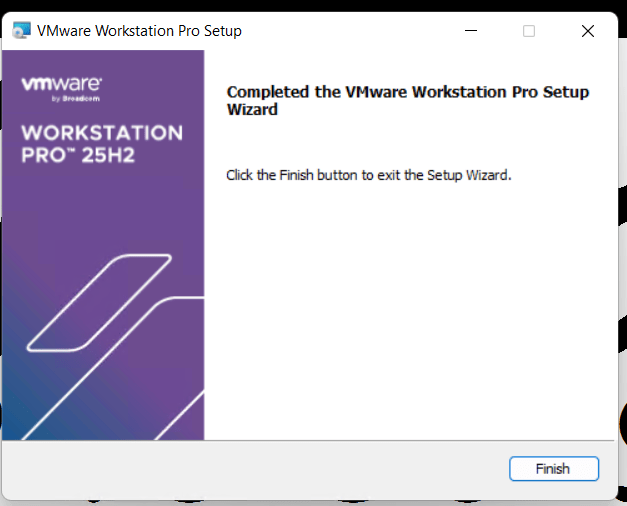

Run the downloaded file, wait a minute for it to confirm space requirements, then click Next.

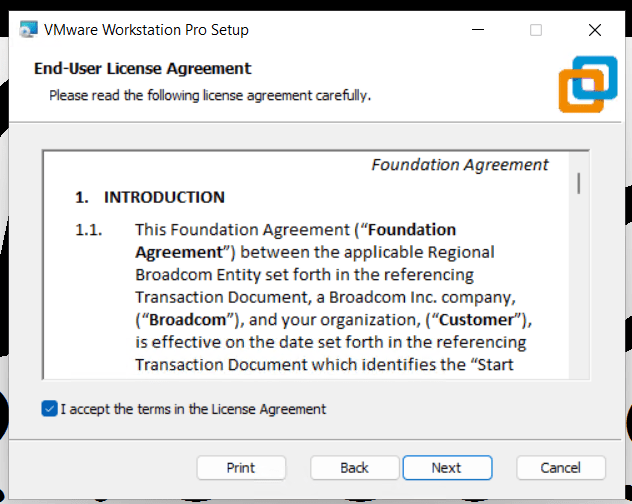

Accept the EULA.

Compatible setup check

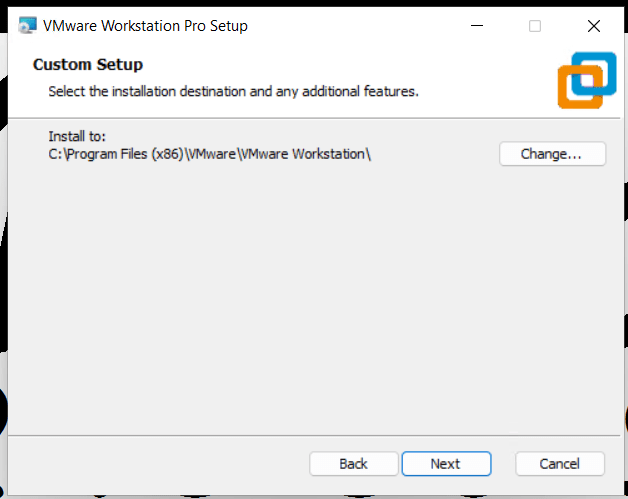

Confirm install directory

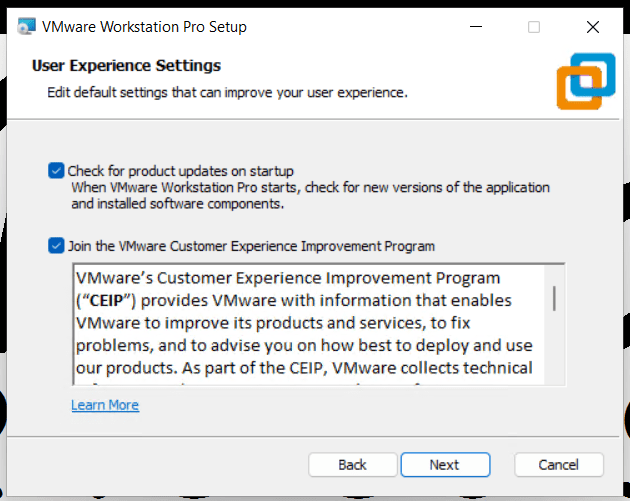

Check for updates and join the CEIP program.

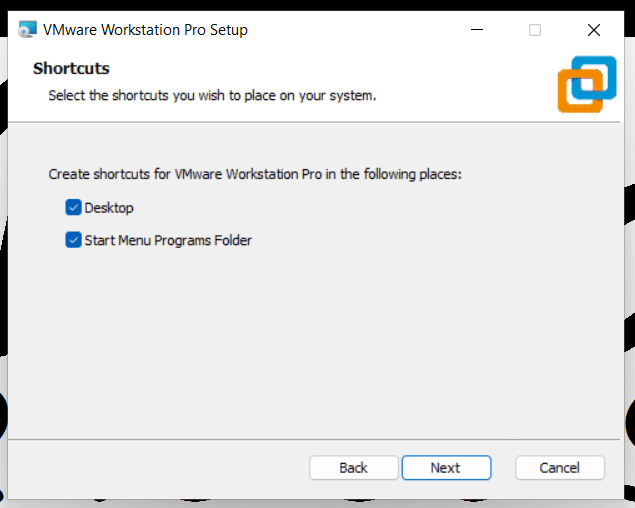

Allow it to create shortcuts

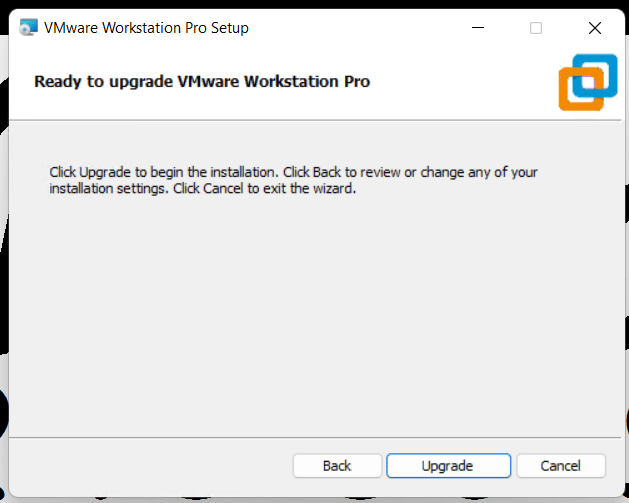

Click Upgrade to complete the upgrade

Click on Finish to complete the Wizard.

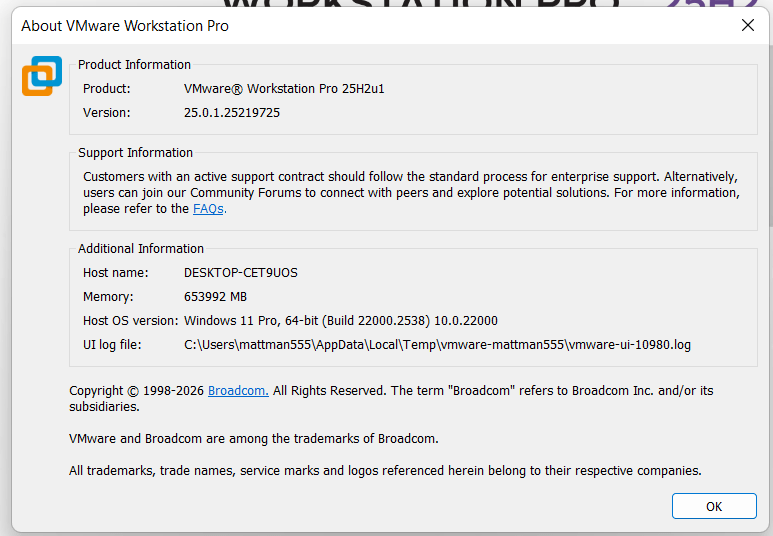

Lastly, check the version number of Workstation. Open Workstation > Help > About

My Silicon Treasures: Mapping My Home Lab Motherboards Since 2009

I’ve been architecting home labs since the 90s, an era dominated by bare-metal Windows Servers and Cisco products. In 2008, my focus shifted toward virtualization, specifically building out VMware-based environments. What began as repurposing spare hardware for VMware Workstation quickly evolved. As my resource requirements scaled, I transitioned to dedicated server builds. Aside from a brief stint with Gen8 enterprise hardware, my philosophy has always been “built, not bought,” favoring custom component selection over off-the-shelf rack servers. I’ve documented this architectural evolution over the years, and in this post, I’m diving into the specific motherboards that powered my past home labs.

Gen 1: 2009-2011 GA-EP43-UD3L Workstation 7 | ESX 3-4.x

Back in 2009, I was working for a local hospital in Phoenix and running the Phoenix VMUG. I deployed a Workstation 7 home lab on this Gigabyte motherboard. Though my deployment was simple, I was able to deploy ESX 3.5 – 4.x with only 8GB of RAM and attach it to an IOMega ix4-200d. I used it at our Phoenix VMUG meetings to teach others about home labs. I found the receipt for the CPU ($150) and motherboard ($77). Wow, prices sure have changed.

REF Link – Home Lab – Install of ESX 3.5 and 4.0 on Workstation 7

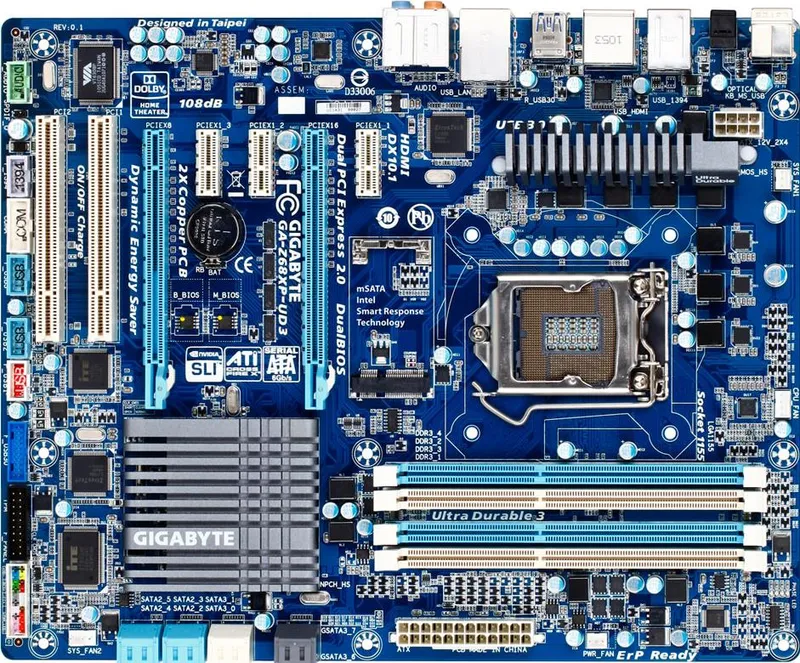

Gen2: 2011-2013 Gigabyte GA-Z68XP-UD3 Workstation 8 | ESXi 4-5

Gen1 worked quite well for what I needed, but it was time to expand as I started working for VMware as a Technical Account Manager. I needed to keep my skills sharp and deploy more complex home lab environments. Though I didn’t know it back then, this was the start of my HOME LABS: A DEFINITIVE GUIDE. I really started to blog about the plan to update and why I was making different choices. I ran into a very unique issue that not even Gigabyte or Hitachi could figure out, which I blogged about here.

Deployed with an i7-2600 ($300), Gigabyte GA-Z68XP-UD3 ($150), and 16GB DDR3 RAM

REF Link: Update to my Home Lab with VMware Workstation 8 – Part 1 Why

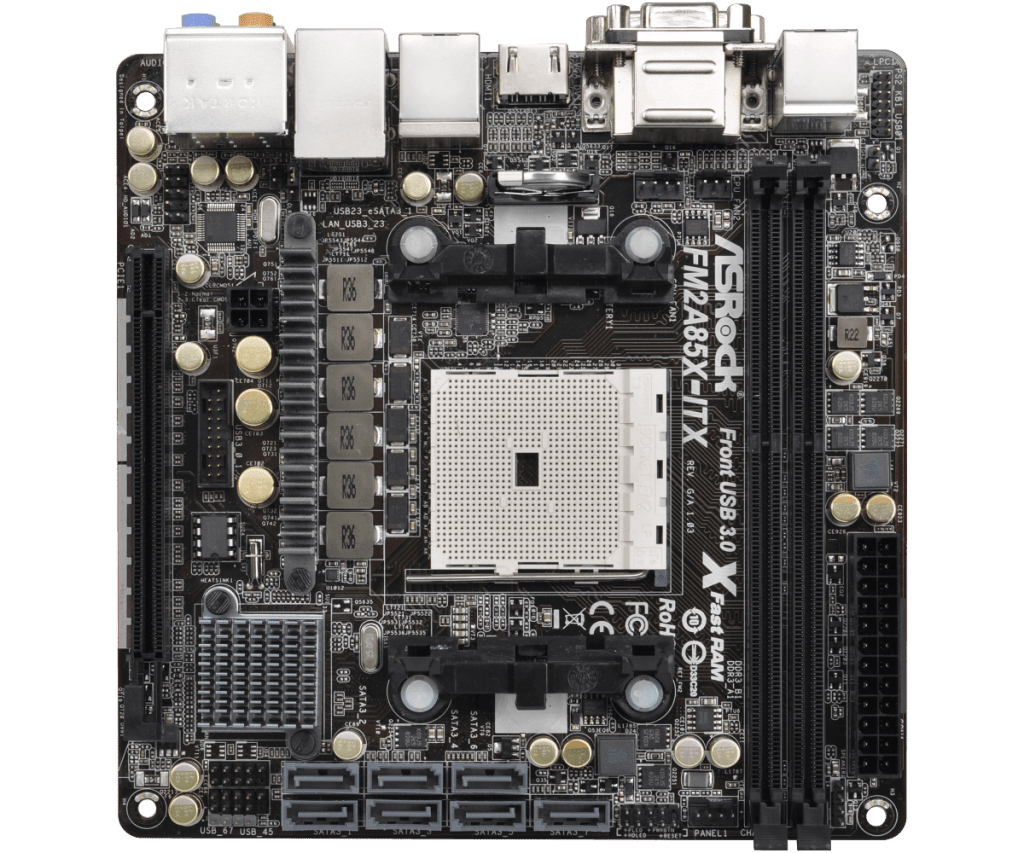

Gen2: Zotac M880G-ITX then the ASRock FM2A85X-ITX | FreeNAS Server

Back in the day I needed better performance from my shared storage as the IOMega had reached its limits. Enter the short-lived FreeNAS server in my home lab. Yes, it did perform better, but man, it was full of bugs and issues, some due to the Zotac motherboard and some to FreeNAS. I was happy to be moving on to vSAN with Gen3.

REF: Home Lab – freeNAS build with LIAN LI PC-Q25, and Zotac M880G-ITX

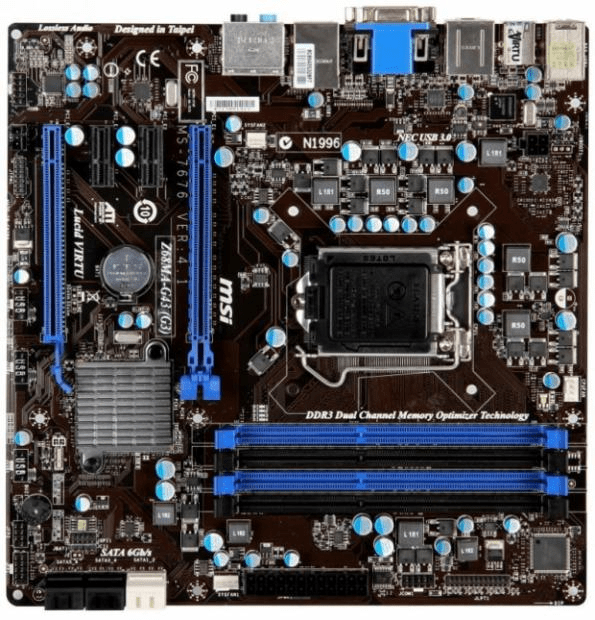

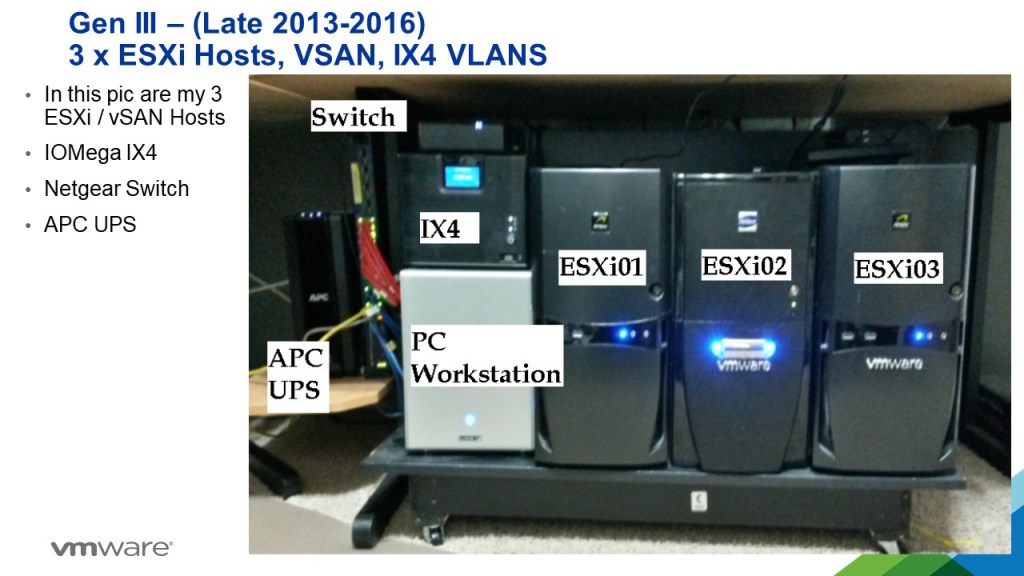

Gen3: 2012-2016 MSI Z68MA-G45 (B3) | ESXi 5-6

I needed to expand my home lab into dedicated hosts. Enter the MSI Z68MA-G45 (B3). It would become my workhorse expanding it from one server with the Gen 2 Workstation to 3 dedicated hosts running vSAN.

REF: VSAN – The Migration from FreeNAS

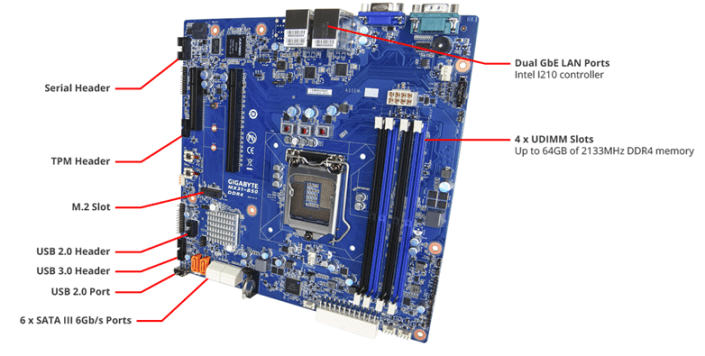

Gen4: 2016-2019 Gigabyte MX31-BS0

This mobo was used in my ‘To InfiniBand and beyond’ blog series. It had some “wonkiness” about its firmware updates, but other than that it was a solid performer. Deployed with an E3-1500 and 32GB RAM.

REF: Home Lab Gen IV – Part I: To InfiniBand and beyond!

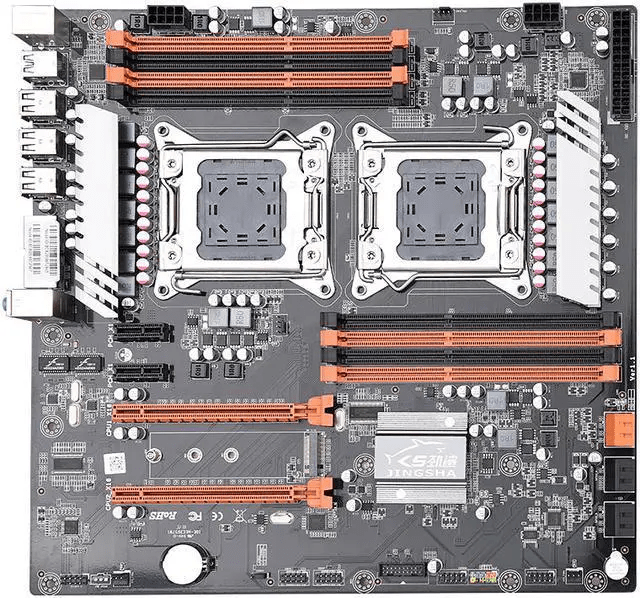

Gen 5: 2019-2020 JINGSHA X79

I had maxed out Gen 4 and really needed to expand my CPU cores and RAM. Hence the blog series title – ‘The Quest for More Cores!’. Deployed with 128GB RAM and an 8-core Xeon E5-2640 v2, it fit the bill. This series is where I started YouTube videos and documenting my builds per my design guides. Though this mobo was good for its design, its lack of PCIe slots made it short-lived.

REF: Home Lab GEN V: The Quest for More Cores! – First Look

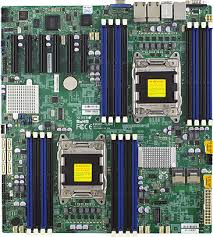

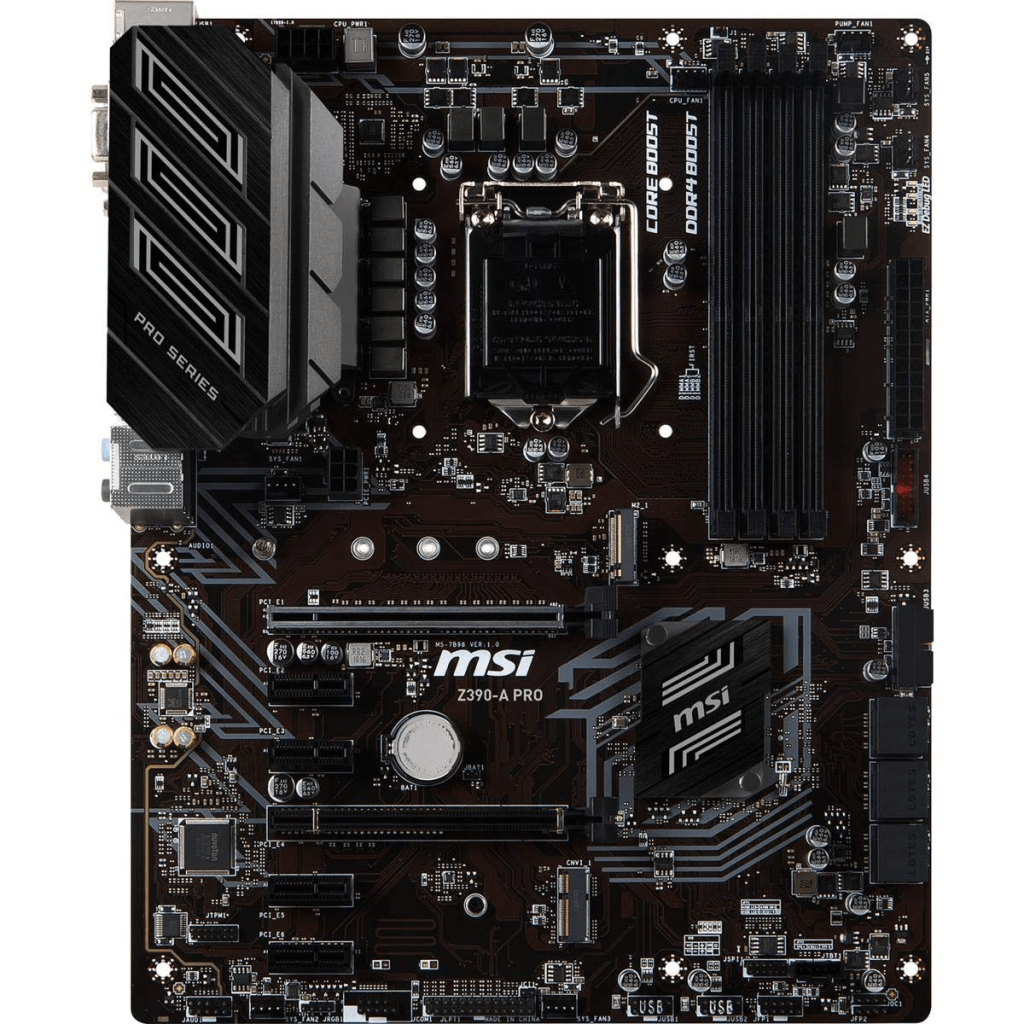

Gen 7: 2020-2023: Supermicro X9DRD-7LN4F-JBOD and the MSI PRO Z390-A PRO

The Gen 5 motherboard fell short when I wanted to deploy an all-flash vSAN based on NVMe. With this Supermicro motherboard I had no issues with IO and deploying it as all-flash vSAN. It also caught the attention of Intel, who offered me their Optane drives to create an all-flash Optane system. More on that in Gen 8.

The MSI motherboard was a needed update to my VMware Workstation system. I built it up as a Workstation / Plex server and it did this job quite well.

This generation is when I started to align my Gen#s to vSphere releases. Makes it much easier to track.

REF: Home Lab Generation 7: Updating from Gen 5 to Gen 7

Gen 8: 2023-2024 Dell T7820 VMware Dedicated Hosts

With some support from Intel I was able to uplift my 3 x Dell T7820 workstations into a great home lab. They supplied Engineering Sample CPUs, RAM, and Optane disks. Plus, I was able to coordinate the distribution of Optane disks to vExperts globally. It was a great home lab and I learned a ton!

REF: Home Lab Generation 8 Parts List (Part 2)

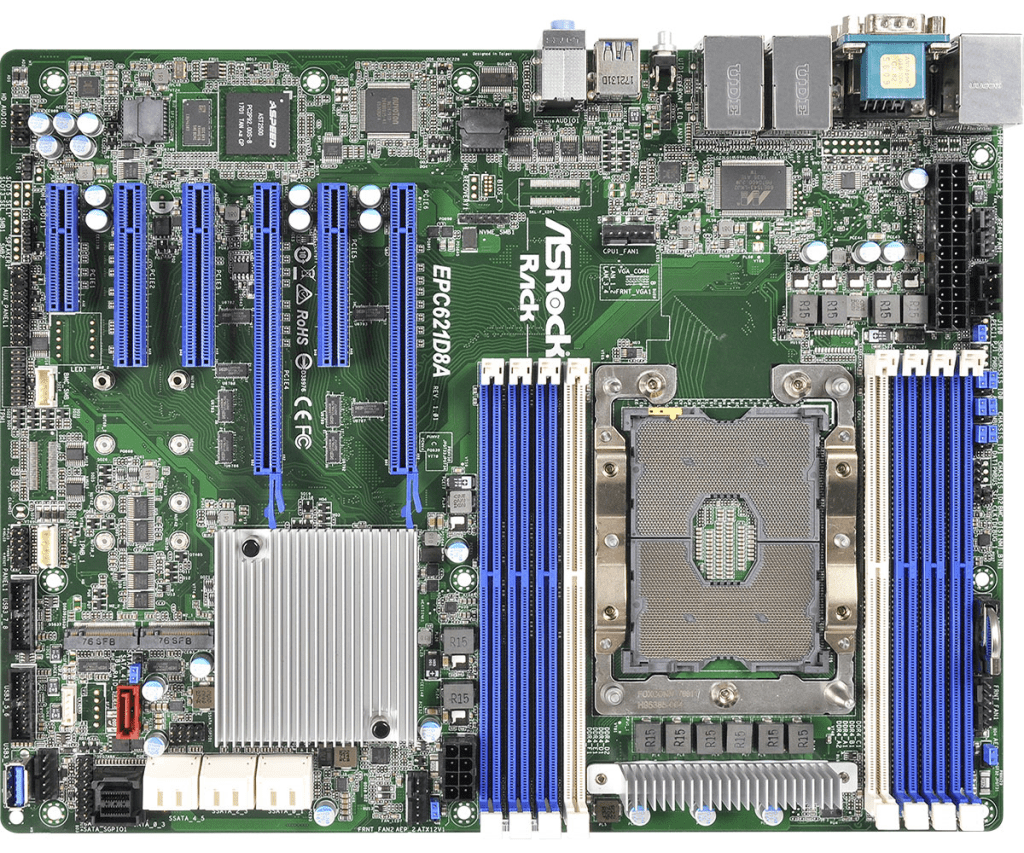

Gen 8-9: 2023-2026 ASRock Rack EPC621D8A VMware Workstation Motherboard

Evolving my Workstation PC, I used this ASRock Rack motherboard. It was the perfect solution for running nested clusters of ESXi VMs with vSAN ESA. Until most recently it was a really solid mobo, and I even got it to run a nested VCF 9 simple install.

REF: Announcing my Generation 8 Super VMware Workstation!

Gen 9: 2024 – Current

As of this date it’s still under development. See my Home Lab BOM for more information. However, I’m moving my home lab to only nested VCF 9 deployments on Workstation, not dedicated servers.

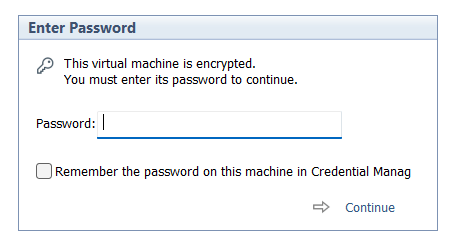

Windows 11 Workstation VM asking for encryption password that you did not explicitly set

I had created a Windows 11 VM on Workstation 25H2 and then moved it to a new deployment of Workstation. Upon power-up, the VM stated I must supply a password (fig-1) as the VM was encrypted. In this post I’ll cover why this happened and how I got around it.

Note: Disabling TPM/Secure Boot is not recommended for any system. Additionally, bypassing security leaves systems open for attack. If you are curious about VMware system hardening, check out this great video by Bob Plankers.

(Fig-1)

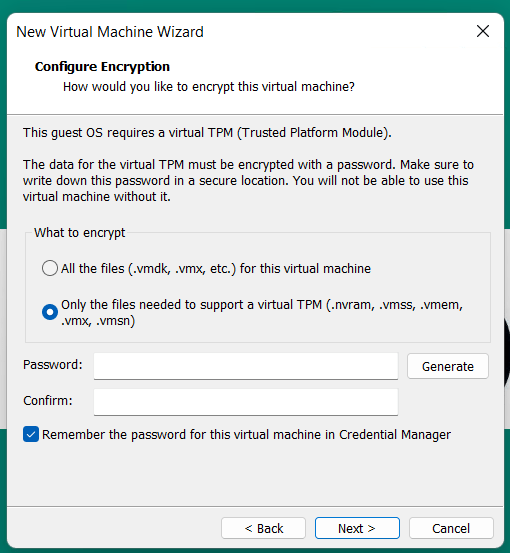

Why did this happen? As of VMware Workstation 17, encryption is required for a VM with a TPM 2.0 device, which is a requirement for Windows 11. When you create a new Windows 11 x64 VM, the New VM Wizard (fig-2) asks you to set an encryption password or use an auto-generated one. This enables the VM to support the Windows 11 requirements for TPM/Secure Boot.

(Fig-2)

I didn’t set a password, where is the auto-generated password kept? If you allowed VMware to “auto-generate” the password, it is likely stored in your host machine’s credential manager. For Windows, open the Windows Credential Manager (search for “Credential Manager” in the Start Menu). Look for an entry related to VMware, specifically something like “VMware Workstation”.

I don’t have access to the PC where the auto-generated password was kept, so how did I get around this? All I did was edit the VM’s VMX configuration file, commenting out the following entries, and then add the VM back into Workstation. Note: this will remove the vTPM device from the virtual hardware, which is not recommended.

# vmx.encryptionType

# encryptedVM.guid

# vtpm.ekCSR

# vtpm.ekCRT

# vtpm.present

# encryption.keySafe

# encryption.data
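For anyone who would rather script that edit than do it by hand, here is a minimal Python sketch. It comments out only the keys listed above; the helper names `comment_out` and `patch_vmx` are hypothetical, and it assumes Workstation is closed and that the .vmx file is readable as UTF-8 text. It saves a .bak copy first, since the same caution applies: this removes the vTPM and is not recommended.

```python
from pathlib import Path
import shutil

# Keys from the list above; matching lines get a leading "# ".
KEYS = (
    "vmx.encryptionType", "encryptedVM.guid", "vtpm.ekCSR", "vtpm.ekCRT",
    "vtpm.present", "encryption.keySafe", "encryption.data",
)


def comment_out(vmx_text: str, keys=KEYS) -> str:
    """Return vmx_text with every 'key = value' line for the given keys commented out."""
    wanted = {k.lower() for k in keys}
    out = []
    for line in vmx_text.splitlines():
        # The key is everything before the first "="; already-commented lines will not match.
        key = line.split("=", 1)[0].strip().lower()
        out.append("# " + line if key in wanted else line)
    return "\n".join(out) + "\n"


def patch_vmx(path: str) -> None:
    """Back up the .vmx file next to the original, then rewrite it in place."""
    vmx = Path(path)
    shutil.copy2(vmx, vmx.with_name(vmx.name + ".bak"))  # untouched copy for rollback
    vmx.write_text(comment_out(vmx.read_text(encoding="utf-8")), encoding="utf-8")
```

Running `comment_out` twice gives the same result as running it once, so re-running the script on an already patched file is harmless.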

How could I avoid this going forward? 2 Options

Option 1 – When creating the VM, set and record the password.

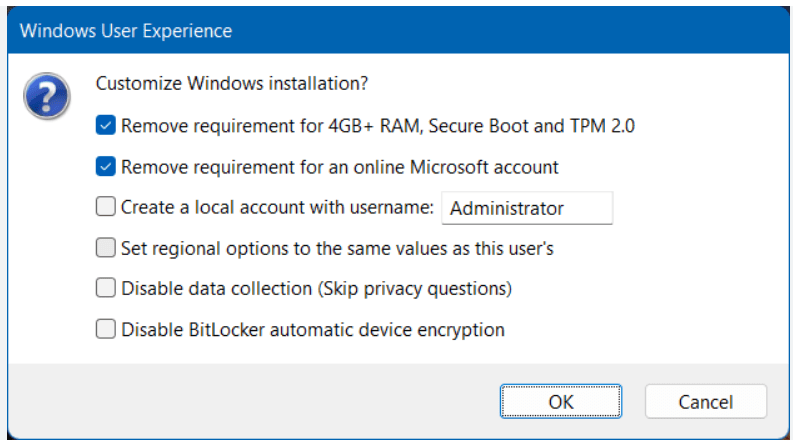

Option 2 – To avoid this altogether, use Rufus to create a new VM without TPM/Secure Boot enabled.

- Use Rufus to create a bootable USB drive with Windows 11. When prompted, choose the options to disable Secure Boot and TPM 2.0.

- Once the USB is created, create a new Windows 11 x64 VM in Workstation.

- For creation options choose Typical > choose I will install the OS later > choose Win11x64 for the OS > choose a name/location > note the encryption password > Finish

- When the VM is completed, edit its settings > remove the Trusted Platform Module > then go to Options > Access Control > Remove Encryption > put in the password to remove it > OK

- Now attach the Rufus USB to the VM and boot to it.

- From there install Windows 11.

Wrapping this up: bypassing security allowed me to access my VM again. However, it leaves the VM more vulnerable to attack. In the end, I enabled security on this VM and properly recorded its password.

VMware Workstation Gen 9: FAQs

I compiled a list of frequently asked questions (FAQs) around my Gen 9 Workstation build. I’ll be updating it from time to time, but do feel free to reach out if you have additional questions.

Last Update: 03/31/2026

General FAQs

Why Generation 9? Starting with the Gen 7 build, the Gen number aligns to the version of vSphere it was designed for. So, Gen 9 = VCF 9. It also helps my readers track the generations that interest them the most.

Why are you running Workstation vs. dedicated ESX servers? I’m pivoting my home lab strategy. I’ve moved from a complex multi-server setup to a streamlined, single-host configuration using VMware Workstation. Managing multiple hosts, though it gives real-world experience, wasn’t meeting my needs when it came to quick system recovery or testing different software versions. With Workstation, I can run/deploy multiple types of home labs and do simple backup/recovery, plus Workstation’s snapshot manager allows me to roll back labs quite quickly. I find Workstation more adaptable, and it makes my lab time about learning rather than maintenance.

What are your goals with Gen 9? To develop and build a platform that is able to run the stack of VCF 9 products for home lab use. See Gen 9 Part 1 for more information on goals.

Where can I find your Gen 9 Workstation Build series? All of my most popular content, including the Gen 9 Workstation builds can be found under Best of VMX.

What version of Workstation are you using? Currently, VMware Workstation 25H2. This may change over time; see my Home Lab BOM for more details.

How performant is running VCF 9 on Workstation? In my testing I’ve had adequate success with a simple VCF install on BOM1. Clicks throughout the various applications didn’t seem to lag. I plan to expand to a full VCF install under BOM2 and will do some performance testing soon.

What core services are needed to support this VCF Deployment? Core Services are supplied via Windows Server. They include AD, DNS, NTP, RAS, and DHCP. DNS, NTP, and RAS being the most important.

BOM FAQs

Where can I find your Bill of Materials (BOM)? See my Home Lab BOM page.

Why 2 BOMs for Gen 9? Initially, I started with the hardware I had, and this became BOM1. It worked perfectly for a simple VCF install. Eventually, I needed to expand my RAM to support the entire VCF stack. I had 32GB DDR4 modules on hand, but the BOM1 motherboard was fully populated. It was less expensive to buy a motherboard that had enough RAM slots, plus I could add in a 2nd CPU. This upgrade became BOM2. Additionally, it gives my readers some ideas of different configurations that might work for them.

What topics does the BOM1 Series cover? The BOM1 series provides a comprehensive, step-by-step guide for deploying VMware Cloud Foundation (VCF) 9.0.1 in a nested lab environment. The series covers the full lifecycle, including initial planning and requirements (Part 1), the use of templates (Part 2), and setting up essential core network services (Part 3).

Subsequent steps detail the deployment of ESXi hosts (Part 4) and configuring the VCF installer with necessary VLAN segmentation (Part 5). To optimize the setup, the guide covers creating an offline repository for VCF components (Part 6), followed by the deployment of VCF 9.0.1 (Part 7).

Finally, the series outlines critical “day-two” operations, such as licensing the environment (Part 8) and managing the proper shutdown and startup procedures for the nested infrastructure (Part 9). This approach creates a fully functional, reproducible lab for testing the latest VMware technologies. For the full series, visit VMExplorer.

What can I run on BOM1? I have successfully deployed a simple VCF deployment, but I don’t recommend running VCF Automation on this BOM. See the Best of VMX section for a 9 part series.

What VCF 9 products are running in BOM1? Initial components include: VCSA, VCF Operations, VCF Collector, NSX Manager, Fleet Manager, and SDDC Manager all running on the 3 x Nested ESX Hosts.

What are your plans for BOM2? Currently, under development but I would like to see if I could push the full VCF stack to it.

What can I run on BOM2? Under development, updates soon.

Are you running both BOM configurations? No, I’m only running one at a time. Currently, I’m running BOM2.

Do I really need this much hardware? No, you don’t. The parts listed on my BOM are just how I did it. I used some parts I had on hand and some I bought used. My recommendation is to use what you have and upgrade when you need to.

What should I do to help with performance? Invest in high-speed disks, CPU cores, and RAM. I highly recommend lots of properly deployed NVMe disks for your nested ESX hosts. Make performance adjustments to Windows 11.

What do I need for multiple NVMe Drives? If you plan to use multiple NVMe drives in a single PCIe slot, you’ll need a motherboard that supports bifurcation OR a PCIe NVMe adapter that will support it. Not all NVMe adapters are the same, so do your research before buying.

VMware Workstation Gen 9: Part 9 Shutting down and starting up the environment

Deploying the VCF 9 environment on to Workstation was a great learning process. However, I use my server for other purposes and rarely run it 24/7. After its initial deployment, my first task is shutting down the environment, backing it up, and then starting it up. In this blog post I’ll document how I accomplish this.

NOTE:

- Licensing should be completed for a VCF 9 environment before performing the steps below. If not, the last step, vSAN Shutdown, will cause an error. There is a simple workaround.

- I do fully complete each step before moving to the next. Some steps can take some time to complete.

How to shutdown my VCF Environment.

My main reference for VCF 9 shutdown procedures is the VCF 9 Documentation on techdocs.broadcom.com. The section on “Shutdown and Startup of VMware Cloud Foundation” is well detailed, and I have placed the main URL in the reference URLs below. For my environment I need to focus on shutting down my Management Domain, as it also houses my Workload VMs.

Here is the order in which I shutdown my environment. This may change over time as I add other components.

Note – it is advised to complete each step fully before proceeding to the next step.

| Shutdown Order | SDDC Component |

|---|---|

| In vCenter, shut down all non-essential guest VMs | |

| 1 – Not needed, not deployed yet | VCF Automation |

| 2 – Not needed, not deployed yet | VCF Operations for Networks |

| 3 – From vcsa234, locate the VCF Operations collector appliance (opscollectorapplaince). – Right-click the appliance and select Power > Shut down Guest OS. – In the confirmation dialog box, click Yes. – Wait for it to fully power off | VCF Operations collector |

| 4 – Not needed, not deployed yet | VCF Operations for logs |

| 5 – Not needed, not deployed yet | VCF Identity Broker |

| 6 – From vcsa234, in the VMs and Templates inventory, locate the VCF Operations fleet management appliance (fleetmgmtappliance.nested.local) – Right-click the VCF Operations fleet management appliance and select Power > Shut down Guest OS. – In the confirmation dialog box, click Yes. – Wait for it to fully power off | VCF Operations fleet management |

| 7 – You shut down VCF Operations by first taking the cluster offline and then shutting down the appliances of the VCF Operations cluster. – Log in to the VCF Operations administration UI at the https://vcfcops.nested.local/admin URL as the admin local user. – Take the VCF Operations cluster offline. On the System status page, click Take cluster offline. – In the Take cluster offline dialog box, provide the reason for the shutdown and click OK. – Wait for the Cluster status to read Offline. This operation might take about an hour to complete. (With no data, mine took <10 mins.) – Log in to vCenter for the management domain at https://vcsa234.nested.local/ui as a user with the Administrator role. – There could be other options for shutting down this appliance. Using Broadcom KB 341964 as a reference, I determined my next step is to simply right-click the vcfcops appliance and select Power > Shut down Guest OS. – In the VMs and Templates inventory, locate a VCF Operations appliance. – Right-click the appliance and select Power > Shut down Guest OS. – In the confirmation dialog box, click Yes. – This operation takes several minutes to complete. – Wait for it to fully power off | VCF Operations |

| 8 – Not Needed, not deployed yet | VMware Live Site Recovery for the management domain |

| 9 – Not Needed, not deployed yet | NSX Edge nodes |

| 10 – I continue shutting down the NSX infrastructure in the management domain and a workload domain by shutting down the one-node NSX Manager by using the vSphere Client. – Log in to vCenter for the management domain at https://vcsa234.nested.local/ui as a user with the Administrator role. – Identify the vCenter instance that runs NSX Manager. – In the VMs and Templates inventory, locate the NSX Manager (nsxmgr.nested.local) appliance. – Right-click the NSX Manager appliance and select Power > Shut down Guest OS. – In the confirmation dialog box, click Yes. – This operation takes several minutes to complete. – Wait for it to fully power off | NSX Manager |

| 11 – Shut down the SDDC Manager appliance in the management domain by using the vSphere Client. – Log in to vCenter for the management domain at https://vcsa234.nested.local/ui as a user with the Administrator role. – In the VMs and templates inventory, expand the management domain vCenter Server tree and expand the management domain data center. – Right-click the SDDC Manager appliance (SDDCMGR108.nested.local) and click Power > Shut down Guest OS. – In the confirmation dialog box, click Yes. – This operation takes several minutes to complete. – Wait for it to fully power off | SDDC Manager |

| 12 – You use the vSAN shutdown cluster wizard in the vSphere Client to shut down gracefully the vSAN clusters in a management domain. The wizard shuts down the vSAN storage and the ESX hosts added to the cluster. – Identify the cluster that hosts the management vCenter for this management domain. – This cluster must be shut down last. – Log in to vCenter for the management domain at https://vcsa234.nested.local/ui as a user with the Administrator role. – For a vSAN cluster, verify the vSAN health and resynchronization status. – In the Hosts and Clusters inventory, select the cluster and click the Monitor tab. – In the left pane, navigate to vSAN Skyline health and verify the status of each vSAN health check category. – In the left pane, under vSAN Resyncing objects, verify that all synchronization tasks are complete. – Shut down the vSAN cluster. – In the inventory, right-click the vSAN cluster and select vSAN > Shutdown cluster. – In the Shutdown Cluster wizard, verify that all pre-checks are green and click Next. – Review the vCenter Server notice and click Next. – Enter a reason for performing the shutdown, and click Shutdown. – Briefly monitor the progress of the vSAN shutdown in vCenter. Eventually, VCSA will be shutdown and connectivity to it will be lost. I then monitor the shut down of my ESX host in Workstation. – The shutdown operation is complete after all ESX hosts are stopped. | Shut Down vSAN and the ESX Hosts in the Management Domain OR Manually Shut Down and Restart the vSAN Cluster If vSAN Fails to shutdown due to a license issue, then under the vSAN Cluster > Configure > Services, choose ‘Resume Shutdown’ (Fig-3) |

| Next the ESX hosts will power off, and then I can do a graceful shutdown of my Windows server AD230. In Workstation, simply right-click this VM > Power > Shutdown Guest. Once all Workstation VMs are powered off, I can run a backup or exit Workstation and power off my server. | Power off AD230 |

(Fig-3)

Backing up my VCF Environment

With my environment fully shut down, now I can start the backup process. See my blog Backing up Workstation VMs with PowerShell for more details.

How to restart my VCF Environment.

| Startup Order | SDDC Component |

|---|---|

| PRE-STEP: – Power on my Workstation server and start Workstation. – In Workstation, power on my AD230 VM and verify that all the core services (AD, DNS, NTP, and RAS) are working. Start up the VCF Cluster: 1 – Power on each ESX host, one at a time. – vCenter is started automatically. Wait until vCenter is running and the vSphere Client is available again. – Log in to vCenter at https://vcsa234.nested.local/ui as a user with the Administrator role. – Restart the vSAN cluster. In the Hosts and Clusters inventory, right-click the vSAN cluster and select vSAN > Restart cluster. – In the Restart Cluster dialog box, click Restart. – Choose the vSAN cluster > Configure > vSAN > Services to see the vSAN Services page. This will display information about the restart process. – After the cluster has been restarted, check the vSAN health service and resynchronization status, and resolve any outstanding issues. Select the cluster and click the Monitor tab. – In the left pane, under vSAN > Resyncing objects, verify that all synchronization tasks are complete. – In the left pane, navigate to vSAN Skyline health and verify the status of each vSAN health check category. | Start vSAN and the ESX Hosts in the Management Domain OR Start ESX Hosts with NFS or Fibre Channel Storage in the Management Domain |

| 2 – From vcsa234, locate the sddcmgr108 appliance. – In the VMs and templates inventory, right-click the SDDC Manager appliance > Power > Power On. – Wait for this VM to boot. Check it by going to https://sddcmgr108.nested.local – As it’s getting ready you may see “VMware Cloud Foundation is initializing…” – Eventually you’ll be prompted by the SDDC Manager page. – Exit this page. | SDDC Manager |

| 3 – From vcsa234, locate the nsxmgr VM, then right-click it and select Power > Power on. – This operation takes several minutes to complete until the NSX Manager cluster becomes fully operational again and its user interface is accessible. – Log in to NSX Manager for the management domain at https://nsxmgr.nested.local as admin. – Verify the system status of NSX Manager cluster. – On the main navigation bar, click System. – In the left pane, navigate to Configuration > Appliances. – On the Appliances page, verify that the NSX Manager cluster has a Stable status and all NSX Manager nodes are available. Notes: Give it time. – You may see the Cluster status go from Unavailable > Degraded; ultimately you want it to show Available. – In the Node under Service Status you can click on the # next to Degraded. This will pop up the Appliance details and show you which items are degraded. – If you click on Alarms, you can see which alarms might need to be addressed. | NSX Manager |

| 4 – Not Needed, not deployed yet | NSX Edge |

| 5 – Not Needed, not deployed yet | VMware Live Site Recovery |

| 6 – From vcsa234, locate the vcfops.nested.local appliance. – Follow the order described in Broadcom KB 341964. – For my environment I simply right-click the appliance and select Power > Power On. – Log in to the VCF Operations administration UI at the https://vcfops.nested.local/admin URL as the admin local user. – You may see ‘Retrieving Cluster Status’; give it time. Mine took under 2 minutes. – On the System status page, under Cluster Status, click Bring Cluster Online. – You may see ‘Retrieving Cluster Status’ again; give it time. Mine took under 2 minutes. Notes: Give it time. – This operation might take about an hour to complete; mine took under 15 minutes to come online. – Cluster Status update may read: ‘Going Online’ – To the right of the node name, all of the other columns continue to update, eventually showing ‘Running’ and ‘Online’ – Cluster Status will eventually go to ‘Online’ | VCF Operations |

| 7 – From vcsa234, locate the VCF Operations fleet management appliance (fleetmgmtappliance.nested.local). – Right-click the VCF Operations fleet management appliance and select Power > Power On. – In the confirmation dialog box, click Yes. – Allow it to boot. Note: Direct access to the VCF Ops Fleet Management appliance is disabled. Go to VCF Operations > Fleet Mgmt > Lifecycle > VCF Management for appliance management. | VCF Operations fleet management |

| 8 – Not Needed, not deployed yet | VCF Identity Broker |

| 9 – Not Needed, not deployed yet | VCF Operations for logs |

| 10 – From vcsa234, locate the VCF Operations collector appliance (opscollectorappliance). – Right-click the VCF Operations collector appliance and select Power > Power On. – In the confirmation dialog box, click Yes. | VCF Operations collector |

| 11 – Not Needed, not deployed yet | VCF Operations for Networks |

| 12 – Not Needed, not deployed yet | VCF Automation |
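
Several of the startup steps above amount to "power it on, then wait until its web page answers". A minimal sketch of that wait, assuming a Linux or similar shell with curl on a machine that can reach the lab network. The function name wait_for_url and the PROBE override are hypothetical helpers, not part of any VMware tooling. A URL that answers only shows the web service is up; the vSAN, NSX, and VCF Operations health checks in the table still need to be done in their own UIs.

```shell
#!/bin/sh
# Sketch only: poll a URL until it answers, then move to the next component.
# PROBE is a hypothetical override hook so the loop can be dry-run without curl.
# -k accepts the lab's self-signed certificates; -f treats HTTP errors as failure.
PROBE="${PROBE:-curl -k -s -f -o /dev/null --max-time 5}"

wait_for_url() {
    # usage: wait_for_url URL [TRIES] [DELAY_SECONDS]
    url=$1
    tries=${2:-60}
    delay=${3:-10}
    attempt=1
    while [ "$attempt" -le "$tries" ]; do
        if $PROBE "$url"; then
            echo "up: $url (attempt $attempt)"
            return 0
        fi
        attempt=$((attempt + 1))
        sleep "$delay"
    done
    echo "gave up: $url after $tries attempts" >&2
    return 1
}

# Example, following the startup order in the table above:
# wait_for_url https://vcsa234.nested.local/ui &&
# wait_for_url https://sddcmgr108.nested.local &&
# wait_for_url https://nsxmgr.nested.local
```

Chaining the calls with && stops at the first component that never comes up, which mirrors the idea of not starting the next appliance until the previous one is reachable.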


VMware Workstation Gen 9: Part 7 Deploying VCF 9.0.1

Now that I have set up a VCF 9 Offline Depot and downloaded the installation media, it’s time to move on to installing VCF 9 on my Workstation environment. In this blog I’ll document the steps I took to complete this.

PRE-Steps

1) One of the more important steps is making sure I back up my environment and delete any VM snapshots. This way my environment is ready for deployment.

2) Make sure your Windows 11 PC power plan is set to High Performance and does not put the computer to sleep.

3) Next, since my hosts are brand new, they need their self-signed certificates updated. See the following URLs.

- VCF Installer fails to add hosts during deployment due to hostname mismatch with subject alternative name

- Regenerate the Self-Signed Certificate on ESX Hosts

4) I didn’t set up all of the DNS names ahead of time; I prefer to do it as I’m going through the VCF Installer. However, I test all my current DNS settings, and test the newly entered ones as I go.

5) Review the Planning and Resource Workbook.

6) Ensure the NTP Service is running on each of your hosts.

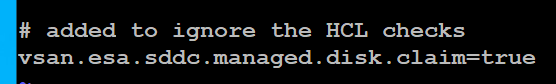

7) The VCF Installer 9.0.1 has some extra features that allow non-vSAN-certified disks to pass the validation section. However, nested hosts will still fail the HCL checks. Simply add the line below to /etc/vmware/vcf/domainmanager/application-prod.properties and then restart the SDDC Domain Manager service with the command: systemctl restart domainmanager

This allows me to acknowledge the errors and move the deployment forward.

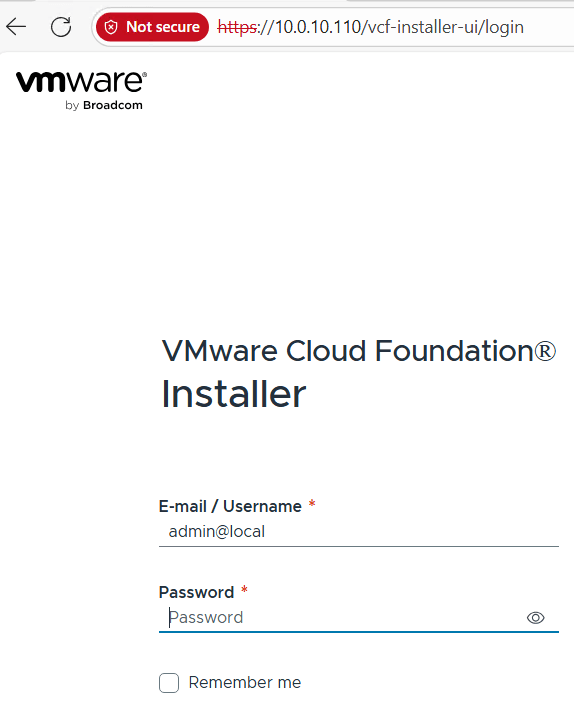

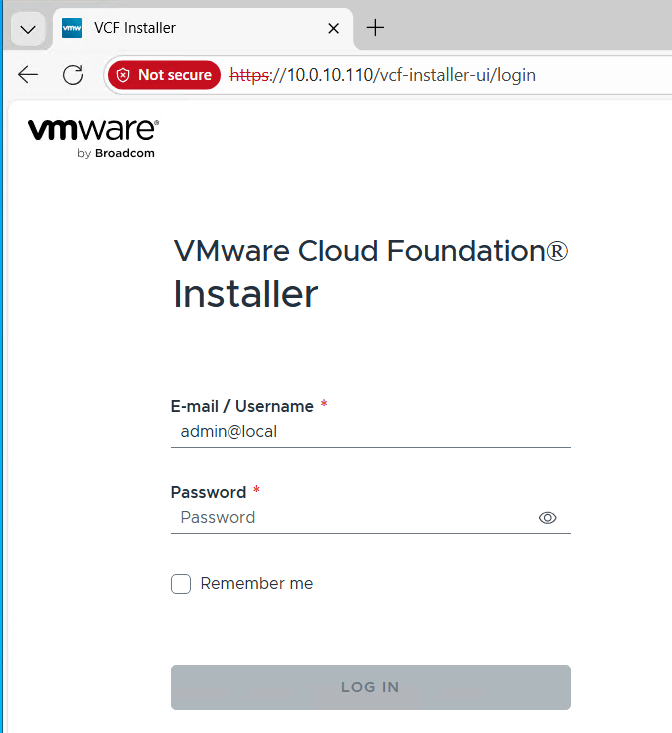
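
The DNS testing mentioned in pre-step 4 can be scripted. What follows is a minimal sketch rather than the process used for this deployment: it assumes a Linux shell with getent on a machine that points at the lab DNS server, and check_dns is a hypothetical helper name. The hostnames in the example are the ones that appear in this series; swap in your own.

```shell
#!/bin/sh
# Sketch only: report which hostnames resolve through the system resolver.
# Assumes a Linux shell with getent; the hostname list is illustrative.

check_dns() {
    # Prints one line per name and returns non-zero if any lookup failed.
    failed=0
    for name in "$@"; do
        if getent hosts "$name" >/dev/null 2>&1; then
            echo "OK      $name"
        else
            echo "MISSING $name"
            failed=1
        fi
    done
    return "$failed"
}

# Example (adjust the names to your own plan):
# check_dns vcsa234.nested.local sddcmgr108.nested.local nsxmgr.nested.local
```

This only checks forward lookups. Reverse records would need a separate check with whatever tool you normally use for that.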

Installing VCF 9 with the VCF Installer

I log into the VCF Installer.

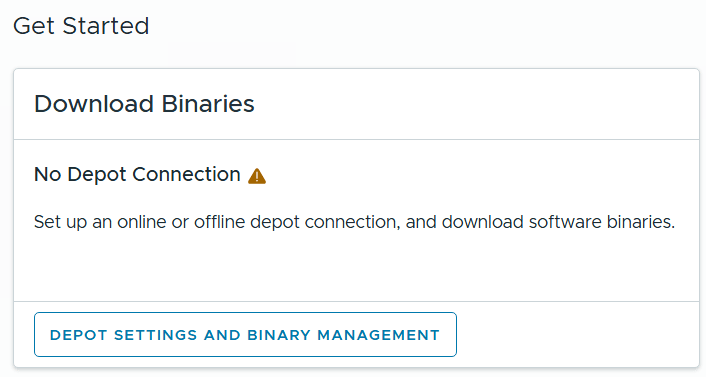

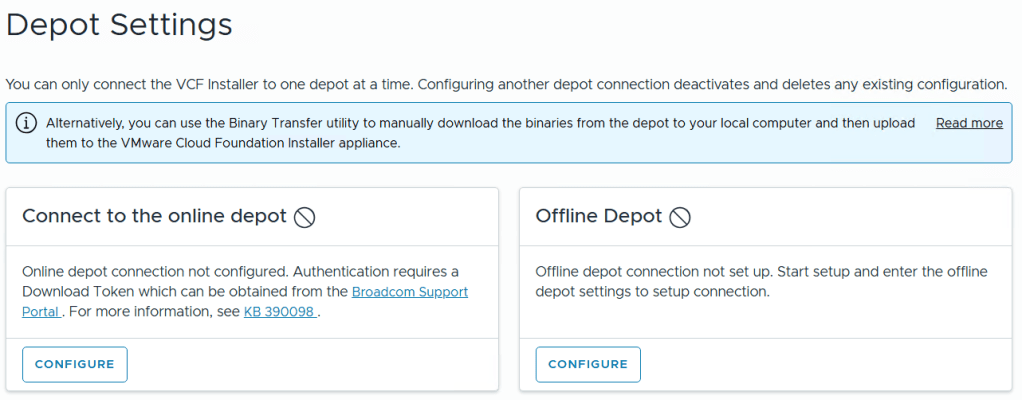

I click on ‘Depot Settings and Binary Management’

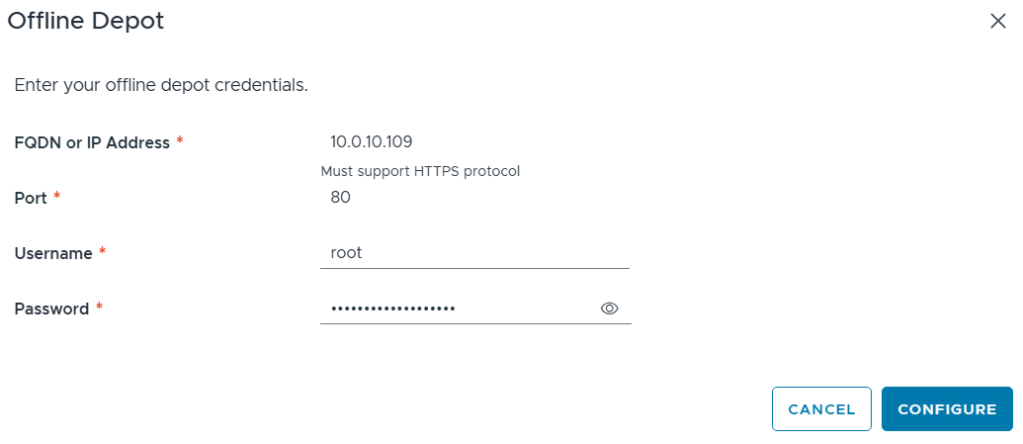

I click on ‘Configure’ under Offline Depot and then click Configure.

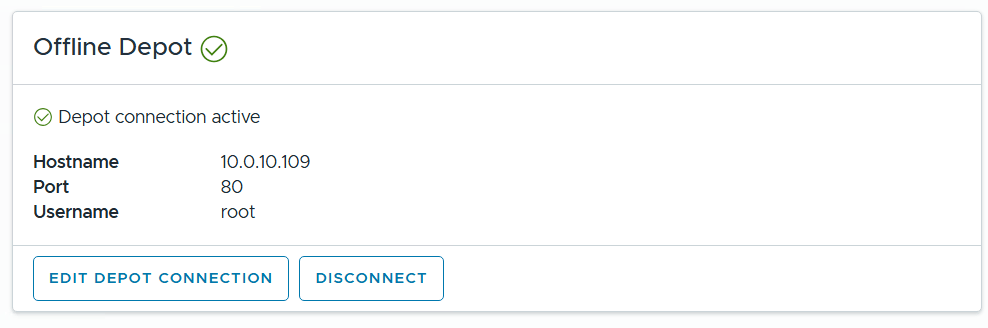

I confirm the Offline Depot Connection is active.

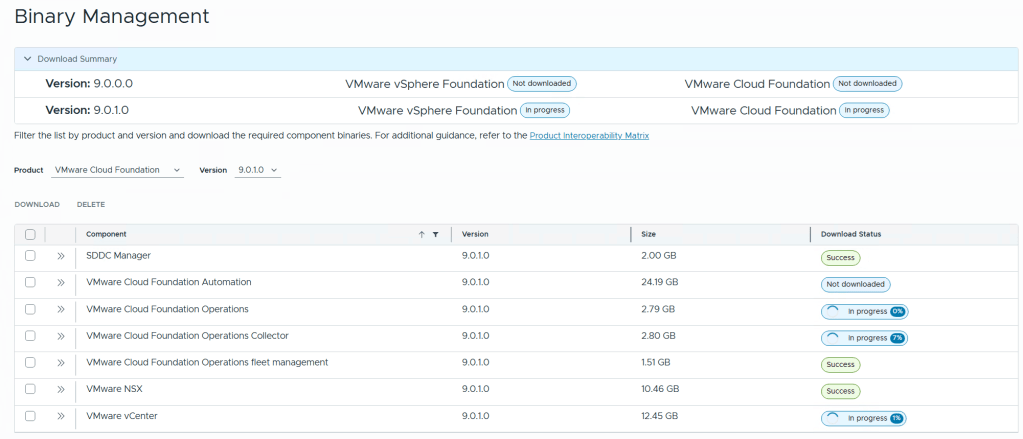

I choose ‘9.0.1.0’ next to version, select everything except VMware Cloud Automation, then click on Download.

Allow the downloads to complete.

All selected components should state “Success” and the Download Summary for VCF should state “Partially Downloaded” when they are finished.

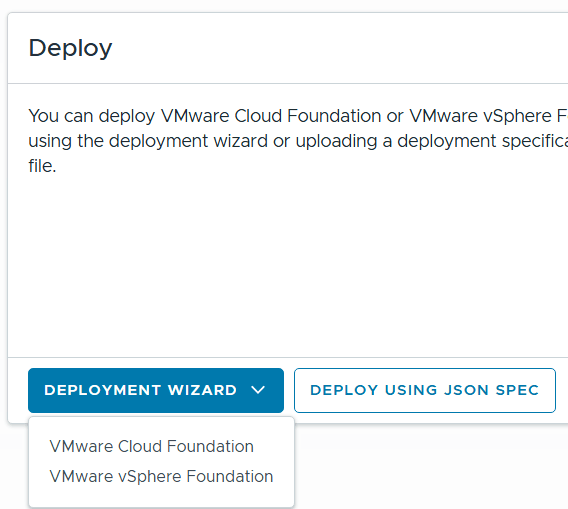

Click return home and choose VCF under Deployment Wizard.

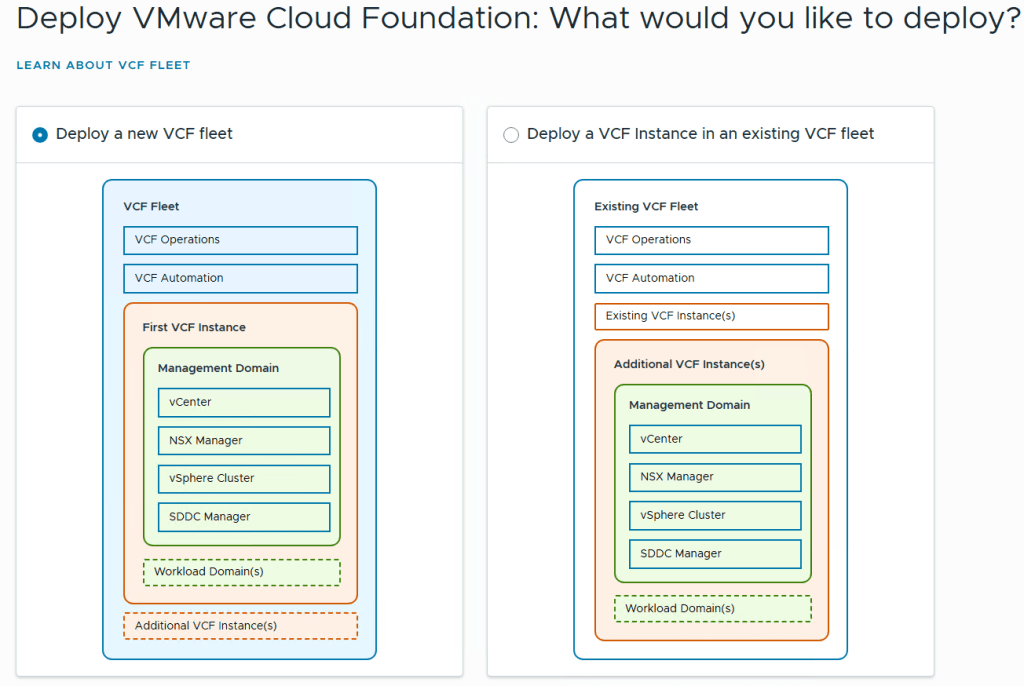

This is my first deployment so I’ll choose ‘Deploy a new VCF Fleet’

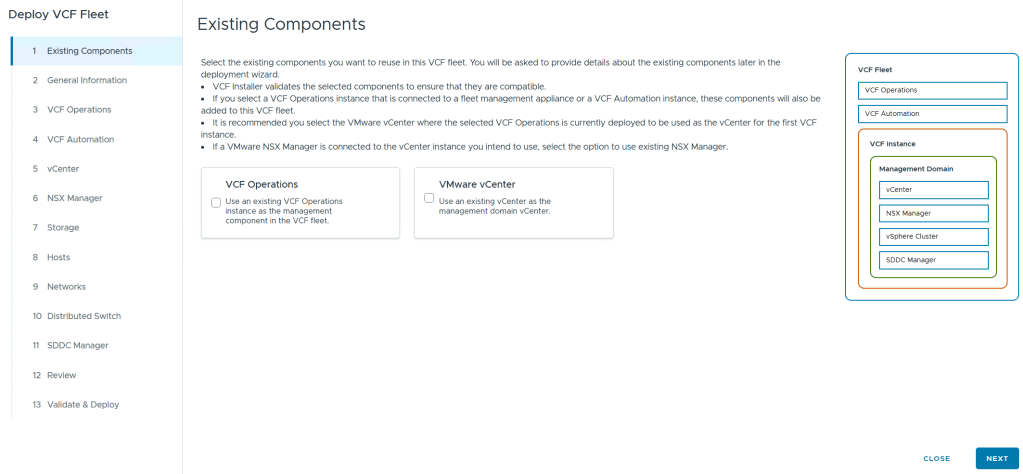

The Deploy VCF Fleet Wizard starts and I’ll input all the information for my deployment.

For Existing Components I simply choose next as I don’t have any.

I filled in the following information about my environment, chose simple deployment, and clicked on next.

I filled out the VCF Operations information and created their DNS records. Once complete I clicked on next.

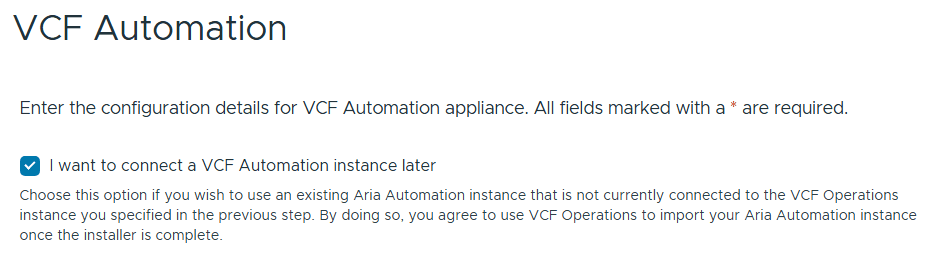

I chose “I want to connect a VCF Automation instance later” and chose next.

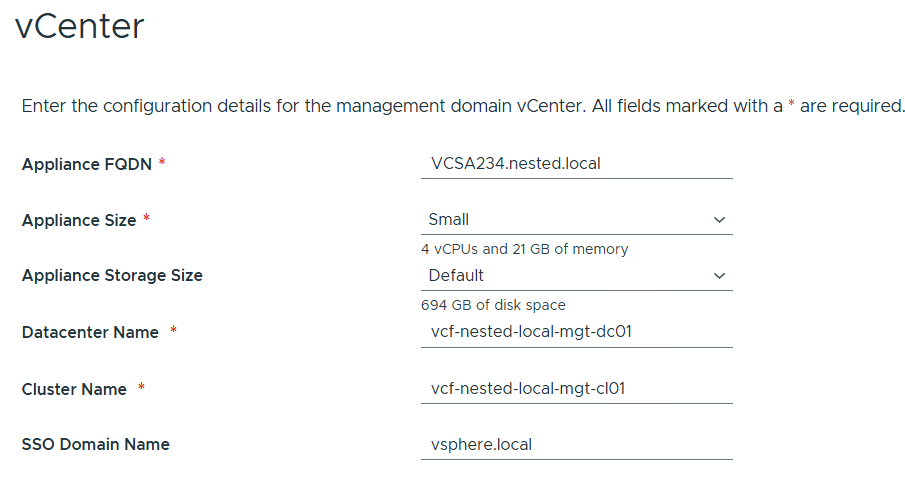

Filled out the information for vCenter

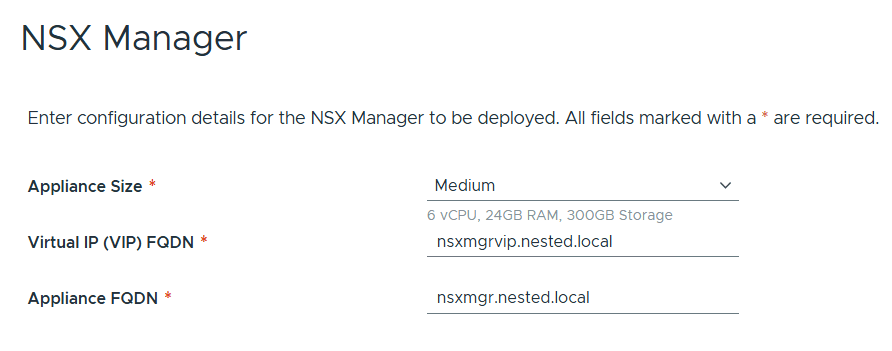

Entered the details for NSX Manager.

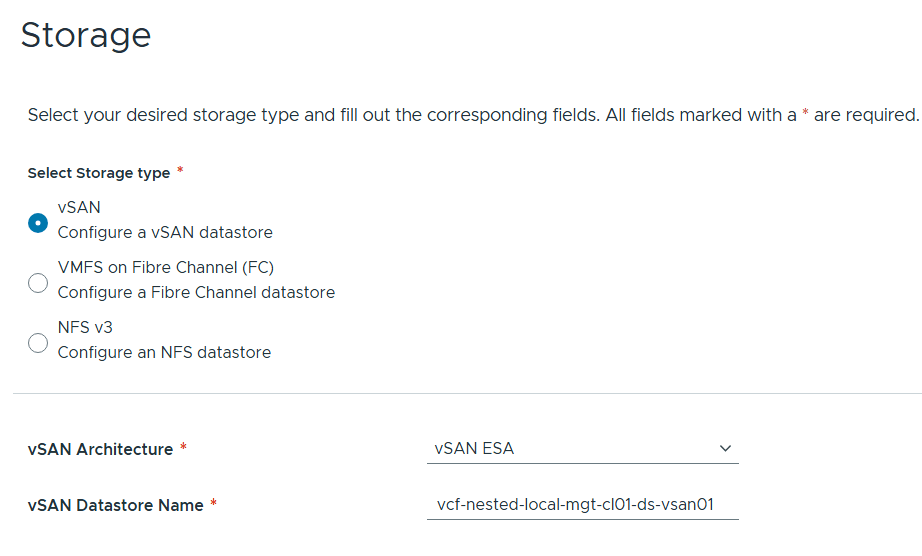

Left the storage items as default.

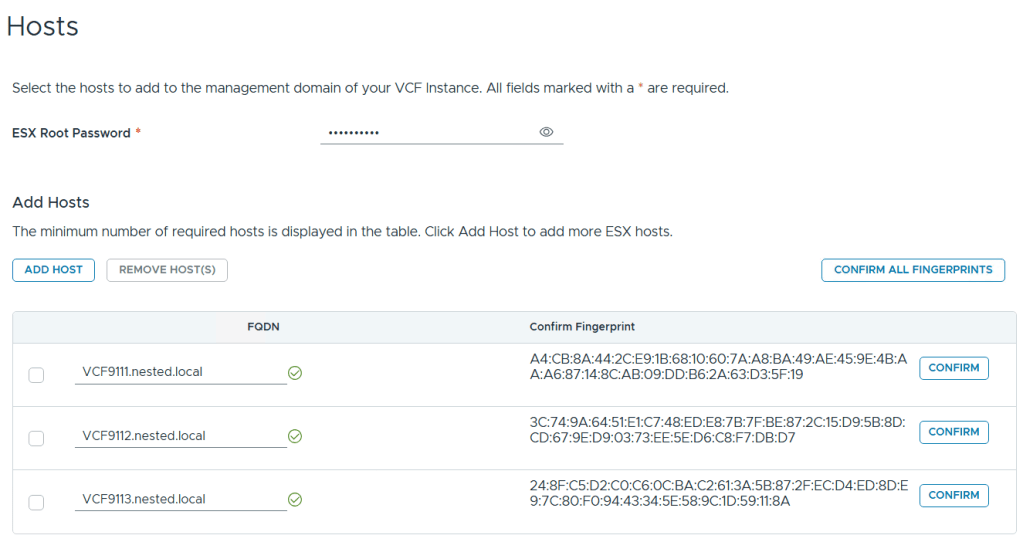

Added in my 3 x ESX 9 Hosts, confirmed all fingerprints, and clicked on next.

Note: if you skipped the Pre-requisite for the self-signed host certificates, you may want to go back and update it before proceeding with this step.

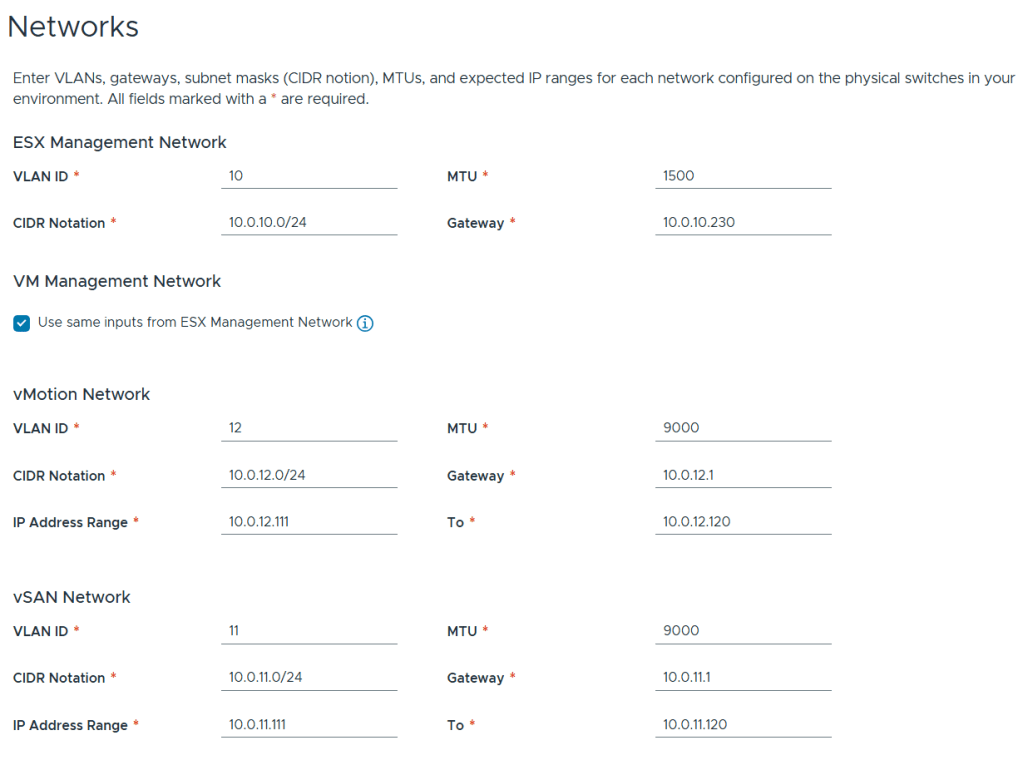

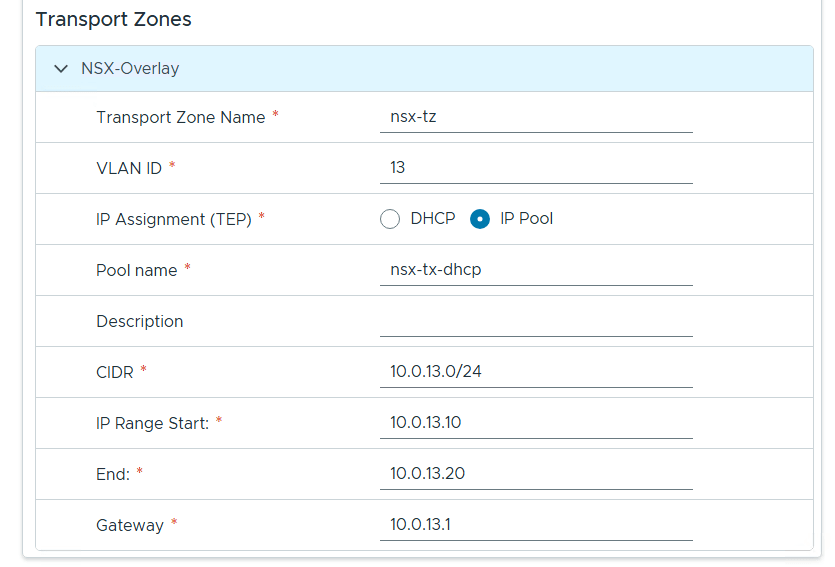

Filled out the network information based on our VLAN plan.

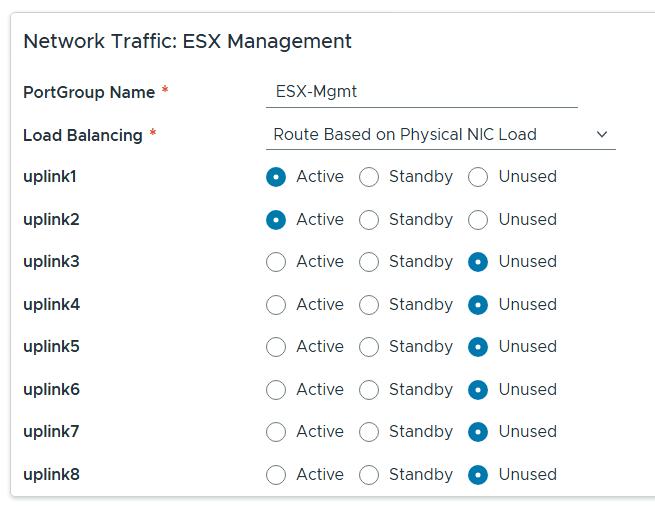

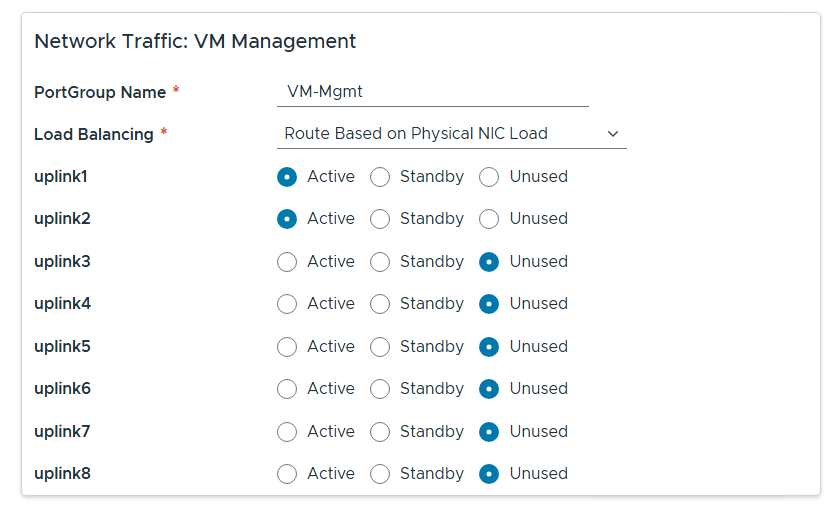

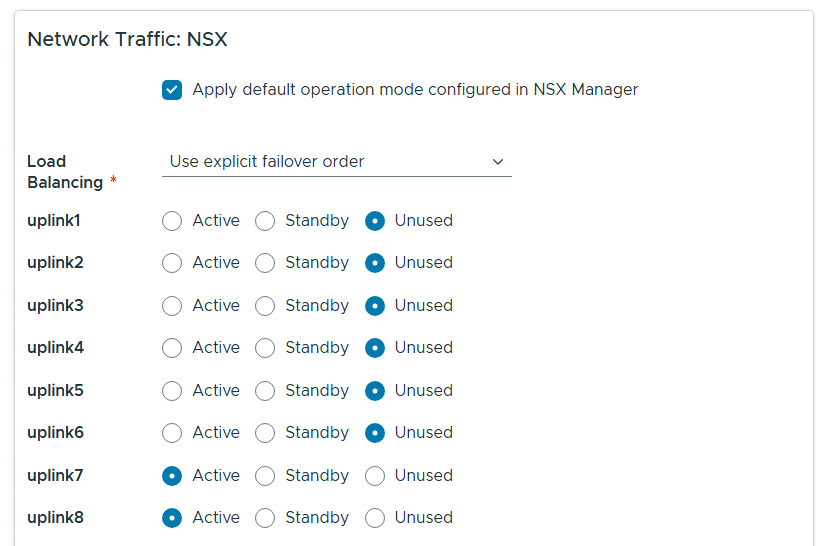

For Distributed Switch, I clicked Select for Custom Switch Configuration, set MTU 9000 and 8 Uplinks, chose all services, then scrolled down.

Renamed each port group and chose the following network adapters, chose their networks, updated NSX settings then chose next.

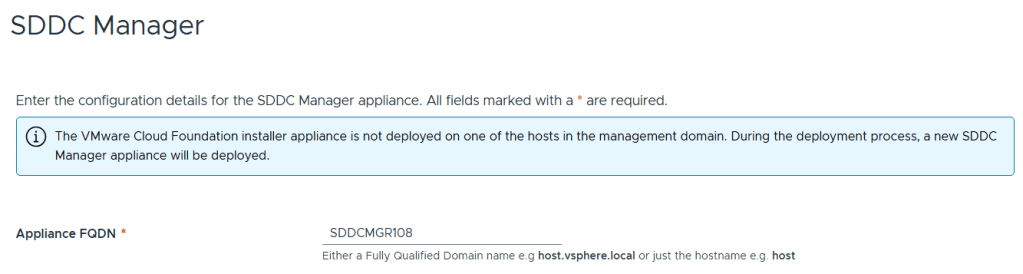

Entered the name of the new SDDC Manager and updated its name in DNS, then clicked on next.

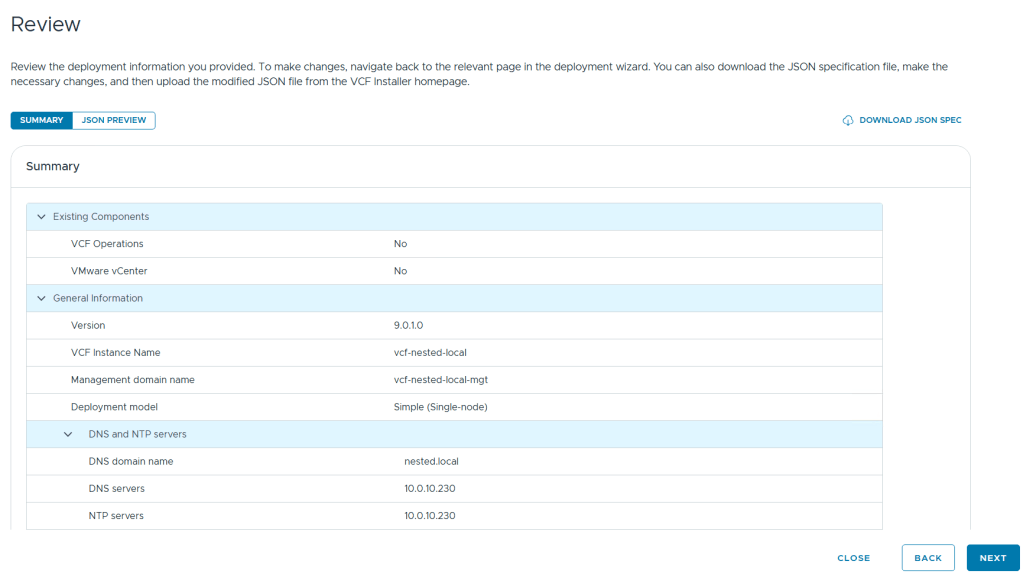

Reviewed the deployment information and chose next.

TIP – Downloading this information as a JSON Spec can save you a lot of typing if you have to deploy again.

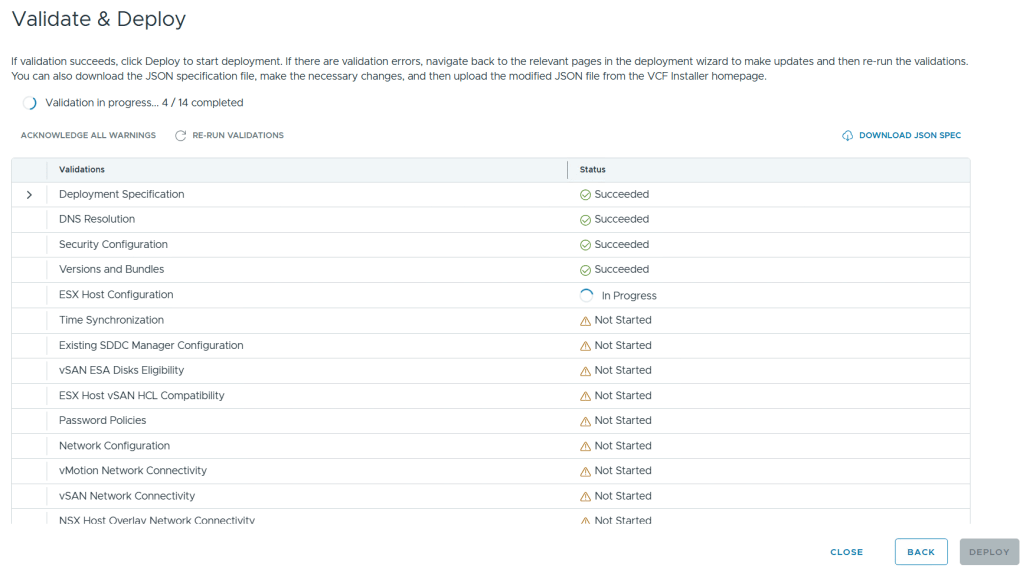

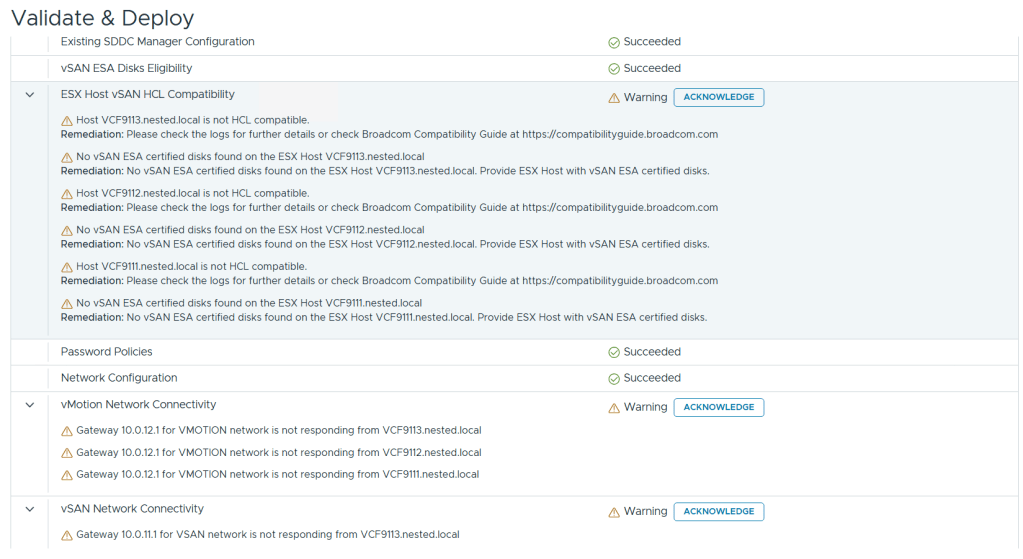

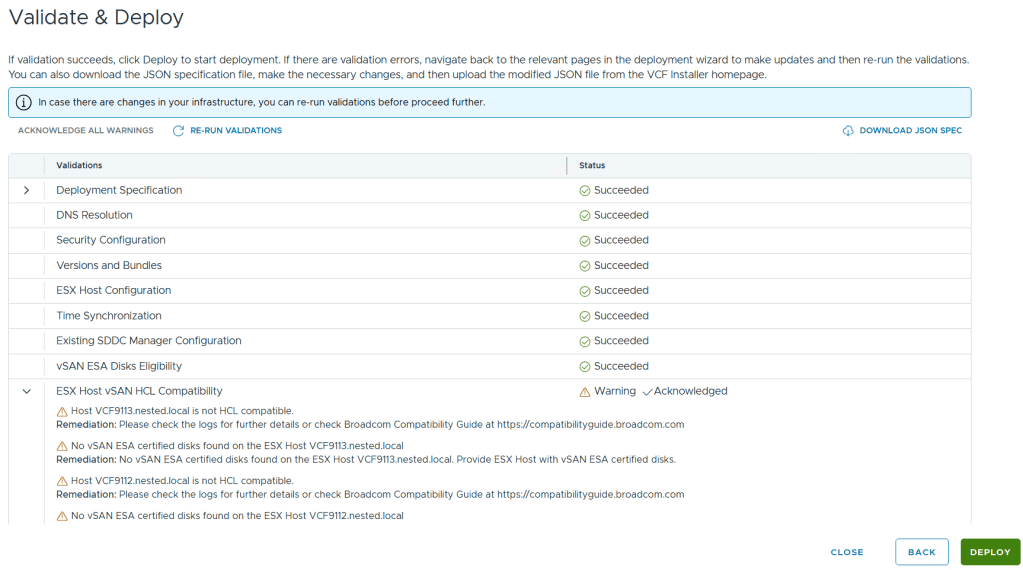

Allow it to validate the deployment information.

I reviewed the validation warnings, clicked “Acknowledge all Warnings” at the top, and clicked ‘DEPLOY’ to move to the next step.

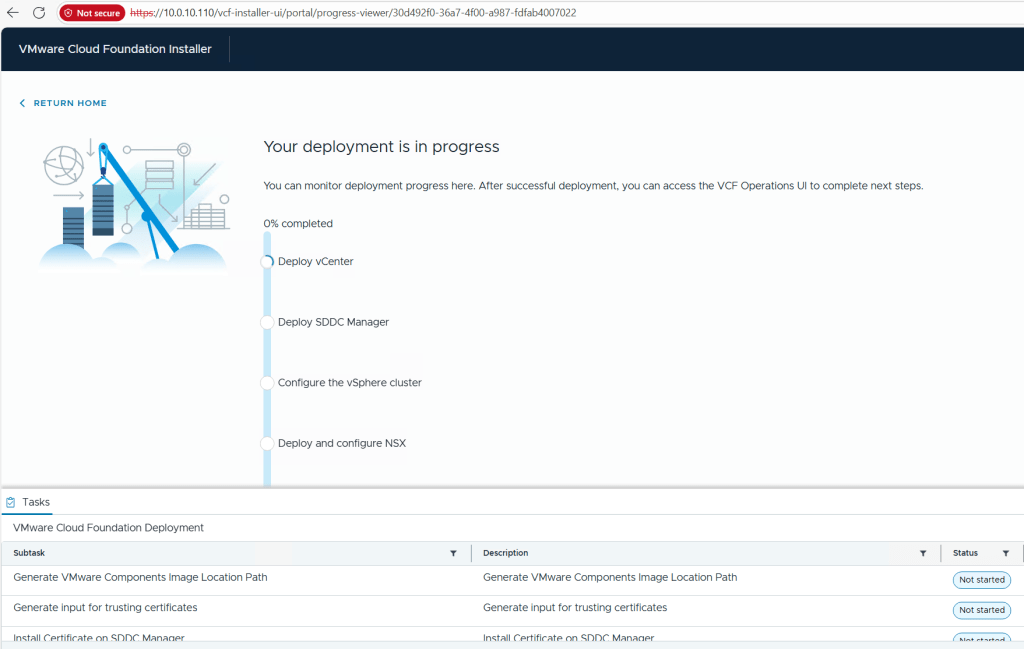

Allow the deployment to complete.

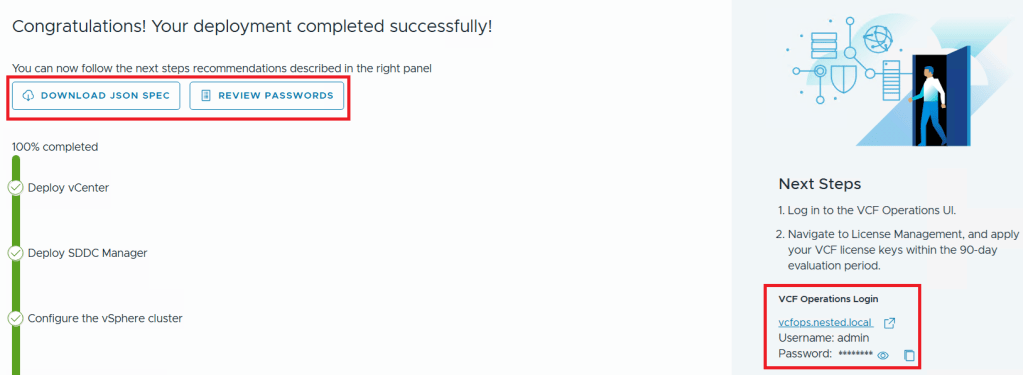

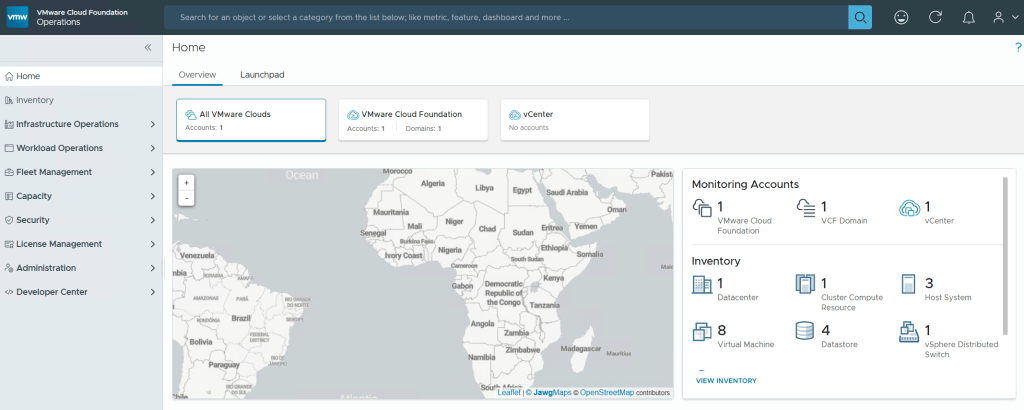

Once completed, I download the JSON Spec, review and document the passwords (Fig-1), and then log into VCF Operations (Fig-2).

(Fig-1)

(Fig-2)

Now that I have a VCF 9.0.1 deployment complete I can move on to Day N tasks. Thanks for reading and reach out if you have any questions.

VMware Workstation Gen 9: Part 6 VCF Offline Depot

To deploy VCF 9 the VCF Installer needs access to the VCF installation media or binaries. This is done by enabling Depot Options in the VCF Installer. For users to move to the next part, they will need to complete this step using resources available to them. In this blog article I’m going to supply some resources to help users perform these functions.

Why only supply resources? When it comes to downloading and accessing VCF 9 installation media, Broadcom/VMware employees like me are not granted the same access as users. I have an internal process to access the installation media. That process is not publicly available, nor would it be helpful to users. This is why I’m supplying information and resources to help users through this step.

What are the Depot choices in the VCF Installer?

Users have 2 options: 1) connect to an online depot or 2) use an offline depot.

What are the requirements for the 2 Depot options?

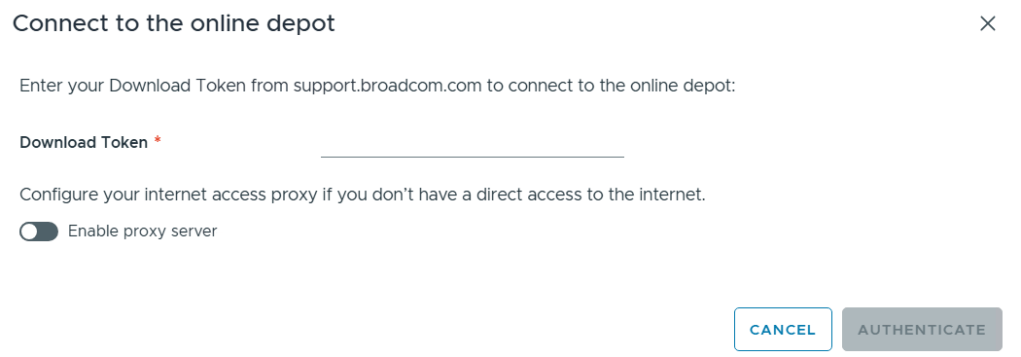

1) Connect to an online depot — Users need to have an entitled support.broadcom.com account and a download token. Once their token is authenticated they are enabled to download.

See these URLs for more information:

2) Offline Depot – This option may be more common for users building out Home labs.

See these URLs for more information:

- Set Up an Offline Depot Web Server for VMware Cloud Foundation

- Set Up an Offline Depot Web Server for VMware Cloud Foundation << Use this method if you want to set up HTTPS on Photon OS.

- How to deploy VVF/VCF 9.0 using VMUG Advantage & VCP-VCF Certification Entitlement

- Setting up a VCF 9.0 Offline Depot

I’ll be using the Offline Depot method to download my binaries and in the next part I’ll be deploying VCF 9.0.1.

VMware Workstation Gen 9: Part 5 Deploying the VCF Installer with VLANs

The VCF Installer (aka SDDC Manager Appliance) is the appliance that will allow me to deploy VCF onto my newly created ESX hosts. The VCF Installer can be deployed onto an ESX host or directly on Workstation. There are a couple of challenges with this deployment in my home lab, and in this blog post I’ll cover how I overcame them. It should be noted, the modifications below are strictly for my home lab use.

Challenge 1: VLAN Support

By default the VCF Installer doesn’t support VLANs. It’s a funny quandary, as VCF 9 requires VLANs. Most production environments will allow you to deploy the VCF Installer and be able to route to a vSphere environment. However, my Workstation home lab uses LAN Segments, which are local to Workstation. To communicate over LAN Segments, all VMs must have a VLAN ID. To overcome this issue I’ll need to add VLAN support to the VCF Installer.

Challenge 2: Size Requirements

The installer takes up a massive 400+ GB of disk space, 16GB of RAM, and 4 vCPUs. The current configuration of my ESX hosts doesn’t include a datastore large enough to deploy it to, plus vSAN is not set up. To overcome this issue I’ll need to deploy it as a Workstation VM and attach it to the correct LAN Segment.

In the steps below I’ll show you how I added a VLAN to the VCF Installer, deployed it directly on Workstation, and ensured it’s communicating with my ESX hosts.

Deploy the VCF Installer

Download the VCF Installer OVA and place the file in a location where Workstation can access it.

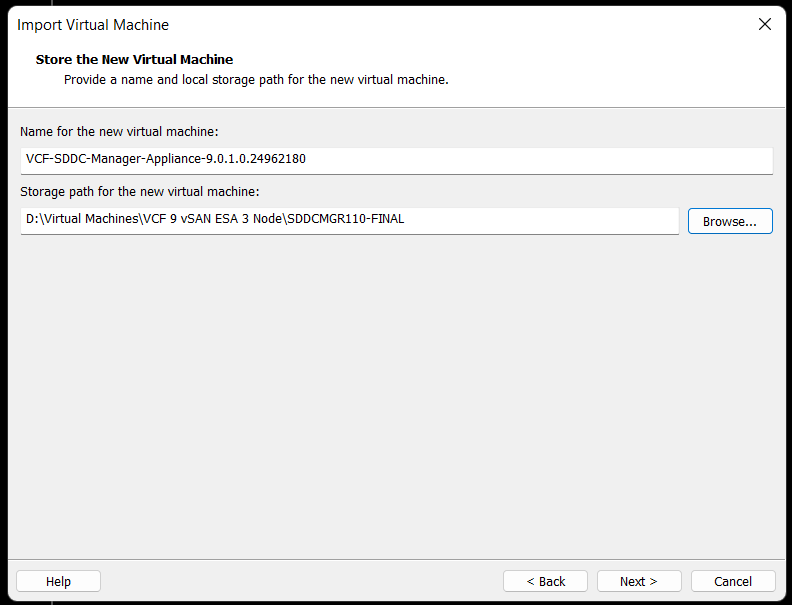

In Workstation click on File > Open. Choose the location of your OVA file and click open.

Check the Accept box > Next

Choose your location for the VCF Installer Appliance to be deployed. Additionally, you can change the name of the VM. Then click Next.

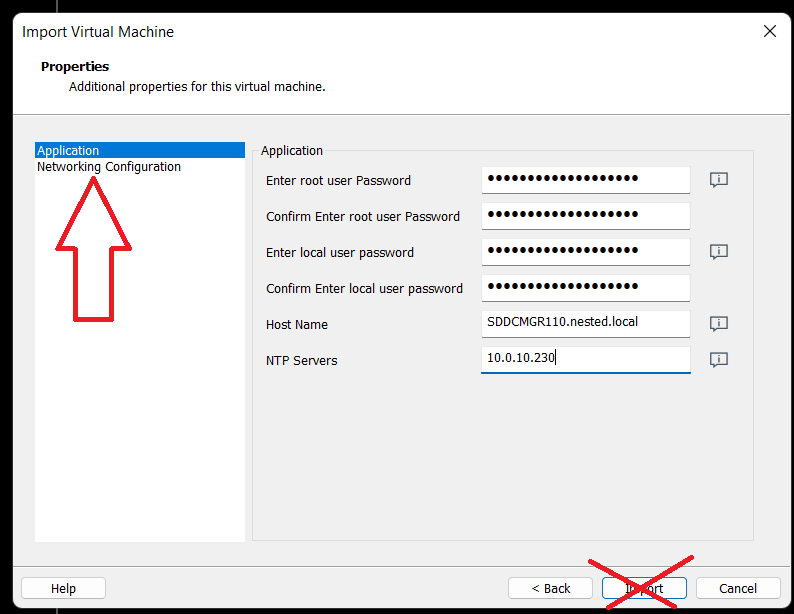

Fill in the passwords, hostname, and NTP Server. Do not click on Import at this time. Click on ‘Network Configuration’.

Enter the network configuration and click on import.

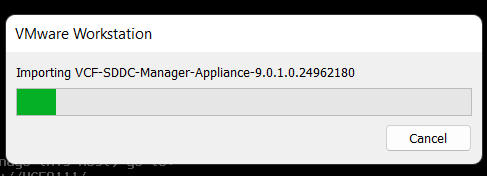

Allow the import to complete.

Allow the VM to boot.

Change the VCF Installer Network Adapter Settings to match the correct LAN Segment. In this case I choose 10 VLAN Management.

Enable VLAN support in the VCF Installer

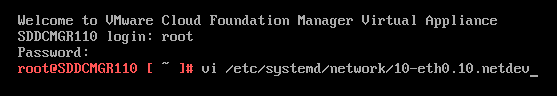

1) Login as root and create the following file.

vi /etc/systemd/network/10-eth0.10.netdev

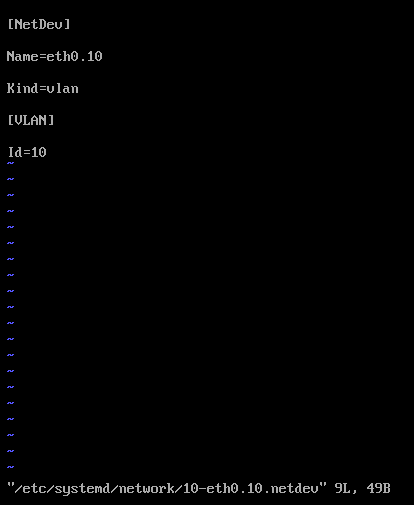

Press Insert, then add the following

[NetDev]

Name=eth0.10

Kind=vlan

[VLAN]

Id=10

Press Escape, Press :, Enter wq! and press enter to save

2) Create the following file.

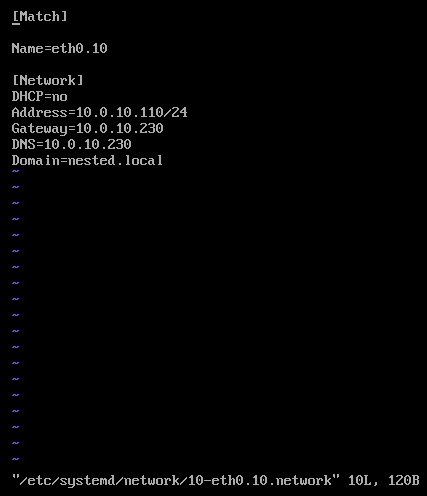

vi /etc/systemd/network/10-eth0.10.network

Press insert and add the following

[Match]

Name=eth0.10

[Network]

DHCP=no

Address=10.0.10.110/24

Gateway=10.0.10.230

DNS=10.0.10.230

Domain=nested.local

Press Escape, Press :, Enter wq! and press enter to save

3) Modify the original network file

vi /etc/systemd/network/10-eth0.network

Press Insert, remove the static IP address configuration, and change the file to the following:

[Match]

Name=eth0

[Network]

VLAN=eth0.10

Press Escape, Press :, Enter wq! and press enter to save

4) Update the permissions to the newly created files

chmod 644 /etc/systemd/network/10-eth0.10.netdev

chmod 644 /etc/systemd/network/10-eth0.10.network

chmod 644 /etc/systemd/network/10-eth0.network

5) Restart services or restart the vm.

systemctl restart systemd-networkd

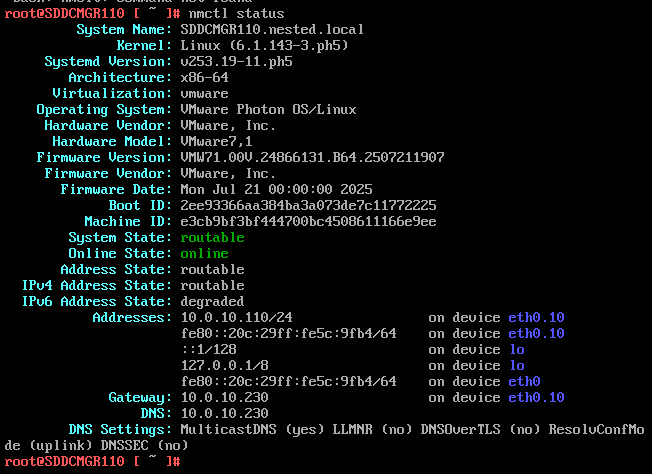

6) Check the network status of the newly created network eth0.10

nmctl status

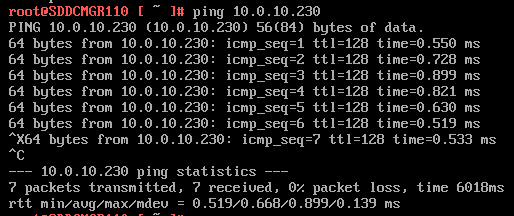

7) Do a ping test from the VCF Installer appliance.

Note – The firewall needs to be adjusted to allow other devices to ping the VCF Installer appliance.

ping 10.0.10.230

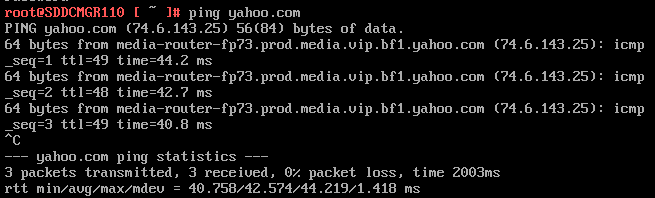

Next I ping an internet location to confirm this appliance can route to the internet.

8) Allow SSH access to the VCF Installer Appliance

Follow this BLOG to allow SSH Access.

From the Windows AD server or other device on the same network, putty into the VCF Installer Appliance.
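
Steps 1 through 4 above can also be done without vi. The sketch below writes the same three systemd-networkd files with here-documents. It is an illustration under assumptions, not the method used in this post: write_vlan_files is a hypothetical helper, and the example stages the files in a scratch directory so they can be reviewed before anything under /etc/systemd/network is touched. The values are the ones shown in the steps above.

```shell
#!/bin/sh
# Sketch only: write the three systemd-networkd files from steps 1 to 4.
# write_vlan_files is a hypothetical helper; values mirror the ones above.

write_vlan_files() {
    # usage: write_vlan_files DIR VLAN_ID ADDRESS/PREFIX GATEWAY DNS DOMAIN
    dir=$1 vlan=$2 addr=$3 gw=$4 dns=$5 domain=$6
    ifname="eth0.$vlan"

    # VLAN device definition
    cat > "$dir/10-$ifname.netdev" <<EOF
[NetDev]
Name=$ifname
Kind=vlan

[VLAN]
Id=$vlan
EOF

    # Static addressing on the VLAN interface
    cat > "$dir/10-$ifname.network" <<EOF
[Match]
Name=$ifname

[Network]
DHCP=no
Address=$addr
Gateway=$gw
DNS=$dns
Domain=$domain
EOF

    # Parent interface carries only the VLAN
    cat > "$dir/10-eth0.network" <<EOF
[Match]
Name=eth0

[Network]
VLAN=$ifname
EOF

    # Same permissions as step 4
    chmod 644 "$dir/10-$ifname.netdev" "$dir/10-$ifname.network" "$dir/10-eth0.network"
}

# Example: stage the files, review them, then copy them into place as root
# and restart systemd-networkd as in step 5.
# mkdir -p /tmp/netstage
# write_vlan_files /tmp/netstage 10 10.0.10.110/24 10.0.10.230 10.0.10.230 nested.local
```

Staging first is a deliberate choice: a typo in the parent interface file can drop the appliance off the network, so it is worth reading the output before copying it over the originals.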

Adjust the VCF Installer Firewall to allow inbound traffic to the new adapter

Note – Might be a good time to make a snapshot of this VM.

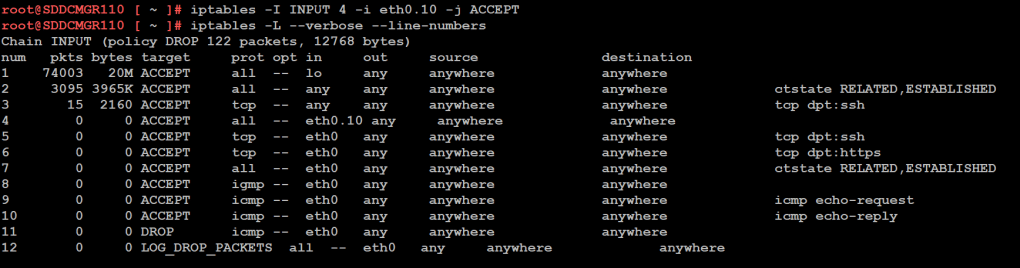

1) From SSH check the firewall rules for the VCF Installer with the following command.

iptables -L --verbose --line-numbers

From this output I can see that eth0 is set up to allow access to https, ping, and other services. However, there are no rules for the eth0.10 adapter. I’ll need to adjust the firewall to allow this traffic.

Next I insert a new rule allowing all traffic to flow through eth0.10 and check the rule list.

iptables -I INPUT 4 -i eth0.10 -j ACCEPT

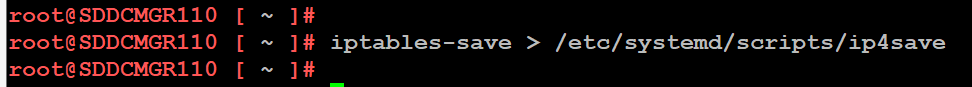

The firewall rules are not persistent. To keep the current rules across a restart, I need to save them.

Save Config Commands
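
The exact save commands are not reproduced in the text above, so treat the following as a hedged sketch and not as the commands used in this post. It assumes the appliance is Photon OS based and restores its IPv4 rules at boot from /etc/systemd/scripts/ip4save; verify that path on your build before relying on it. save_rules, RULES_FILE, and SAVE_CMD are hypothetical names introduced for illustration.

```shell
#!/bin/sh
# Sketch only: persist the running iptables rules so they survive a reboot.
# ASSUMPTION: the appliance is Photon OS based and restores rules from
# /etc/systemd/scripts/ip4save at boot; confirm the path on your build.
RULES_FILE="${RULES_FILE:-/etc/systemd/scripts/ip4save}"
# SAVE_CMD can be overridden for a dry run; the default dumps the live rules.
SAVE_CMD="${SAVE_CMD:-iptables-save}"

save_rules() {
    # Keep a copy of the previous rule file, then dump the live rules over it.
    if [ -f "$RULES_FILE" ]; then
        cp -p "$RULES_FILE" "$RULES_FILE.bak"
    fi
    $SAVE_CMD > "$RULES_FILE"
}

# Run as root on the appliance, then confirm the new rule was captured:
# save_rules && grep -- 'eth0.10' "$RULES_FILE"
```

The backup copy gives a way back if the saved rule set turns out to block access after the restart.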

I restart and make sure I can now access the VCF Installer webpage, and I do a ping test again just to be sure.

Now that I have the VCF Installer installed and working on VLANs, I’m ready to deploy the VCF Offline Depot tool into my environment, and in my next blog post I’ll do just that.