VSAN

VMware Workstation 17 Nested Home Lab Part 2

In Part 2, I give a physical hardware overview, show how to enable VT-x and VT-d, install Windows 11 (including a trick to disable the TPM requirement), install Workstation 17, and review common issues when Hyper-V is enabled. At the end of this video #windows11 and #workstation 17 are installed and operational. Coming up in Part 3 we’ll build out our Workstation networks and start building the Windows Server 2022 VM with AD, DNS, RAS, DHCP, and other services.

#Optane #IntelXeon #Xeon #vExpert #VMware #Cloud #datacenter

VMware Workstation 17 Nested vSAN ESA Overview

In this high-level video I give an overview of my #VMware #workstation running 3 x nested ESXi 8 hosts, vSAN ESA, VCSA, and a Windows 2022 AD. Additionally, I show some early performance results using HCIBench.

I got some great feedback from my subscribers after posting this video. They were asking for a more detailed series around this build. You can find this 8 Part Series under Best of VMX > ‘VMware Workstation Generation 8 : Complete Software Build Series’.

For more information around my VMware Workstation Generation 8 Build check out my latest BOM here

How to upgrade a Dell T7820 to a U.2 Backplane

In this video I show how I upgraded my Dell T7820 SATA backplane to a U.2 backplane. I’m doing this upgrade to enable support for 2 x #intel #Optane drives. I’ll be using these #Dell #T7820 Workstations for my Next Generation #homelab where I’ll need 4 x Intel Optane drives to support #VMware #vsan ESA.

Part Installed in this Video: (XN8TT) Dell Precision T7820 T5820 U.2 NVME Solid State Drive Backplane Kit found used on Ebay.

For more information around My Next Generation 8 Home Lab based on the Dell T7820 check out my blog series at https://vmexplorer.com/blog-series/

First Look GEN8 ESXi/vSAN ESA 8 Home Lab (Part 1)

I’m kicking off my next generation home lab with this first look into my choice for an ESXi/vSAN 8 host. There will be more videos to come as this series evolves!

Home Lab Generation 7: Upgrading and Replacing a vSAN 7 Cache Disk

In this video I go over some of the rationale and the steps I took to replace the vSAN 7 2 x 200GB SAS SSD cache disks with a 512GB NVMe flash device.

*Products in this video*

Sabrent 512 Rocket – https://www.sabrent.com/product/SB-ROCKET-512/512gb-rocket-nvme-pcie-m-2-2280-internal-ssd-high-performance-solid-state-drive/#description

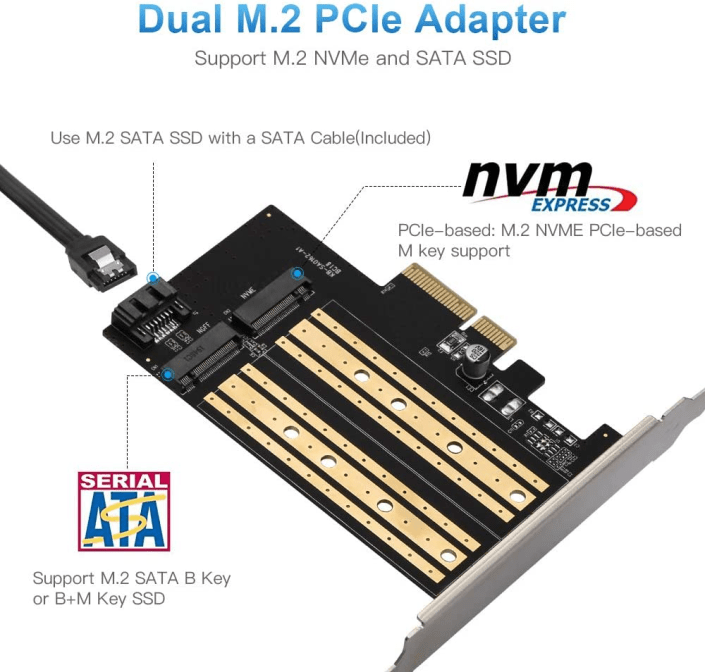

Dual M.2 PCIe Adapter Card for NVMe/SATA – https://www.amazon.com/gp/product/B08MZGN1C5

Home Lab Generation 7: Part 1 – Change Rationale for software and hardware changes

Well, it’s that time of year again: time to deploy new changes, upgrades, and add some new hardware. I’ll be updating my ESXi hosts and vCenter Server from 7U2d to the latest vSphere 7 Update 3a. Additionally, I’ll be swapping out the IBM 5210 JBOD for a Dell HBA330+, and lastly I’ll change my boot device to a more reliable and persistent disk. I have 3 x ESXi hosts with vSAN, vDS switches, and NSX-T. If you want to understand my environment a bit better, check out this page on my blog. In this 2-part blog I’ll go through the steps I took to update my home lab and some of the rationale behind it.

There are two main parts to the blog:

- Part 1 – Change Rationale for software and hardware changes – In this part I’ll explain some of my thoughts around why I’m making these software and hardware changes.

- Part 2 – Installation and Upgrade Steps – These are the high-level steps I took to change and upgrade my Home Lab.

Part 1 – Change Rationale for software and hardware changes:

There are three key changes that I plan to make to my environment:

- One – Update to vSphere 7U3a

- vSphere 7U3 has brought many new changes to vSphere, including many needed feature updates to vCenter Server and ESXi. Additionally, there are several important bug fixes and corrections that vSphere 7U3 and 7U3a address. For more information on the updates with vSphere 7U3 please see the “vSphere 7 Update 3 – What’s New” by Bob Plankers. For even more information check out the release notes.

- Part of my rationale in upgrading is to prepare to talk with my customers about the benefits of this update. I always test out the latest updates on Workstation first, then migrate those learnings into the Home Lab.

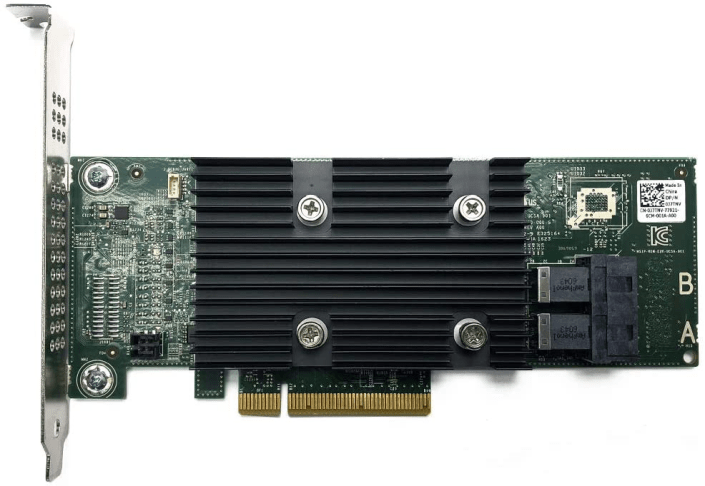

- Two – Change out the IBM 5210 JBOD

- The IBM 5210 JBOD is a carry-over component from my vSphere 6.x vSAN environment. It worked well with vSphere 6.x and 7U1. However, starting in 7U2 it began to exhibit stuck IO issues and the occasional PSOD. This card was only certified with vSphere/vSAN 6.x and at some point the cache module became a requirement. My choices at this point are to update this controller with a cache module (~$50 each) and hope it works better, or make a change. In this case I decided to make a change to the Dell HBA330 (~$70 each). The HBA330 is a JBOD controller that Dell pretty much worked with VMware to create for vSAN. It is on the vSphere/vSAN 7U3 HCL and should have a long life there too. Additionally, the HBA330 edge connectors (Mini SAS SFF-8643) line up with my existing SAS break-out cables. When I compared the benefits of the Dell HBA330 to upgrading the cache module for the IBM 5210, the HBA330 was the clear choice. The trick is finding an HBA330 that is cost effective and comes with a full-sized slot cover. It’s a bit tricky, but you can find them on eBay; you just have to look a bit harder.

- Three – Change my boot disk

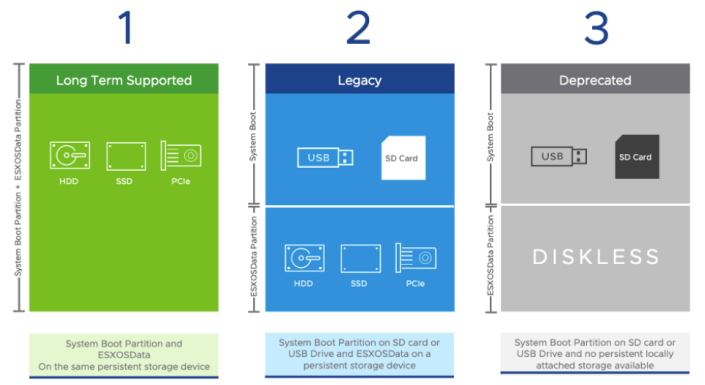

- In September 2021, VMware announced that booting from USB is going to change, and customers were advised to plan ahead for these upcoming changes. My current hosts are using cheap SanDisk USB 64GB memory sticks. It’s something I would never recommend for a production environment, but for a Home Lab these worked okay. I originally chose them during my Home Lab Gen 5 updates as I needed to do testing with USB-booted hosts. Now that VMware has deprecated support for USB/SD devices it’s time to make a change. Point of clarity: the word deprecated can mean different things to different people. However, in the software industry deprecated means “discourage the use of (something, such as a software product) in favor of a newer or better alternative”. vSphere 7 is in a deprecated mode when it comes to USB/SD-booted hosts: they are still supported, and customers are highly advised to plan ahead. As of this writing, legacy (legacy is a fancy word for vSphere.NEXT) USB hosts will require a persistent disk, and eventually (Long Term Supported) USB/SD-booted hosts will no longer be supported. Customers should seek guidance from VMware when making these changes.

- To be in a “Long Term Supported” mode, an ESXi host must boot from an HDD, SSD, or a PCIe device. In my case, I didn’t want to add more disks to my system and chose to go with a PCIe SSD/NVMe card. I chose this PCIe device that will support M.2 (SATA SSD) and NVMe devices in one slot, and I decided to go with a Kingston A400 240GB Internal SSD M.2 as my boot disk. The A400 with 240GB should be more than enough to boot the ESXi hosts and keep up with their disk demands going forward.

Final thoughts and an important warning. Making changes that affect your current environment is never easy but is sometimes necessary. A little planning can make the journey a bit easier. I’ll be testing these changes over the next few months and will post an update if issues occur. However, a bit of warning – adding new devices to an environment can directly impact your ability to migrate or upgrade your hosts. Due to the hardware decisions I have made, a direct ESXi upgrade is not possible and I’ll have to back out my current hosts from vCenter Server plus other software and do a new installation. Those details and more will be in Part 2 – Installation and Upgrade Steps.

Opportunity for vendor improvement – Backup vendors like Synology, Asustor, Veeam, Veritas, Nakivo, and Acronis could really shine here. If they could back up and restore an ESXi host to dissimilar hardware or boot disks, this would be a huge improvement for VI admins, especially those with tens of thousands of hosts that need to change from USB to persistent disks. This is not a new ask; VI admins have been asking for this option for years. Now maybe these companies will listen, as many users and their hosts are going to be affected by these upcoming requirements.

Home Lab Generation 7: Updating from Gen 5 to Gen 7

Not too long ago I updated my Gen 4 Home Lab to Gen 5 and I posted many blogs and videos about it. The Gen 5 Lab ran well for vSphere 6.7 deployments, but moving into vSphere 7.0 I had a few issues adapting it. Mostly these issues were with the design of the Jingsha motherboard. I noted most of these challenges in the Gen 5 wrap-up video. Additionally, I had some new networking requirements, mainly around adding multiple Intel NIC ports, and Home Lab Gen 5 was not going to adapt well or would be very costly to adapt. These combined adaptations forced my hand to migrate to what I’m calling Home Lab Gen 7. Wait a minute, what happened to Home Lab Gen 6? I decided to align my Home Lab generation numbers with the vSphere release number, so I skipped Gen 6.

First – Review my design goals:

- Be able to run vSphere 7.x and vSAN Environment

- Reuse as much as possible from Gen 5 Home lab, this will keep costs down

- Choose products that bring value to the goals and are cost effective; if they are on the VMware HCL that’s a plus, but not necessary for a home lab

- Keep networking (vSAN / FT) on 10Gbe MikroTik Switch

- Support 4 x Intel Gbe Networks

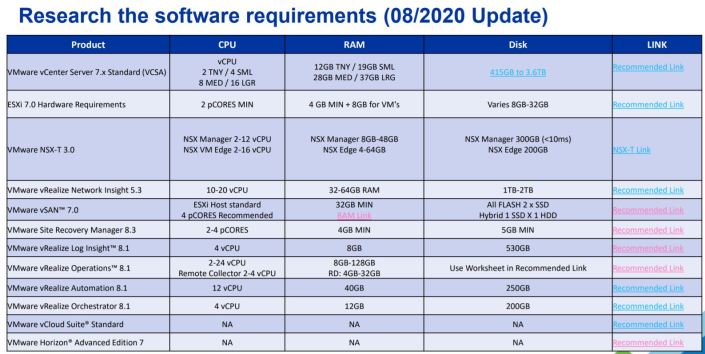

- Ensure there will be enough CPU cores and RAM to be able to support multiple VMware products (ESXi, VCSA, vSAN, vRO, vRA, NSX, LogInsight)

- Be able to fit the environment into 3 ESXi Hosts

- The environment should run well, but doesn’t have to be a production level environment

Second – Evaluate Software, Hardware, and VM requirements:

My calculated numbers from my Gen 5 build will stay rather static for Gen 7. The only update for Gen 7 is to use the updated requirements table which can be found here >> ‘HOME LABS: A DEFINITIVE GUIDE’

Third – Home Lab Design Considerations

This too will be very similar to Gen 5, but I did review this table and made any last changes to my design.

Fourth – Choosing Hardware

Based on my estimations above I’m going to need a very flexible Mobo, supporting lots of RAM, good network connectivity, and should be as compatible as possible with my Gen 5 hardware. I’ve reused many parts from Gen 5 but the main change came with the Supermicro Motherboard and the addition of 2TB SAS HDD listed below.

Note: I’ve listed the newer items in Italics all other parts I’ve carried over from Gen 5.

Overview:

- My Gen 7 Home Lab is based on vSphere 7 (VCSA, ESXi, and vSAN) and it contains 3 x ESXi Hosts, 1 x Windows 10 Workstation, 4 x Cisco Switches, 1 x MikroTik 10gbe Switch, 2 x APC UPS

ESXi Hosts:

- Case:

- Rosewill RISE Glow EATX (Newegg $54)

- Motherboard:

- Supermicro X9DRD-7LN4F-JBOD (Ebay $159)

- Mobo Stands: 4mm Nylon Plastic Pillar (Amazon $8)

- CPU:

- CPU: Xeon E5-2640 v2 8 Cores / 16 HT (Ebay $30 each)

- CPU Cooler: DEEPCOOL GAMMAXX 400 (Amazon $19)

- CPU Cooler Bracket: Rectangle Socket 2011 CPU Cooler Mounting Bracket (Ebay $16)

- RAM:

- 128GB DDR3 ECC RAM (Ebay $170)

- Disks:

- 64GB USB Thumb Drive (Boot)

- 2 x 200GB SAS SSD (vSAN Cache)

- 2 x 2TB SAS HDD (vSAN Capacity – See this post)

- 1 x 2TB SATA (Extra Space)

- SAS Controller:

- 1 x IBM 5210 JBOD (Ebay)

- CableCreation Internal Mini SAS SFF-8643 to (4) 29pin SFF-8482 (Amazon $18)

- Network:

- Motherboard Integrated i350 1gbe 4 Port

- 1 x MellanoxConnectX3 Dual Port (HP INFINIBAND 4X DDR PCI-E HCA CARD 452372-001)

- Power Supply:

- Antec Earthwatts 500-600 Watt (Adapters needed to support case and motherboard connections)

- Adapter: Dual 8(4+4) Pin Male for Motherboard Power Adapter Cable (Amazon $11)

- Adapter: LP4 Molex Male to ATX 4 pin Male Auxiliary (Amazon $11)

- Power Supply Extension Cable: StarTech.com 8in 24 Pin ATX 2.01 Power Extension Cable (Amazon $9)

Network:

- Core VM Switches:

- 2 x Cisco 3560 (WS-C3560CG-8TC-S 8 Gigabit Ports, 2 Uplink)

- 2 x Cisco 2960 (WS-C2960G-8TC-L)

- 10gbe Network:

- 1 x MikroTik 10gbe CN309 (Used for vSAN and Replication Network)

- 2 ea. x HP 684517-001 Twinax SFP 10gbe 0.5m DAC Cable (Ebay)

- 2 ea. x MELLANOX QSFP/SFP ADAPTER 655874-B21 MAM1Q00A-QSA (Ebay)

Battery Backup UPS:

- 2 x APC NS1250

Windows 10 Workstation:

- Case: Phanteks Enthoo Pro series PH-ES614PC_BK Black Steel

- Motherboard: MSI PRO Z390-A PRO

- CPU: Intel Core i7-8700

- RAM: 64GB DDR4 RAM

- 1TB NVMe

Thanks for reading, please do reach out if you have any questions.

If you like my ‘no-nonsense’ videos and blogs that get straight to the point… then post a comment or let me know… Else, I’ll start posting really boring content!

GA Release VMware PowerCLI 12.1.0 | Announcement, information, and links

VMware announced the GA Releases of the following: VMware PowerCLI 12.1.0

See the base table for all the technical enablement links including a VMworld 2020 session and new Hands On Lab

Release Overview

VMware PowerCLI is a command-line and scripting tool built on Windows PowerShell, and provides more than 700 cmdlets for managing and automating vSphere, VMware Cloud Director, vRealize Operations Manager, vSAN, NSX-T, VMware Cloud Services, VMware Cloud on AWS, VMware HCX, VMware Site Recovery Manager, and VMware Horizon environments.

What’s New

VMware PowerCLI 12.1.0 introduces the following new features, changes, and improvements:

Added cmdlets for

New Features

Added support for

Upgrade Considerations

Ensure the following software is present on your system

Updated Components

In VMware PowerCLI 12.1.0, the following modules have been updated:

Enablement Links

| Resource | Links |
| --- | --- |
| Release Notes | Click Here, What’s New in This Release, Resolved Issues, Known Issues |
| docs.vmware.com/pCLI | Introduction, Installing, Configuring, cmdlet Reference |
| Compatibility Information | Interoperability Matrix, Upgrade Path Matrix |
| Blogs & Infolinks | VMware What’s New pCLI vRLCM, VMware What’s New pCLI with AWS, PM’s Blog pCLI SSO |
| Download | Click Here |
| VMworld 2020 Sessions | PowerCLI: Into the Deep [HCP1286] |
| Hands On Labs | HOL-2111-04-SDC – VMware vSphere Automation – PowerCLI |

Home Lab Generation 7: Upgrading vSAN 7 Hybrid capacity step by step

My GEN5 Home Lab is ever expanding and the space demands on the vSAN cluster were becoming more apparent. This past weekend I updated my vSAN 7 cluster capacity disks from 6 x 600GB SAS HDD to 6 x 2TB SAS HDD and it went very smoothly. Below are my notes and the order I followed around this upgrade. Additionally, I created a video blog (link further below) around these steps. Lastly, I can’t stress this enough – this is my home lab and not a production environment. The steps in this blog/video are just how I went about it and are not intended for any other purpose.

Current Cluster:

- 3 x ESXi 7.0 Hosts (Supermicro X9DRD-7LN4F-JBOD, Dual E5 Xeon, 128GB RAM, 64GB USB Boot)

- vSAN Storage is:

- 600GB SAS Capacity HDD

- 200GB SAS Cache SSD

- 2 Disk Groups per host (1 x 200GB SSD + 1 x 600GB HDD)

- IBM 5210 HBA Disk Controller

- vSAN Datastore Capacity: ~3.5TB

- Amount Allocated: ~3.7TB

- Amount in use: ~1.3TB

Proposed Change:

- Keep the 6 x 200GB SAS Cache SSD Drives

- Remove 6 x 600GB HDD Capacity Disk from hosts

- Replace with 6 x 2TB HDD Capacity Disks

- Upgraded vSAN Datastore ~11TB

Upgrade Notes:

1) I chose to back up (via clone to offsite storage) and power off most of my VMs

2) I clicked on the Cluster > Configure > vSAN > Disk Management

3) I selected the one host I wanted to work with and then the disk group I wanted to work with

4) I located one of the capacity disks (600GB) and clicked on it

5) I noted its NAA ID (will need later)

6) I then clicked on “Pre-check Data Migration” and chose ‘full data migration’

7) The test completed successfully

8) Back at the Disk Management screen I clicked on the HDD I was working with

9) Next I clicked on the ellipsis dots and chose ‘remove’

10) A new window appeared and for vSAN Data Migration I chose ‘Full Data Migration’ then clicked remove

11) I monitored the progress in ‘Recent Tasks’

12) Depending on how much data needed to be migrated, and whether other objects were being resynced, it could take a bit of time per drive. For me this was ~30-90 mins per drive

13) Once the data migration was complete, I went to my host and found the WWN# of the physical disk that matched the NAA ID from Step 5

14) While the system was still running, I removed the disk from the chassis and replaced it with the new 2TB HDD

15) Back at vCenter Server I clicked on the Host in the Cluster > Configure > Storage > Storage Devices

16) I made sure the new 2TB drive was present

17) I clicked on the 2TB drive, chose ‘erase partitions’ and chose OK

18) I clicked on the Cluster > Configure > vSAN > Disk Management > ‘Claim Unused Disks’

19) A new window appeared and I chose ‘Capacity’ for the 2TB HDD, ‘Cache’ for the 200GB SSD drives, and chose OK

20) Recent Tasks showed the disk being added

21) When it was done I clicked on the newly added disk group and ensured it was in a healthy state

22) I repeated this process until all the new HDDs were added
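For anyone who prefers the command line, the NAA IDs noted in the steps above can also be listed with PowerCLI. This is a read-only sketch rather than part of the steps above: it assumes an existing Connect-VIServer session, ‘HomeLab’ is a hypothetical cluster name, and the property names on the returned objects are worth confirming with Get-Member on your PowerCLI version.

```powershell
# Assumption: you are already connected with Connect-VIServer.
# 'HomeLab' is a hypothetical cluster name; replace it with your own.
$cluster = Get-Cluster -Name 'HomeLab'

# Walk every vSAN disk group in the cluster and list its member disks.
# CanonicalName is the naa.* identifier to write down before pulling a drive;
# IsSsd separates the SSD cache device from the HDD capacity devices in a hybrid group.
Get-VsanDiskGroup -Cluster $cluster | ForEach-Object {
    $diskGroup = $_
    Get-VsanDisk -VsanDiskGroup $diskGroup |
        Select-Object @{ Name = 'Host'; Expression = { $diskGroup.VMHost.Name } },
                      CanonicalName,
                      IsSsd
}
```

Nothing here changes the cluster; it only reports what Disk Management already shows, in a form that is easy to copy into notes.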

Final Outcome:

- After upgrade the vSAN Storage is:

- 2TB SAS Capacity HDD

- 200GB SAS Cache SSD

- 2 Disk Groups per host (1 x 200GB SSD + 1 x 2TB HDD)

- IBM 5210 HBA Disk Controller

- vSAN Datastore is ~11.7TB

Notes & other thoughts:

- I was able to complete the upgrade in this order due to the nature of my home lab components, mainly because I’m running a SAS Storage HBA that is just a JBOD controller supporting hot-pluggable drives.

- Make sure you run the data migration pre-checks and follow any advice they give. This came in very handy.

- If you don’t have enough space to fully evacuate a capacity drive you will either have to add more storage or completely remove VMs from the cluster.

- Checking Cluster > Monitor > vSAN > Resyncing Objects gave me a good idea of when I should start my next migration. I look for it to be complete before I start. If you have a very active cluster this may be harder to achieve.

- Checking the vSAN Cluster Health should be done, especially Cluster > Monitor > Skyline Health > Data > vSAN Object Health. Any issues in these areas should be looked into prior to migration.

- Not always, but usually, the disk NAA ID reported in vCenter Server/vSAN coincides with the WWN number on the HDD

- By changing my HDDs from 600GB SAS 10K to 2TB SAS 7.2K there will be a performance hit. However, my lab needed more space and 10k-15K drives were just out of my budget.

- Can’t recommend this reference Link from VMware enough: Expanding and Managing a vSAN Cluster
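The resync and free-space checks in the notes above can also be done from PowerCLI. The following is a minimal sketch under the same assumptions as any PowerCLI session (already connected with Connect-VIServer); ‘HomeLab’ is a hypothetical cluster name, and the output properties may differ slightly between PowerCLI releases.

```powershell
# Assumption: connected via Connect-VIServer; 'HomeLab' is a hypothetical cluster name.
$cluster = Get-Cluster -Name 'HomeLab'

# Anything returned here means vSAN is still resyncing components,
# so it is probably too early to evacuate the next capacity disk.
$resyncing = @(Get-VsanResyncingComponent -Cluster $cluster)
if ($resyncing.Count -gt 0) {
    Write-Host "$($resyncing.Count) component(s) still resyncing - wait before the next disk."
} else {
    Write-Host 'No resync in progress.'
}

# Show total and free capacity to judge whether a full data migration can fit.
Get-VsanSpaceUsage -Cluster $cluster | Select-Object CapacityGB, FreeSpaceGB
```

This does not replace the pre-check in the UI; it is just a quick way to confirm the cluster is quiet before starting on the next drive.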

Video Blog:


Create an ESXi installation ISO with custom drivers in 9 easy steps!

One of the challenges in running a VMware-based home lab is working with old / inexpensive hardware while running the latest software. It’s a balance that is sometimes frustrating, but when it works it is very rewarding. Most recently I decided to move to 10GbE from my InfiniBand 40Gb network. Part of this transition was to create an ESXi ISO with the latest build (6.7U3) and the appropriate network card drivers. In this video blog post I’ll show 9 easy steps to create your own customized ESXi ISO and how to pinpoint IO cards on the VMware HCL.

** Update 06/22/2022 ** If you are looking to do USB NICs with ESXi check out the new fling (USB Network Native Driver for ESXi) that helps with this. This Fling supports the most popular USB network adapter chipsets ASIX USB 2.0 gigabit network ASIX88178a, ASIX USB 3.0 gigabit network ASIX88179, Realtek USB 3.0 gigabit network RTL8152/RTL8153 and Aquantia AQC111U. https://flings.vmware.com/usb-network-native-driver-for-esxi

NOTE – Flings are NOT supported by VMware

** Update 03/06/2020 ** Though I had good luck with the HP 593742-001 NC523SFP DUAL PORT SFP+ 10Gb card in my Gen 4 Home Lab, I found it faulty when running in my Gen 5 Home Lab. It could be that I was using a PCIe x4 slot in Gen 4, or it could be that the card runs too hot to touch. For now this card has been removed from the VMware HCL, HP has advisories out about it, and after doing some poking around there seem to be lots of issues with it. I’m looking for a replacement and may go with the HP NC550SFP. However, the steps in this video are not only for this card; they help you better understand how to add drivers to an ISO.

Here are the written steps I took from my video blog. If you are looking for more detail, watch the video.

Before you start – make sure you have PowerCLI installed, have downloaded these files, and have placed them in c:\tmp.

- Download driver –

- QLogic Driver: https://my.vmware.com/group/vmware/details?downloadGroup=DT-ESXI60-QLOGIC-QLCNIC-61191&productId=491

- Note: Extract the offline bundle from this package

- Download ESXi –

- ESXi Update ZIP File: vmware.com/downloads

- Note: make sure you download the Update ZIP file and not the ESXi ISO file

I started up PowerCLI and did the following commands:

1) Add the ESXi Update ZIP file to the depot:

Add-EsxSoftwareDepot C:\tmp\update-from-esxi6.7-6.7_update03.zip

2) Add the QLogic Offline Bundle ZIP file to the depot:

Add-EsxSoftwareDepot 'C:\tmp\qlcnic-esx55-6.1.191-offline_bundle-2845912.zip'

3) Make sure the files from step 1 and 2 are in the depot:

Get-EsxSoftwareDepot

4) Show the Profile names from update-from-esxi6.7-6.7_update03. The default command only shows part of the name. To correct this and see the full name use the ‘| select name’

Get-EsxImageProfile | select name

5) Create a clone profile to start working with.

New-EsxImageProfile -cloneprofile ESXi-6.7.0-20190802001-standard -Name ESXi-6.7.0-20190802001-standard-QLogic -Vendor QLogic

6) Validate that the QLogic driver is loaded in the local depot. It should match the driver from step 2. Make sure you note the name and version number columns. We’ll need to combine these two with a space in the next step.

Get-EsxSoftwarePackage -Vendor q*

7) Add the software package to the cloned profile. Tip: For ‘SoftwarePackage:’ you should enter the ‘name’ space ‘version number’ from step 6. If you just use the short name it might not work.

Add-EsxSoftwarePackage

ImageProfile: ESXi-6.7.0-20190802001-standard-QLogic

SoftwarePackage[0]: net-qlcnic 6.1.191-1OEM.600.0.0.2494585

8) Optional: Compare the profiles, to see differences, and ensure the driver file is in the profile.

Get-EsxImageProfile | select name    # Run this if you need a reminder of the profile names

Compare-EsxImageProfile -ComparisonProfile ESXi-6.7.0-20190802001-standard-QLogic -ReferenceProfile ESXi-6.7.0-20190802001-standard

9) Create the ISO

Export-EsxImageProfile -ImageProfile "ESXi-6.7.0-20190802001-standard-QLogic" -ExportToIso -FilePath c:\tmp\ESXi-6.7.0-20190802001-standard-QLogic.iso
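If you would rather run the nine steps in one go, they can be strung together roughly as follows. Treat this as a sketch, not a tested replacement for the steps above: the file names, profile name, and package name/version are simply the ones used in this post, the variable names are my own, and the package is passed on the command line instead of being typed at the interactive prompt shown in step 7.

```powershell
# File, profile, and package names are the ones used in the steps above;
# swap in your own values. Variable names are illustrative only.
$esxiBundle   = 'C:\tmp\update-from-esxi6.7-6.7_update03.zip'
$driverBundle = 'C:\tmp\qlcnic-esx55-6.1.191-offline_bundle-2845912.zip'
$baseProfile  = 'ESXi-6.7.0-20190802001-standard'
$newProfile   = "${baseProfile}-QLogic"
$driverPkg    = 'net-qlcnic 6.1.191-1OEM.600.0.0.2494585'   # 'name' space 'version' from step 6

# Steps 1-3: load both offline bundles and confirm they are in the depot.
Add-EsxSoftwareDepot -DepotUrl $esxiBundle
Add-EsxSoftwareDepot -DepotUrl $driverBundle
Get-EsxSoftwareDepot

# Steps 4-5: list the available profiles, then clone the standard one.
Get-EsxImageProfile | Select-Object Name
New-EsxImageProfile -CloneProfile $baseProfile -Name $newProfile -Vendor 'QLogic'

# Steps 6-7: confirm the driver is visible, then add it to the clone.
Get-EsxSoftwarePackage -Vendor 'q*'
Add-EsxSoftwarePackage -ImageProfile $newProfile -SoftwarePackage $driverPkg

# Step 8 (optional): show what differs between the clone and the original.
Compare-EsxImageProfile -ReferenceProfile $baseProfile -ComparisonProfile $newProfile

# Step 9: write the customized ISO.
Export-EsxImageProfile -ImageProfile $newProfile -ExportToIso -FilePath "C:\tmp\${newProfile}.iso"
```

Keeping the names in variables at the top means the same script can be reused for a different ESXi build or driver by editing only the first five lines.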

That’s it!

Cross vSAN Cluster support for FT