Storage

Home Lab Generation 7: Part 1 – Change Rationale for software and hardware changes

Well, it’s that time of year again: time to deploy new changes and upgrades, and add some new hardware. I’ll be updating my ESXi hosts and vCenter Server from vSphere 7U2d to the latest vSphere 7 Update 3a. Additionally, I’ll be swapping out the IBM 5210 JBOD for a Dell HBA330+, and lastly, I’ll change my boot device to a more reliable, persistent disk. I have 3 x ESXi hosts with vSAN, vDS switches, and NSX-T. If you want to understand my environment a bit better, check out this page on my blog. In this two-part blog I’ll go through the steps I took to update my home lab and some of the rationale behind it.

There are two main parts to the blog:

- Part 1 – Change Rationale for software and hardware changes – In this part I’ll explain some of my thinking behind why I’m making these software and hardware changes.

- Part 2 – Installation and Upgrade Steps – These are the high-level steps I took to change and upgrade my home lab.

Part 1 – Change Rationale for software and hardware changes:

There are three key changes that I plan to make to my environment:

- One – Update to vSphere 7U3a

- vSphere 7U3 brings many changes to vSphere, including much-needed feature updates to vCenter Server and ESXi. Additionally, vSphere 7U3 and 7U3a include several important bug fixes and corrections. For more information on the updates in vSphere 7U3, see “vSphere 7 Update 3 – What’s New” by Bob Plankers. For even more detail, check out the release notes.

- Part of my rationale for upgrading is to prepare to talk with my customers about the benefits of this update. I always test the latest updates on VMware Workstation first, then carry those learnings into my home lab.

- Two – Change out the IBM 5210 JBOD

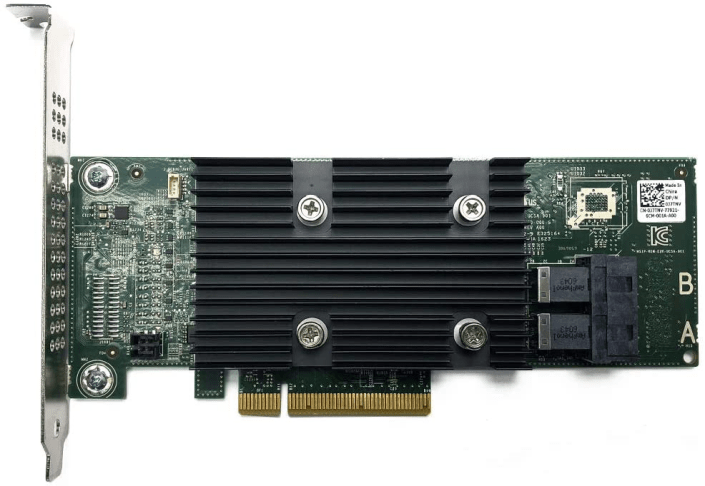

- The IBM 5210 JBOD is a carryover component from my vSphere 6.x vSAN environment. It worked well with vSphere 6.x and 7U1. However, starting with 7U2 it began to exhibit stuck IO issues and the occasional PSOD. This card was only certified with vSphere/vSAN 6.x, and at some point the cache module became a requirement. My choices at this point were to upgrade this controller with a cache module (~$50 each) and hope it works better, or to make a change. In this case I decided to switch to the Dell HBA330 (~$70 each). The HBA330 is a JBOD controller that Dell essentially developed with VMware for vSAN. It is on the vSphere/vSAN 7U3 HCL and should have a long life there too. Additionally, the HBA330 edge connectors (Mini SAS SFF-8643) line up with my existing SAS breakout cables. When I compared the benefits of the Dell HBA330 to adding a cache module to the IBM 5210, the HBA330 was the clear choice. The trick is finding an HBA330 that is cost effective and comes with a full-height slot cover. They can be hard to find, but eBay has them if you look a bit harder.

- Three – Change my boot disk

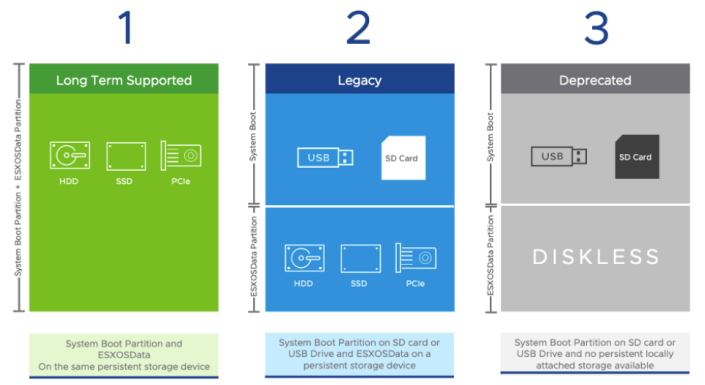

- In September 2021, VMware announced that boot from USB is going to change, and customers were advised to plan ahead for these upcoming changes. My current hosts boot from cheap 64GB SanDisk USB memory sticks. It’s something I would never recommend for a production environment, but for a home lab they worked okay. I originally chose them during my Home Lab Gen 5 updates because I needed to test USB-booted hosts. Now that VMware has deprecated support for USB/SD boot devices, it’s time to make a change. Point of clarity: the word deprecated can mean different things to different people. In the software industry, however, deprecated means to “discourage the use of (something, such as a software product) in favor of a newer or better alternative”. In vSphere 7, USB/SD-booted hosts are in a deprecated state: they are still supported, but customers are strongly advised to plan ahead. As of this writing, USB/SD-booted hosts on the next major release (vSphere.NEXT) will only be supported in a legacy mode that requires a persistent disk, and eventually, in the “Long Term Supported” mode, USB/SD-booted hosts will no longer be supported. Customers should seek guidance from VMware when making these changes.

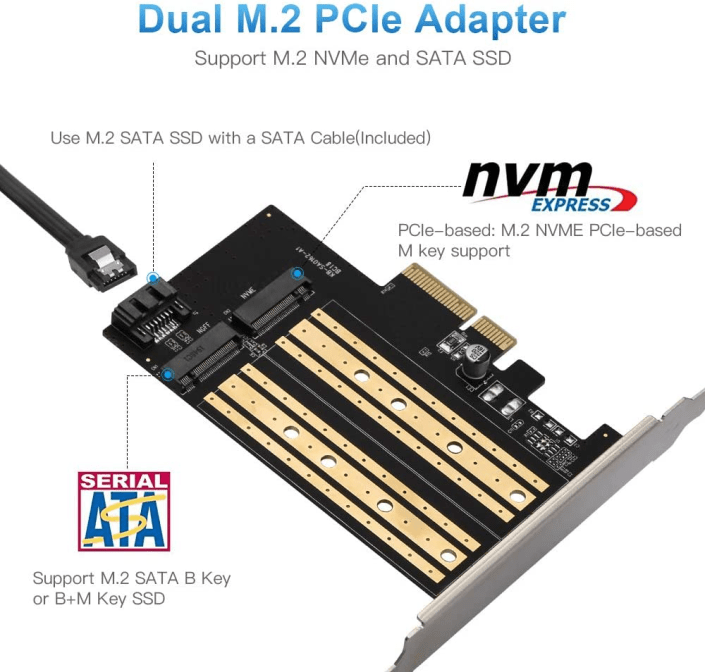

- The requirement for the “Long Term Supported” mode is for an ESXi host to boot from an HDD, SSD, or PCIe device. In my case, I didn’t want to add more disks to my system, so I chose to go with a PCIe SSD/NVMe card. The PCIe card I chose supports both M.2 (SATA SSD) and NVMe devices in one slot, and I decided to use a Kingston A400 240G Internal SSD M.2 as my boot disk. At 240GB, the A400 should be more than enough to boot the ESXi hosts and keep up with their disk demands going forward.

Final thoughts and an important warning: making changes that affect your current environment is never easy, but it is sometimes necessary. A little planning can make the journey a bit easier. I’ll be testing these changes over the next few months and will post an update if issues occur. However, a word of warning – adding new devices to an environment can directly impact your ability to migrate or upgrade your hosts. Because of the hardware decisions I have made, a direct ESXi upgrade is not possible, so I’ll have to remove my current hosts from vCenter Server and other software and do a new installation. Those details and more will be in Part 2 – Installation and Upgrade Steps.

Opportunity for vendor improvement – This is where backup vendors like Synology, asustor, Veeam, Veritas, NAKIVO, and Acronis could really shine. If they could back up an ESXi host and restore it to dissimilar hardware or boot disks, it would be a huge improvement for VI admins, especially those with tens of thousands of hosts that need to move from USB to persistent disks. This is not a new ask; VI admins have been requesting this option for years. Maybe now these companies will listen, as many users and their hosts are going to be affected by these upcoming requirements.

Quick NAS Topics: USB WiFi on the DRIVESTOR 2 Pro

During this Quick NAS Topic I review how to install and test a supported USB WiFi network device in the DRIVESTOR 2 Pro.

** Products / Links Seen in this Video **

DRIVESTOR 2 PRO

asustor – https://www.asustor.com/en/product?p_id=72 and https://www.asustor.com/datasheet?p_id=72

OR Amazon – https://www.amazon.com/Asustor-Drivestor-Pro-AS3302T-Attached/dp/B08ZD7R72P

ASUS USB-AC56 https://www.asus.com/Networking-IoT-Servers/Adapters/All-series/USBAC56/

** Other Links **

https://www.asustor.com/service/compatibility

https://www.asustor.com/service/wifi?id=wifi

https://downloadgb.asustor.com/download/docs/User_Guide/ADM40/ASUSTOR_NAS_USER_GUIDE_ENU_4.0.0.0304.pdf

https://www.asustor.com/online/College

For more details, go to vmexplorer.com

Quick NAS Topics: Expanding the Capacity of your NAS with the DRIVESTOR 2 Pro

During this video I demonstrate the steps to expand the asustor DRIVESTOR 2 Pro running on ADM Ver. 4.0.0.RKV2. The initial volume is RAID1 500GB and I expand it into a RAID1 3TB volume. Though this video doesn’t cover backing up your data, users should ensure a proper backup is completed prior to doing these steps.

** Products / Links Seen in this Video **

Further instructions can be found here:

https://www.asustor.com/online/College_topic?topic=352#raid21

DRIVESTOR 2 PRO:

asustor – https://www.asustor.com/en/product?p_id=72 and https://www.asustor.com/datasheet?p_id=72

Amazon – https://www.amazon.com/Asustor-Drivestor-Pro-AS3302T-Attached/dp/B08ZD7R72P

Quick NAS Topics: One Touch Backup with the LOCKERSTOR 10

In this quick video I go through the basics of setting up the One Touch Backup with the LOCKERSTOR 10 on ADM 3.5.x.

10Gbe NAS Home Lab Part 6 Initial Setup Synology 1621+

In this video I start to set up the DS1621+. I cover where to find basic information on this NAS and how it aligns with the VMware HCL. From there I demonstrate the initial setup of the DS1621+.

** URLS Seen in this Video **

10Gbe NAS Home Lab Part 5 Initial Setup DRIVESTOR 2 Pro and LOCKERSTOR 10

In this extra-long video I review some of the key features of the DRIVESTOR 2 Pro (AS3302T) and LOCKERSTOR 10 (AS6510T). From there I demonstrate the initial setup of both NAS devices. Finally, I install Asustor Control Center and review the VMware HCL for Asustor products.

** Products / Links Seen in this Video **

DRIVESTOR 2 PRO

asustor – https://www.asustor.com/en/product?p_id=72 and https://www.asustor.com/datasheet?p_id=72

Amazon – https://www.amazon.com/Asustor-Drivestor-Pro-AS3302T-Attached/dp/B08ZD7R72P

LOCKERSTOR10

asustor – https://www.asustor.com/product?p_id=64 and https://www.asustor.com/datasheet?p_id=64

Amazon – https://www.amazon.com/Asustor-Lockerstor-Enterprise-Attached-Quad-Core/dp/B07Y2BJWLT/

10Gbe NAS Home Lab Part 4: asustor DRIVESTOR 2 PRO and LOCKERSTOR10 Teardown

In part 4 of this series I tear down the asustor DRIVESTOR 2 PRO and LOCKERSTOR10. These are non-warranty demo units, which allowed me to tear them down. I highly recommend you check with asustor about warranty information before any disassembly.

** Products Seen in this Video **

DRIVESTOR 2 PRO

asustor – https://www.asustor.com/en/product?p_id=72

Amazon – https://www.amazon.com/Asustor-Drivestor-Pro-AS3302T-Attached/dp/B08ZD7R72P

LOCKERSTOR10

asustor – https://www.asustor.com/product?p_id=64

Amazon – https://www.amazon.com/Asustor-Lockerstor-Enterprise-Attached-Quad-Core/dp/B07Y2BJWLT/

10Gbe NAS Home Lab Part 3: asustor DRIVESTOR 2 PRO and LOCKERSTOR10 Unboxing

In part 3 of this series asustor joins the 10Gbe NAS Home Lab build! In this video I take a first look at and unbox the asustor DRIVESTOR 2 PRO and LOCKERSTOR10. I also go over some of their features.

** Products Seen in this Video **

DRIVESTOR 2 PRO

asustor – https://www.asustor.com/en/product?p_id=72

Amazon – https://www.amazon.com/Asustor-Drivestor-Pro-AS3302T-Attached/dp/B08ZD7R72P

LOCKERSTOR10

asustor – https://www.asustor.com/product?p_id=64

Amazon – https://www.amazon.com/Asustor-Lockerstor-Enterprise-Attached-Quad-Core/dp/B07Y2BJWLT/

10Gbe NAS Home Lab Part 2: Dissecting the Synology DiskStation DS1621+

In part 2 of this series I dissect the Synology DiskStation DS1621+. This is a pretty long video – best to watch it at 2x speed :) Note: This was a loaner unit and I do not recommend others doing this as it may void the warranty. I made this video to simply show others what the insides look like. Reach out if you have questions.

** PARTS Seen in this Video **

1x Synology 6 bay NAS DiskStation DS1621+ https://www.amazon.com/Synology-Bay-DiskStation-DS1621-Diskless/dp/B08HYQJJ62

2x Synology M.2 2280 NVMe SSD SNV3400 800GB https://www.amazon.com/Synology-2280-NVMe-SNV3400-800GB/dp/B08WLJYY76/

10Gbe NAS Home Lab Part 1: Parts Overview and Synology DS1621+

In this new video series I’ll be testing several NAS products within my NAS test lab.

My plan is to set up a new 10GbE network with 2 x Windows 10 PCs with 10GbE NICs, laptops, cell phones, VMware ESXi, and various other devices to see how they perform with the different NAS devices. Additionally, I’ll be going over the NAS devices and their software options too. A big part of my home network is the use of PLEX, so seeing how these devices handle PLEX and their other built-in apps should make for some interesting content.

It all starts with this blog and in this initial video I go over some of the parts I’ve assembled for my NAS development lab. As the series progresses I plan to enhance the lab and how the devices interact with it.

** Advisement **

- 07/30/21: I am just starting to work with these components and set them up. Everything tells me they should work together. However, I have not tested them together.

- Any products that I blog/vblog about may or may not work – YOU ultimately assume all risk

** PARTS Seen in this Video **

- 1x Synology 6 bay NAS DiskStation DS1621+ https://www.amazon.com/Synology-Bay-DiskStation-DS1621-Diskless/dp/B08HYQJJ62

- 2x Synology M.2 2280 NVMe SSD SNV3400 800GB https://www.amazon.com/Synology-2280-NVMe-SNV3400-800GB/dp/B08WLJYY76/

- 1x Synology 10Gb Ethernet Adapter 2 SFP+ Ports (E10G21-F2), Black https://www.amazon.com/Synology-Ethernet-Adapter-Ports-E10G21-F2/dp/B08WLJQYL2

- 10x Cable Matters 5-Pack Snagless Short Cat6A (SSTP, SFTP) Shielded Ethernet Cable in Black 7 ft https://www.amazon.com/gp/product/B00HEM5FEI

- 2x ASUS XG-C100C 10G Network Adapter Pci-E X4 Card with Single RJ-45 Port https://www.amazon.com/gp/product/B072N84DG6/

- 1x MikroTik 9-Port Desktop Switch, 1 Gigabit Ethernet Port, 8 SFP+ 10Gbps Ports (CRS309-1G-8S+IN) https://www.amazon.com/gp/product/B07NFXN4SS/

- 8x FLYPROFiber 10GBase-T SFP+ to RJ45 for MikroTik https://www.amazon.com/gp/product/B08FXBFZP8/

- 10x HP 684517-001 TWINAX SFP+ 10GBE 0.5M DAC Cable Assy 611980001 4N6H4-01 https://www.ebay.com/itm/264745339079?ssPageName=STRK%3AMEBIDX%3AIT&_trksid=p2060353.m2749.l2649
