ESXi

VMware Workstation 17 Nested Home Lab Part 2

In Part 2, I give a physical hardware overview, show how to enable VT-x and VT-d, install Windows 11 (including a trick to disable the TPM requirement), install Workstation 17, and review common issues when Hyper-V is enabled. By the end of this video, Windows 11 and Workstation 17 are installed and operational. Coming up in Part 3, we’ll build out our Workstation networks and start building the Windows 2022 server with AD, DNS, RAS, DHCP, and other services.
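The video doesn't spell out the TPM trick in text. As a hedged illustration only: one widely shared approach is to set bypass values under the `HKLM\SYSTEM\Setup\LabConfig` registry key from the Shift+F10 command prompt during Windows 11 setup. The Python sketch below just generates the text of such a `.reg` file. The helper name `labconfig_reg` is hypothetical, and this may not be the exact trick shown in the video.

```python
# Hypothetical helper: builds the text of a .reg file that sets commonly used
# Windows 11 setup bypass values under HKLM\SYSTEM\Setup\LabConfig.
# One widely shared approach; not necessarily the trick used in the video.

BYPASS_VALUES = ("BypassTPMCheck", "BypassSecureBootCheck")


def labconfig_reg(values=BYPASS_VALUES) -> str:
    """Return .reg file text that sets each named value to DWORD 1."""
    lines = [
        "Windows Registry Editor Version 5.00",
        "",
        r"[HKEY_LOCAL_MACHINE\SYSTEM\Setup\LabConfig]",
    ]
    for name in values:
        # REG_DWORD data is written as 8 hexadecimal digits in .reg files
        lines.append(f'"{name}"=dword:{1:08x}')
    # .reg files use Windows (CRLF) line endings
    return "\r\n".join(lines) + "\r\n"


if __name__ == "__main__":
    print(labconfig_reg(), end="")
```

The printed text could be saved as `labconfig.reg` and imported with `reg import labconfig.reg` from the setup command prompt before continuing the install.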

#Optane #IntelXeon #Xeon #vExpert #VMware #Cloud #datacenter

VMware Workstation 17 Nested vSAN ESA Overview

In this high-level video I give an overview of my VMware Workstation running 3 x nested ESXi 8 hosts, vSAN ESA, VCSA, and a Windows 2022 AD server. I also show some early performance results using HCIBench.

I got some great feedback from my subscribers after posting this video. They were asking for a more detailed series about this build. You can find this 8-part series under Best of VMX > ‘VMware Workstation Generation 8 : Complete Software Build Series’.

For more information about my VMware Workstation Generation 8 build, check out my latest BOM here.

First Look GEN8 ESXi/vSAN ESA 8 Home Lab (Part 1)

I’m kicking off my next-generation home lab with this first look into my choice for an ESXi/vSAN 8 host. There will be more videos to come as this series evolves!

Will ESXi 8 install onto the ASRockRack EPC621D8A motherboard?

In this video I show how I installed ESXi 8 onto the ASRockRack EPC621D8A motherboard and discuss some of the caveats of doing so.

Links in the video:

ASRockRack EPC621D8A motherboard: https://www.asrockrack.com/general/productdetail.asp?Model=EPC621D8A#specifications

VMware Compatibility Guide: https://www.vmware.com/resources/compatibility/detail.php?deviceCategory=server&productid=47030&deviceCategory=server&details=1&partner=600&page=1&display_interval=10&sortColumn=Partner&sortOrder=Asc

H3C NIC-GE-4P-360T-L3 NIC: https://www.vmware.com/resources/compatibility/detail.php?deviceCategory=io&productid=50861&deviceCategory=io&details=1&VID=8086&DID=37d1&SVID=8086&page=1&display_interval=10&sortColumn=Partner&sortOrder=Asc

Quick NAS Topics: Serial USB Server with the LOCKERSTOR 10

In this Quick NAS Topic video I go over how to install the VirtualHere USB Server on the LOCKERSTOR 10 and its client on my Windows 10 PC. This enables the client to establish a link to a USB null modem cable that is connected directly to the NAS. Once the link is established, I’m able to use PuTTY to open a serial console connection.

** Products in this Video **

- LOCKERSTOR 10 https://www.asustor.com/en/product?p_id=64

- Mikrotik CRS309-1G-8S+IN https://mikrotik.com/product/crs309_1g_8s_in

- VirtualHere https://www.virtualhere.com/home

- StarTech.com USB to Serial RS232 Adapter https://www.amazon.com/USB-Serial-Adapter-Modem-9-pin/dp/B008634VJY?th=1

Tips for installing Windows 7 x32 SP1 on Workstation 16.1.2

This past weekend I needed to install Windows 7 x32 to support some older software. After installing Windows 7 x32, I noticed the option to install VMware Tools was grayed out. I then tried to install VMware Tools manually, but it failed. There are a few tricks to overcome this issue, and in this blog I’ll cover the steps I took to resolve it.

So what changed and why all these extra steps?

You may recall that Workstation 16.0.0 could install Windows 7 SP1 x32 without any additional intervention. Starting in September 2019, Microsoft added SHA-2 algorithm requirements for driver signing. As Workstation 16 released updates, it too included updated VMware Tools that were compliant with the Microsoft SHA-2 requirements. So if you deploy the Windows 7 SP1 x32 ISO (which doesn’t have the SHA-2 patch), the VMware Tools install will fail because Windows cannot validate the driver signatures. For a bit more information, see VMware KB 78655.

What are the options to fix this?

By default, Windows 7 x32 SP1 doesn’t include the needed SHA-2 updates. Users have 2 options when doing new installs.

Option 1: Create an updated Windows 7 SP1 ISO by slipstreaming in the Convenience Rollup Patch (More details here), then use this slipstreamed ISO to do the install on Workstation. From there you should be able to install VMware Tools.

Option 2: After the Windows 7 SP1 installation is complete, manually install the SHA-2 update and then install VMware Tools. See the steps below.

Steps for Option 2:

- First I created a new Workstation VM. When creating it I made sure the ISO path pointed to the Windows 7 SP1 ISO and Workstation adjusted the VM hardware to be compatible with Windows 7 SP1. I allowed the OS installation to complete.

- After the OS was installed, I applied the following Microsoft patch:

- VMware Tools requires Windows 7 SP1 to have the KB4474419 update installed; see https://kb.vmware.com/s/article/78708

- I downloaded this patch (2019-09 Security Update for Windows 7 for x86-based Systems (KB4474419)) directly to the newly created Windows 7 VM and installed it.

- Download TIP – To download this update, I needed to right-click on the *.msu link > choose ‘Save link as’ > click the up arrow next to ‘Discard’ and choose ‘Keep’ > and save it to a folder

- After the reboot, I went into Workstation and did the following:

- Right-clicked on the VM > Settings > CD/DVD

- Made sure ‘Device status’ had ‘Connected’ and ‘Connect at power on’ checked

- Clicked on ‘Use ISO image’ > Browse

- Browsed to this folder ‘C:\Program Files (x86)\VMware\VMware Workstation’

- Chose ‘windows.iso’

- Chose OK to close the VM Settings

- Back in the Windows 7 VM, I went into File Explorer, opened the CD, and ran setup.exe

- From there I followed the default steps to install VMware Tools and rebooted

- Screenshot of the final outcome
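Before mounting `windows.iso`, it can help to confirm that KB4474419 actually installed. This is a minimal sketch that assumes Python is available on the Windows 7 VM (or that you paste the output of `wmic qfe get HotFixID` into it on another machine). `hotfix_installed` is a hypothetical helper that just searches the hotfix listing for the KB ID.

```python
import re
import subprocess
import sys

# Update that VMware KB 78708 says Windows 7 SP1 needs before VMware Tools installs
REQUIRED_KB = "KB4474419"


def hotfix_installed(hotfix_listing: str, kb: str = REQUIRED_KB) -> bool:
    """Return True if `kb` appears as a whole token in a hotfix listing."""
    # \b stops KB4474419 from matching inside a longer ID such as KB44744190
    pattern = rf"\b{re.escape(kb)}\b"
    return re.search(pattern, hotfix_listing, re.IGNORECASE) is not None


if __name__ == "__main__" and sys.platform == "win32":
    # Assumes the built-in wmic tool is present, as it is on Windows 7
    listing = subprocess.run(
        ["wmic", "qfe", "get", "HotFixID"], capture_output=True, text=True
    ).stdout
    print(f"{REQUIRED_KB} installed: {hotfix_installed(listing)}")
```

The check reads the same hotfix list that Windows reports, so it only confirms that the update registered. It says nothing about whether the VMware Tools install will then succeed.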

Home Lab Generation 7: Part 2 – New Hardware and Software Updates

In the final part of this 2-part series, I’ll document the steps I took to update my Home Lab Generation 7 with the new hardware and software changes. There’s quite a bit of change going on, and these steps worked well for my environment.

Pre-Update Steps:

- Check Product Interoperability Matrix (VCSA, ESXi, NSX, vRNI, VRLI)

- Check VMware Compatibility Guide (Network Cards, JBOD)

- Ensure the vSAN cluster is in a healthy state

- Back up VMs

- Ensure your passwords are updated

- Document basic host settings (network, vmks, NTP, etc.)

- Back up the VCSA via the Management Console > Backup

- NOTE: I used the /n software SFTP server to receive the backup files

Steps to update vCenter Server from 7U2d (7.0.2.00500) to 7U3a (7.0.3.00100):

- Downloaded VCSA 7U3a VMware-vCenter-Server-Appliance-7.0.3.00100-18778458-patch-FP.iso

- Used WinSCP to connect to an ESXi host and upload the update/patch ISO to the ISO-Images folder on the vSAN datastore

- Mounted the ISO from step 1 to the VCSA 7U2d VM

- NOTE: A reboot of the VCSA may be necessary for it to recognize the attached ISO

- Went to the VCSA Management Console > Update; Check Updates should auto-start

- NOTE: It might fail to find the ISO. If so, choose ‘CD ROM’ to detect the ISO

- Expanded the version > Run Pre-Update Checks

- Once it passed the pre-checks, chose Stage and Install > Accept the terms > Next

- Checked ‘I have backed up vCenter Server…’

- NOTE: Clicking on ‘go to Backup’ will exit the wizard and you’ll have to start over

- Clicked Finish and allowed it to complete

- Once done, logged back into the Management Console > Summary and validated the version

- Lastly, detached the datastore ISO; I simply chose ‘Client Device’
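Because the vCenter patch ISO name carries both the version and the build number, a quick sanity check before uploading it can catch a wrong download. The sketch below assumes the `...-patch-FP.iso` naming of the file used above. `check_patch_iso` and `parse_version` are hypothetical helpers.

```python
import re

# Assumes the patch ISO naming used above:
# VMware-vCenter-Server-Appliance-<version>-<build>-patch-FP.iso
ISO_PATTERN = re.compile(
    r"^VMware-vCenter-Server-Appliance-(\d+(?:\.\d+){3})-(\d+)-patch-FP\.iso$"
)


def parse_version(text: str) -> tuple:
    """Turn '7.0.3.00100' into (7, 0, 3, 100) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def check_patch_iso(iso_name: str, current_version: str) -> tuple:
    """Return (version, build) from the ISO name, or raise if it is not an upgrade."""
    match = ISO_PATTERN.match(iso_name)
    if match is None:
        raise ValueError(f"unexpected ISO name: {iso_name}")
    version, build = match.group(1), int(match.group(2))
    if parse_version(version) <= parse_version(current_version):
        raise ValueError(f"{version} is not newer than {current_version}")
    return version, build


if __name__ == "__main__":
    print(check_patch_iso(
        "VMware-vCenter-Server-Appliance-7.0.3.00100-18778458-patch-FP.iso",
        "7.0.2.00500",  # 7U2d, the version being upgraded from
    ))
```

Run as-is, this prints `('7.0.3.00100', 18778458)`. Comparing integer tuples rather than strings avoids treating "7.0.10" as older than "7.0.9".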

Change Boot USB to SSD and upgrade to ESXi 7U3 one host at a time:

- Remove Host from NSX-T Manager (Follow these steps)

- In vCenter Server

- Put Host 1 in Maintenance Mode Ensure Accessibility (better if you can evacuate all data | run pre-check validation)

- Shut down the host

- Remove Host from Inventory (NOTE: Wait for host to go to not responding first)

- On the HOST

- Precautionary step – Turn off the power supply on the host, helps with the onboard management ability to detect changes

- Remove the old USB boot device

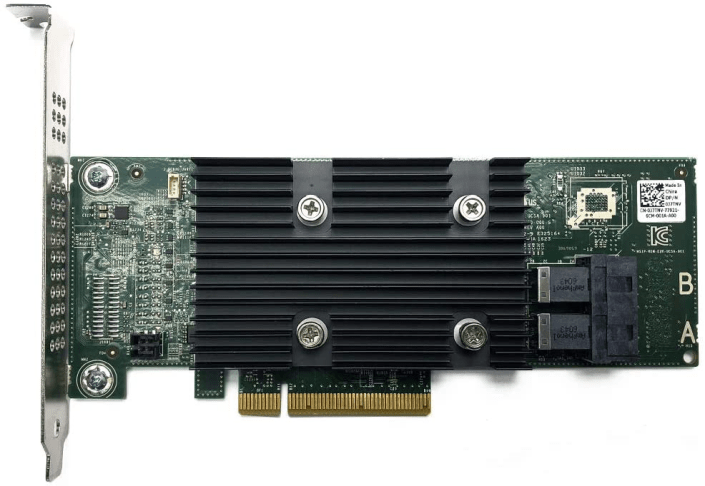

- Install Dell HBA330 and M.2/NVMe PCIe Card w/ 240GB SSD into the Host

- Power On the Host and validate firmware is updated (Mobo, Disk, Network, etc.)

- Here is how I updated the Dell HBA330 Firmware (SEE VIDEO HERE)

- During boot ensure the Dell HBA330 POST screen displays (optional hit CTRL-C to view its options)

- In the Host BIOS Update the boot disk to the new SSD Card

- ESXi Install

- Boot the host to ESXi 7.0U3 ISO (I used SuperMicro Virtual Media to boot from)

- Install ESXi to the SSD Card, Remove ISO, Reboot

- Update Host boot order in BIOS for the SSD Card and boot host

- In the ESXi DUCI, configure host with correct IPv4/VLAN, DNS, Host Name, enable SSH/Shell, disable IPv6 and reboot

- From this ESXi host and from another connected device, validate you can ping the Host IP and its DNS name

- Add Host to the Datacenter (not vSAN Cluster)

- Ensure Host is in Maintenance mode and validate health

- Erase all partitions on vSAN Devices (Host > Configure > Storage Devices > Select devices > Erase Partitions)

- Rename the new SSD datastore (Storage > R-Click on datastore > Rename)

- Add Host to Cluster (but do not add to vSAN)

- Add Host to vDS Networking, could be multiple vDS switches (Networking > Target vDS > Add Manage Hosts > Add Hosts > Migrate VMKernel)

- Complete the Host configuration settings (NTP, vmks)

- Create vSAN Disk Groups (Cluster > Configure > vSAN > Disk Management)

- Monitor and allow to complete, vSAN Replication Objects (Cluster > Monitor > vSAN > Resyncing Objects)

- Extract a new Host Profile and use it to build out the other hosts in the cluster

- ESXi Install – Additional Hosts

- Repeat Steps 1, 2, 3, and only Steps 4.1-4.10

- Attach Host Profile created in Step 4.15

- Check Host Profile Compliance

- Edit and update Host Customizations

- Remediate the host (the remediation will to a pre-check too)

- Optional validate host settings

- Exit Host from Maintenance mode

- Before starting next host ensure vSAN Resyncing Objects is completed
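The one-host-at-a-time rule, and in particular waiting for vSAN resyncing to finish before touching the next host, can be expressed as a small loop. This is only a sketch. `rebuild_host` and `bytes_left_to_resync` are hypothetical hooks: the first stands in for the manual per-host procedure above, and the second for the figure shown under Cluster > Monitor > vSAN > Resyncing Objects. No real vSphere API is called.

```python
import time
from typing import Callable, Iterable, List


def rolling_rebuild(
    hosts: Iterable[str],
    rebuild_host: Callable[[str], None],
    bytes_left_to_resync: Callable[[], int],
    poll_seconds: float = 60.0,
    timeout_seconds: float = 6 * 3600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> List[str]:
    """Rebuild hosts one at a time, draining vSAN resync between hosts.

    rebuild_host and bytes_left_to_resync are hypothetical hooks: the first
    stands in for the manual per-host procedure, the second for the data
    still shown under Cluster > Monitor > vSAN > Resyncing Objects.
    """
    finished: List[str] = []
    for host in hosts:
        rebuild_host(host)
        waited = 0.0
        # Never start the next host while vSAN still has data to resync.
        while bytes_left_to_resync() > 0:
            if waited >= timeout_seconds:
                raise TimeoutError(f"vSAN resync still running after {host}")
            sleep(poll_seconds)
            waited += poll_seconds
        finished.append(host)
    return finished
```

Injecting `sleep` keeps the loop testable without real waiting. The timeout turns a stuck resync into an explicit stop instead of a silent move to the next host.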

Other Notes / Thoughts:

Host Profiles: You may be thinking, “Why didn’t he use ESXi Backup/Restore or Host Profiles to simplify this migration instead of doing all these steps?” Actually, I did try both at first, but they didn’t work due to the added/changed PCIe devices and the upgrade of the ESXi OS. Backup/Restore and Host Profiles really prefer that nothing changes in order to work without error. There are adjustments one could make, and I tried them, but in the end I wasn’t able to adapt either tool to the new hosts. They were simply the wrong tool for the first part of this job. However, Host Profiles did work well post-installation, after all the changes were made.

vSAN Erase Partitions (Step 4.8): This step can be optional; it depends on the environment. In fact, I skipped this step on the last host and vSAN imported the disks without issue. Granted, most of my VMs are powered off, which means the vSAN replicas are not changing. In an environment with many powered-on VMs, doing Step 4.8 might be best. Again, it depends on the state of the environment.

If you like my ‘no-nonsense’ videos and blogs that get straight to the point… then post a comment or let me know… Else, I’ll start posting really boring content!

Home Lab Generation 7: Updating the Dell HBA330 firmware without a Dell Server

In this quick video I review how I updated the Dell HBA330 firmware using a Windows 10 PC.

This video was made as a supplement to my 2-part blog post about updating my Home Lab Generation 7.

See:

Firmware >> https://www.dell.com/support/home/en-ng/drivers/driversdetails?driverid=tf1m6

Quick NAS Topics: Changing a Storage Pool from RAID 1 to RAID 5 with the Synology DS1621+

In this not-so-quick NAS topic, I cover how to expand a RAID 1 volume and migrate it to a RAID 5 storage pool on the Synology DS1621+. Along the way, we find a disk with some bad sectors, run an extended test, and then finalize the migration.
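The capacity gain from this kind of migration follows from simple RAID arithmetic. With equal-sized disks, RAID 1 keeps one disk's worth of usable space no matter how many mirrors there are, while RAID 5 gives up one disk's worth to parity. This is a minimal sketch using hypothetical 4 TB disks, since the disk sizes aren't stated here. The capacity Synology reports may be somewhat lower because of system partitions and TB/TiB differences.

```python
def usable_capacity_tb(level: str, disks: int, disk_tb: float) -> float:
    """Usable capacity for equal-sized disks under classic RAID 1 or RAID 5."""
    if level == "RAID1":
        if disks < 2:
            raise ValueError("RAID 1 needs at least 2 disks")
        return disk_tb  # every disk holds a full copy of the data
    if level == "RAID5":
        if disks < 3:
            raise ValueError("RAID 5 needs at least 3 disks")
        return (disks - 1) * disk_tb  # one disk's worth of space holds parity
    raise ValueError(f"unsupported RAID level: {level}")


if __name__ == "__main__":
    # Hypothetical 4 TB disks: a 2-disk mirror versus a 3-disk RAID 5
    print(usable_capacity_tb("RAID1", 2, 4.0))  # 4.0
    print(usable_capacity_tb("RAID5", 3, 4.0))  # 8.0
```

So two 4 TB disks in RAID 1 give 4 TB usable, and adding a third disk and migrating to RAID 5 doubles that to 8 TB while still tolerating one disk failure.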

** Products / Links Seen in this Video **

Synology DiskStation DS1621+ — https://www.synology.com/en-us/products/DS1621+

Home Lab Generation 7: Part 1 – Change Rationale for software and hardware changes

Well, it’s that time of year again: time to deploy new changes and upgrades and add some new hardware. I’ll be updating my ESXi hosts and vCenter Server from 7U2d to the latest vSphere 7 Update 3a. Additionally, I’ll be swapping out the IBM 5210 JBOD for a Dell HBA330+, and lastly I’ll change my boot device to a more reliable, persistent disk. I have 3 x ESXi hosts with vSAN, vDS switches, and NSX-T. If you want to better understand my environment, check out this page on my blog. In this 2-part blog, I’ll go through the steps I took to update my home lab and some of the rationale behind them.

There are two main parts to the blog:

- Part 1 – Change Rationale for software and hardware changes – In this part I’ll explain some of my thoughts on why I’m making these software and hardware changes.

- Part 2 – Installation and Upgrade Steps – These are the high-level steps I took to change and upgrade my Home Lab.

Part 1 – Change Rationale for software and hardware changes:

There are three key changes that I plan to make to my environment:

- One – Update to vSphere 7U3a

- vSphere 7U3 has brought many changes to vSphere, including many needed feature updates to vCenter Server and ESXi. Additionally, there are several important bug fixes and corrections that vSphere 7U3 and 7U3a address. For more information on the updates in vSphere 7U3, please see “vSphere 7 Update 3 – What’s New” by Bob Plankers. For even more information, check out the release notes.

- Part of my rationale for upgrading is to prepare to talk with my customers about the benefits of this update. I always test the latest updates on Workstation first, then migrate those learnings into my Home Lab.

- Two – Change out the IBM 5210 JBOD

- The IBM 5210 JBOD is a carry-over component from my vSphere 6.x vSAN environment. It worked well with vSphere 6.x and 7U1. However, starting in 7U2 it began to exhibit stuck I/O issues and the occasional PSOD. This card was only certified with vSphere/vSAN 6.x, and at some point the cache module became a requirement. My choices at this point were to update this controller with a cache module (~$50 each) and hope it works better, or make a change. In this case I decided to make a change to the Dell HBA330 (~$70 each). The HBA330 is a JBOD controller that Dell pretty much built with VMware for vSAN. It is on the vSphere/vSAN 7U3 HCL and should have a long life there too. Additionally, the HBA330 edge connectors (Mini SAS SFF-8643) line up with my existing SAS break-out cables. When I compared the benefits of the Dell HBA330 to upgrading the IBM 5210 with a cache module, the HBA330 was the clear choice. The trick is finding an HBA330 that is cost-effective and comes with a full-height slot cover. It’s a bit tricky, but you can find them on eBay; you just have to look a bit harder.

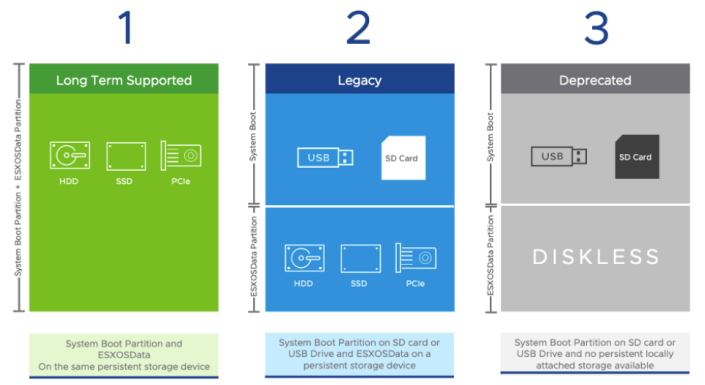

- Three – Change my boot disk

- In September 2021, VMware announced that boot from USB is going to change, and customers were advised to plan ahead for these upcoming changes. My current hosts use cheap SanDisk 64GB USB memory sticks. It’s something I would never recommend for a production environment, but for a Home Lab these worked okay. I originally chose them during my Home Lab Gen 5 updates, as I needed to do testing with USB-booted hosts. Now that VMware has deprecated support for USB/SD devices, it’s time to make a change. Point of clarity: the word deprecated can mean different things to different people. However, in the software industry deprecated means “discourage the use of (something, such as a software product) in favor of a newer or better alternative”. vSphere 7 is in a deprecated mode when it comes to USB/SD-booted hosts: they are still supported, and customers are highly advised to plan ahead. As of this writing, legacy USB hosts (legacy here refers to vSphere.NEXT) will require a persistent disk, and eventually (in “Long Term Supported” mode) USB/SD-booted hosts will no longer be supported. Customers should seek guidance from VMware when making these changes.


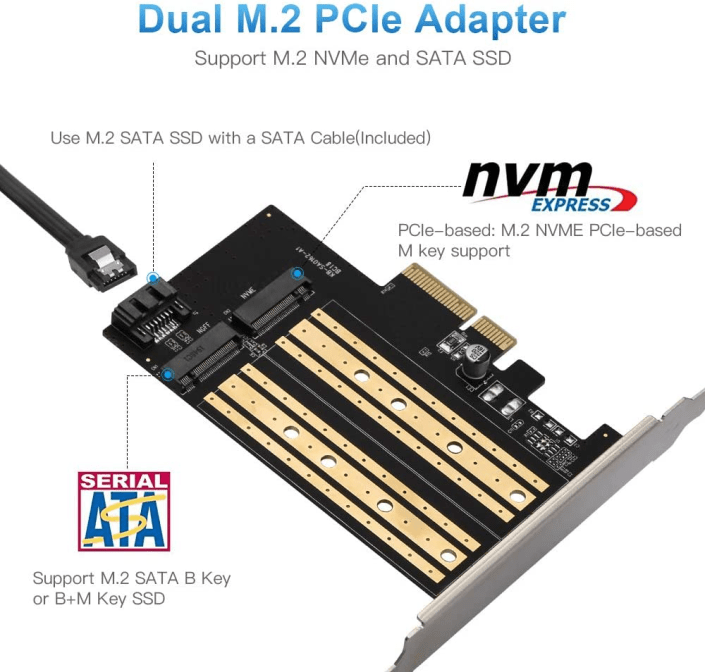

- The requirement for “Long Term Supported” mode is to have the ESXi host booted from an HDD, SSD, or PCIe device. In my case, I didn’t want to add more disks to my system, so I chose to go with a PCIe SSD/NVMe card. I chose this PCIe device, which supports M.2 (SATA SSD) and NVMe devices in one slot, and I decided to go with a Kingston A400 240GB internal M.2 SSD as my boot disk. The A400 with 240GB should be more than enough to boot the ESXi hosts and keep up with their disk demands going forward.
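The boot-device guidance above boils down to a small decision table. This Python sketch encodes it as this post describes it as of this writing. It is not an official VMware policy statement, and the enum and function names are hypothetical.

```python
from enum import Enum


class BootMedia(Enum):
    USB_OR_SD = "usb/sd"
    HDD = "hdd"
    SSD = "ssd"
    PCIE = "pcie"  # e.g. an M.2 SATA or NVMe card in a PCIe slot


def boot_media_status(media: BootMedia, has_persistent_disk: bool = False) -> str:
    """Classify a boot device per the guidance summarised in this post."""
    if media in (BootMedia.HDD, BootMedia.SSD, BootMedia.PCIE):
        return "long-term supported"
    if has_persistent_disk:
        # USB/SD boot still works in this mode but needs a persistent disk alongside
        return "legacy: USB/SD boot plus persistent disk"
    return "deprecated: plan to move off USB/SD boot"


if __name__ == "__main__":
    # The change described above: USB stick replaced by an M.2 SSD on a PCIe card
    print(boot_media_status(BootMedia.USB_OR_SD))
    print(boot_media_status(BootMedia.PCIE))
```

For the hosts in this post, the old USB sticks land in the "deprecated" branch and the PCIe-mounted A400 lands in "long-term supported".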

Final thoughts and an important warning: making changes that affect your current environment is never easy, but it is sometimes necessary. A little planning can make the journey a bit easier. I’ll be testing these changes over the next few months and will post if issues occur. However, a word of warning: adding new devices to an environment can directly impact your ability to migrate or upgrade your hosts. Due to the hardware decisions I have made, a direct ESXi upgrade is not possible, and I’ll have to back out my current hosts from vCenter Server and other software and do a new installation. Those details and more will be in Part 2 – Installation and Upgrade Steps.

Opportunity for vendor improvement: backup vendors like Synology, ASUSTOR, Veeam, Veritas, NAKIVO, and Acronis could really shine here. If they could back up an ESXi host and restore it to dissimilar hardware or boot disks, it would be a huge improvement for VI admins, especially those with tens of thousands of hosts that need to move from USB boot devices to persistent disks. This is not a new ask; VI admins have been asking for this option for years. Maybe now these companies will listen, as many users and their hosts are going to be affected by these upcoming requirements.