Month: December 2020

Possible Security issues with #solarwinds #loggly and #Trojan #BrowserAssistant PS

Lately, I haven’t had much involvement with malware, trojans, and viruses. Recently, however, Norton Family started alerting me to a few websites I didn’t recognize on one of my personal PCs. Norton reported these three sites: loggly.com | pads289.net | sun346.net. I also noticed that all three sites were posting at the same date/time, and the Pads/Sun sites had the same ID number in their URLs (see pic below). This behavior just seemed odd. I didn’t initially recognize any of these sites, but a quick search revealed that loggly.com is a SolarWinds product. My mind started to wander: could this be related to their recent security issues?

Just to be clear, this post isn’t about any current issues with SolarWinds, VMware, or others. These issues were located on my personal network. I’m posting this information because I know many of us are working from home and have kids doing online school, and the last thing we need is a pesky virus slowing things down.

I use Norton Family on all of my personal PCs, and the first thing I did was block the sites on the affected PC and via the Internet firewall.

Next, I started searching the Internet to see what I could find out about these three sites. Checking the URLs against multiple security sites turned up no warnings and no blacklists, whois looked normal, and pretty much nothing was alarming. In fact, I was even running Sophos UTM Home Firewall, and it never alerted on this either. Going directly to these sites resulted in a blank page. Additionally, the PC seemed to run normally, with no popups or redirection of sites. Really, it had no issues at all except that it just kept going to these odd sites.

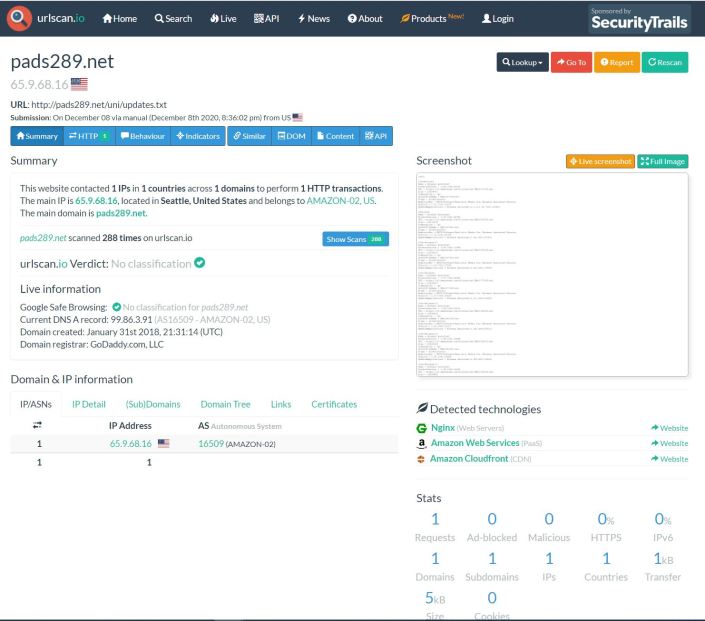
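
If you can get the site-visit history into a simple list, the pattern described above (several unfamiliar sites posting at the same date/time) can be checked for mechanically. Here is a minimal Python sketch of that idea. The log entries, timestamps, and function names are hypothetical and made up for illustration; this is not Norton Family's export format.

```python
from collections import defaultdict


def domains_by_timestamp(visits):
    """Group visited domains by the timestamp at which they were logged."""
    grouped = defaultdict(set)
    for timestamp, domain in visits:
        grouped[timestamp].add(domain)
    return grouped


def simultaneous_groups(visits, min_domains=2):
    """Return timestamps where at least min_domains distinct domains appear."""
    return {
        timestamp: sorted(domains)
        for timestamp, domains in domains_by_timestamp(visits).items()
        if len(domains) >= min_domains
    }


# Hypothetical log entries: (timestamp, domain). The timestamps are invented.
visits = [
    ("2020-12-20 18:05", "loggly.com"),
    ("2020-12-20 18:05", "pads289.net"),
    ("2020-12-20 18:05", "sun346.net"),
    ("2020-12-20 18:07", "example.com"),
]

print(simultaneous_groups(visits))
```

A group of unrelated domains that always shows up at the same minute is not proof of malware, but it is a reasonable prompt to dig further.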

That’s when I found urlscan.io. I pointed it at one of the sites and noticed there were several update.txt files.
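
For repeated lookups, urlscan.io also offers a search API. The sketch below only builds a search URL for a domain and makes no network request. The endpoint path and the `domain:` query syntax reflect my understanding of the public urlscan.io API and should be checked against its documentation; the helper name is hypothetical.

```python
from urllib.parse import urlencode

# Assumed public search endpoint; verify against the urlscan.io API docs.
URLSCAN_SEARCH = "https://urlscan.io/api/v1/search/"


def build_search_url(domain):
    """Build a urlscan.io search URL that looks up scans for one domain."""
    query = urlencode({"q": f"domain:{domain}"})
    return f"{URLSCAN_SEARCH}?{query}"


print(build_search_url("pads289.net"))
```

The resulting URL can be opened in a browser or fetched with any HTTP client.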

When I clicked on update.txt, it brought me to a screen where I could view the text file via the screenshot.

A few things I noticed in the text file were ‘Realistic Media Inc.’, ‘Browser Assistant’, and an installable MSI. These seemed like programs that could be installed on a PC.

Looking at the installed programs on the affected PC, I found a match.
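
The matching step can be sketched in a few lines of Python: take the names seen in update.txt and look for them in a list of installed programs. The installed-program list below is hypothetical, and the function name is my own; how you collect the list (Control Panel, an inventory tool, and so on) is up to you.

```python
def find_matches(installed_programs, indicators):
    """Return installed program names containing any indicator (case-insensitive)."""
    lowered = [indicator.lower() for indicator in indicators]
    return [
        name
        for name in installed_programs
        if any(indicator in name.lower() for indicator in lowered)
    ]


# Names taken from the update.txt file described above.
indicators = ["Browser Assistant", "Realistic Media"]

# Hypothetical list of installed programs, for illustration only.
installed = ["7-Zip 19.00", "Browser Assistant", "Norton Family"]

print(find_matches(installed, indicators))
```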

A quick search, and sure enough lots of hits on this Trojan.

Next, I ran Microsoft Safety Scanner, which removed some of it, and then I uninstalled the ‘Browser Assistant’ program.

Lastly, I sent an email to AWS and SolarWinds asking them to look into this issue.

Within 24 hours, Amazon responded with: “The security concern that you have reported is specific to a customer application and / or how an AWS customer has chosen to use an AWS product or service. To be clear, the security concern you have reported cannot be resolved by AWS but must be addressed by the customer, who may not be aware of or be following our recommended security best practices. We have passed your security concern on to the specific customer for their awareness and potential mitigation.”

Within 24 hours, SolarWinds responded that they are working with me to see if there are any issues with this.

Summary:

This pattern for Trojans or malware/adware probably isn’t new to security folks, but either way, I hope this blog helps you better understand odd behavior on your personal network.

Thanks for reading and please do reach out if you have any questions.

Reference Links / Tools:

If you like my ‘no-nonsense’ videos and blogs that get straight to the point… then post a comment or let me know… Else, I’ll start posting really boring content!

GA Release #VMware #vSphere + #vSAN 7.0 Update 1c/P02 | Announcement, information, and links

Announcing GA Releases of the following

- VMware vSphere 7.0 Update 1c/P02 (Including Tanzu)

- VMware vSAN™ 7.0 Update 1c/P02

Note: The included ESXi patch pertains to the Low severity Security Advisory for VMSA-2020-0029 & CVE-2020-3999

See the base table for all the technical enablement links.

| Release Overview | |
| --- | --- |
| vCenter Server 7.0 Update 1c | ISO Build 1732751 |
| ESXi 7.0 Update 1c | ISO Build 17325551 |

| What’s New vCenter | ||||

|

||||

| What’s New vSphere With Tanzu | ||||

Supervisor Cluster

- Newly created Supervisor Clusters use this new topology automatically.
- Existing Supervisor Clusters are migrated to this new topology during an upgrade.

Tanzu Kubernetes Grid Service for vSphere

Missing new default VM Classes introduced in vSphere 7.0 U1

The selected value must fit the purpose of your system. For example, a system with 1TB of memory must use the minimum of 69 GB for system storage. To set the boot option at install time, for example systemMediaSize=small, refer to Enter Boot Options to Start an Installation or Upgrade Script. For more information, see VMware knowledge base article 81166. |

||||

| VMSA-2020-0029 Information for ESXi | |
| --- | --- |
| VMSA-2020-0029 | Low |
| CVSSv3 Range | 3.3 |
| Issue date: | 12/17/2020 |
| CVE numbers: | CVE-2020-3999 |
| Synopsis: | VMware ESXi, Workstation, Fusion and Cloud Foundation updates address a denial of service vulnerability (CVE-2020-3999) |
| ESXi 7 Patch Info | VMware Patch Release ESXi 7.0 ESXi70U1c-17325551 |

This section derives from our full VMware Security Advisory VMSA-2020-0029 and covers ESXi only. It is accurate at the time of creation, and it is recommended that you reference the full VMSA for expanded or updated information.

| What’s New vSAN | ||||

vSAN 7.0 Update 1c/P02 includes the following summarized fixes as documented within the Resolved Sections for vCenter & ESXi

|

||||

| Technical Enablement | |
| --- | --- |
| Release Notes vCenter | Click Here · What’s New · Patches Contained in this Release · Product Support Notices · Resolved Issues · Known Issues |
| Release Notes ESXi | Click Here · What’s New · Patches Contained in this Release · Product Support Notices · Resolved Issues · Known Issues |
| Release Notes vSAN 7.0 U1 | Click Here · What’s New · VMware vSAN Community · Upgrades for This Release · Limitations · Known Issues |
| Release Notes Tanzu | Click Here · What’s New · Learn About vSphere with Tanzu · Known Issues |
| docs.vmware.com/vSphere | vCenter Server Upgrade · ESXi Upgrade · Upgrading vSAN Cluster · Tanzu Configuration & Management |
| Download | Click Here |
| Compatibility Information | ports.vmware.com/vSphere 7 + vSAN · Configuration Maximums vSphere 7 · Compatibility Matrix · Interoperability |
| VMSA Reference | VMSA-2020-0029 · VMware Patch Release ESXi 7.0 ESXi70U1c-17325551 |
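
For readers wondering how the CVSSv3 score of 3.3 in the VMSA-2020-0029 information lines up with a Low rating, here is a small Python sketch of the qualitative severity scale from the CVSS v3 specification (0.1 to 3.9 Low, 4.0 to 6.9 Medium, 7.0 to 8.9 High, 9.0 to 10.0 Critical). The function name is my own, and the advisory severity itself is assigned by VMware, so treat this only as a rough cross-check.

```python
def cvss_v3_rating(score):
    """Map a CVSS v3 base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS v3 scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


# Score listed for CVE-2020-3999 in the table above.
print(cvss_v3_rating(3.3))
```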

GA Release VMware NSX Data Center for vSphere 6.4.9 | Announcement, information, and links

Announcing GA Releases of the following

- VMware NSX Data Center for vSphere 6.4.9 (See the base table for all the technical enablement links.)

| Release Overview | |
| --- | --- |
| VMware NSX Data Center for vSphere 6.4.9 | Build 17267008 |

NSX for vSphere 6.4 End of General Support was extended to 01/16/2022.

| What’s New |

NSX Data Center for vSphere 6.4.9 adds usability enhancements and addresses a number of specific customer bugs.

|

| Minimum Supported Versions & Deprecated Notes |

| VMware declares minimum supported versions. This content has been simplified; please view the full details in the Versions, System Requirements, and Installation section.

- For vSphere 6.5: Recommended: 6.5 Update 3, Build Number 14020092. VMware Product Interoperability Matrix | NSX-V 6.4.9 & vSphere 6.5
- For vSphere 6.7: Recommended: 6.7 Update 2
- For vSphere 7: Update 1 is now supported

Note: vSphere 6.0 has reached End of General Support and is not supported with NSX 6.4.7 onwards.

Guest Introspection for Windows: It is recommended that you upgrade VMware Tools to 10.3.10 before upgrading NSX for vSphere.

End of Life and End of Support Warnings: For information about NSX and other VMware products that must be upgraded soon, please consult the VMware Lifecycle Product Matrix.

General Behavior Changes: If you have more than one vSphere Distributed Switch, and if VXLAN is configured on one of them, you must connect any Distributed Logical Router interfaces to port groups on that vSphere Distributed Switch. Starting in NSX 6.4.1, this configuration is enforced in the UI and API. In earlier releases, you were not prevented from creating an invalid configuration. If you upgrade to NSX 6.4.1 or later and have incorrectly connected DLR interfaces, you will need to take action to resolve this. See the Upgrade Notes for details.

In NSX 6.4.7, the following functionality is deprecated in vSphere Client 7.0:

For the complete list of NSX installation prerequisites, see the System Requirements for NSX section in the NSX Installation Guide. For installation instructions, see the NSX Installation Guide or the NSX Cross-vCenter Installation Guide. Also refer to the complete Deprecated and Discontinued Functionality section for all deprecated features, API removals, and behavior changes. |

| General Upgrade Considerations |

For more information, notes and considerations for upgrading please see the Upgrade Notes & FIPS Compliance section.

POST https://<nsmanager>/api/2.0/si/service/<service-id>/servicedeploymentspec/versioneddeploymentspec |

| Upgrade Consideration for NSX Components |

Support for VM Hardware version 11 for NSX components

NSX Manager Upgrade

Controller Upgrade

When the controllers are deleted, this also deletes any associated DRS anti-affinity rules. You must create new anti-affinity rules in vCenter to prevent the new controller VMs from residing on the same host. See Upgrade the NSX Controller Cluster for more information on controller upgrades.

Host Cluster Upgrade

NSX Edge Upgrade

PUT /api/4.0/edges/{edgeId} or PUT /api/4.0/edges/{edgeId}/interfaces/{index}. See the NSX API Guide for more information.

To avoid such upgrade failures, perform the following steps before you upgrade an ESG:

The following resource reservations are used by the NSX Manager if you have not explicitly set values at the time of install or upgrade.

    <applicationProfile>
      <name>https-profile</name>
      <insertXForwardedFor>false</insertXForwardedFor>
      <sslPassthrough>false</sslPassthrough>
      <template>HTTPS</template>
      <serverSslEnabled>true</serverSslEnabled>
      <clientSsl>
        <ciphers>AES128-SHA:AES256-SHA:ECDHE-ECDSA-AES256-SHA</ciphers>
        <clientAuth>ignore</clientAuth>
        <serviceCertificate>certificate-4</serviceCertificate>
      </clientSsl>
      <serverSsl>
        <ciphers>AES128-SHA:AES256-SHA:ECDHE-ECDSA-AES256-SHA</ciphers>
        <serviceCertificate>certificate-4</serviceCertificate>
      </serverSsl>
      …
    </applicationProfile>

    {
      "expected" : null,
      "extension" : "ssl-version=10",
      "send" : null,
      "maxRetries" : 2,
      "name" : "sm_vrops",
      "url" : "/suite-api/api/deployment/node/status",
      "timeout" : 5,
      "type" : "https",
      "receive" : null,
      "interval" : 60,
      "method" : "GET"
    }

|

| Technical Enablement | |
| --- | --- |
| Release Notes | Click Here · What’s New · Versions, System Requirements, and Installation · Deprecated and Discontinued Functionality · Upgrade Notes · FIPS Compliance · Resolved Issues · Known Issues |
| docs.vmware.com/nsx-v | Installation · Cross-vCenter Installation · Administration · Upgrade · Troubleshooting · Logging & System Events · API Guide · vSphere CLI Guide · vSphere Configuration Maximums |
| Networking Documentation | Transport Zones · Logical Switches · Configuring Hardware Gateway · L2 Bridges · Routing · Logical Firewall · Firewall Scenarios · Identity Firewall Overview · Working with Active Directory Domains · Using SpoofGuard · Virtual Private Networks (VPN) · Logical Load Balancer · Other Edge Services |
| Compatibility Information | Interoperability Matrix · Configuration Maximums · ports.vmware.com/NSX-V |
| Download | Click Here |
| VMware HOLs | HOL-2103-01-NET – VMware NSX for vSphere Advanced Topics |