ESX

Free Instructional Video: vCloud Director Concepts and Architecture

The following URL will take you to 13 recorded sessions. They deliver an overview of VMware vCloud Director concepts and architecture, installation, creating Provider Resources, creating Organizations, creating and populating Catalogs, building a vApp, creating vShield Edge Firewall Rules, creating site-to-site VPNs, and more. Enjoy!

http://mylearn.vmware.com/mgrReg/plan.cfm?plan=36740&ui=www_edu

Home Lab – VMware ESXi 5.1 with iSCSI and freeNAS

Recently I updated my home lab with a freeNAS server (post here). In this post, I will cover my iSCSI setup with freeNAS and ESXi 5.1.

Keep this in mind when reading – this post is about my home lab. My home lab is not a high-performance production environment; its intent is to allow me to test and validate virtualization software. You might question some of the choices I have made here, but I’ve made them because they fit my environment and its intent.

Overall Hardware…

Click on these links for more information on my lab setup…

- ESXi Hosts – 2 x ESXi 5.1, Intel Core i7, USB Boot, 32GB RAM, 5 x NICs

- freeNAS SAN – freeNAS 8.3.0, 5 x 2TB SATA III, 8GB RAM, Zotac M880G-ITX Mobo

- Networking – Netgear GSM7324 with several VLANs and routing set up

Here are the overall goals…

- Set up an iSCSI connection from my ESXi Hosts to my freeNAS server

- Use the SYBA Dual NIC to make balanced connections to my freeNAS server

- Enable Balancing or teaming where I can

- Support a CIFS Connection

Here is basic setup…

freeNAS Settings

Create 3 networks on separate VLANs – 1 for CIFS and 2 for iSCSI (no need for freeNAS teaming)
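Before configuring the interfaces, it can help to write the three networks down and check that they do not overlap. This is only a minimal sketch: the subnets and the network names below are placeholders I made up for illustration, not the addresses used in this lab.

```python
import ipaddress
from itertools import combinations

# Hypothetical addressing plan: names and subnets are placeholders.
NETWORKS = {
    "CIFS": ipaddress.ip_network("192.168.71.0/24"),
    "iSCSI-A": ipaddress.ip_network("192.168.72.0/24"),
    "iSCSI-B": ipaddress.ip_network("192.168.73.0/24"),
}


def overlapping_pairs(networks):
    """Return the name pairs whose subnets overlap (ideally an empty list)."""
    return [
        (name_a, name_b)
        for (name_a, net_a), (name_b, net_b) in combinations(networks.items(), 2)
        if net_a.overlaps(net_b)
    ]


if __name__ == "__main__":
    print(overlapping_pairs(NETWORKS) or "no overlaps")
```

If the list comes back non-empty, two of the planned networks share address space and should be fixed before any VLANs are created.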

CIFS

The CIFS settings are simple. I followed the freeNAS guide and set up a CIFS share.

iSCSI

Create 2 x iSCSI LUNs, 500GB each

Set up the basic iSCSI settings under “Servers > iSCSI”

- I used this doc to help with the iSCSI setup

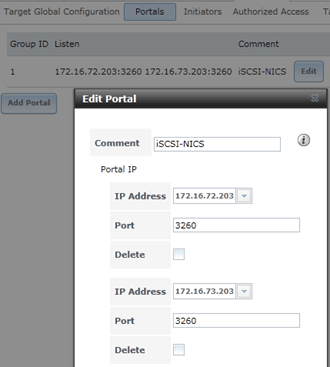

- The only exception is – Enable both of the iSCSI network adapters in the “Portals” area

ESXi Settings

Set up your iSCSI vSwitch and attach two dedicated NICs

Set up two VMkernel ports for iSCSI connections

Ensure that the first VMkernel port group (iSCSI72) goes to ONLY vmnic0, and likewise that iSCSI73 goes to ONLY the second dedicated NIC

Enable the iSCSI LUNs by following the standard VMware instructions

Note – Ensure you bind BOTH iSCSI VMKernel Ports

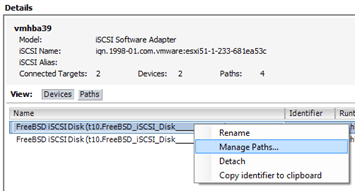
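The binding step can also be done from the ESXi shell with `esxcli iscsi networkportal add`. Here is a minimal sketch that only builds the command lines and does not run anything. The adapter name `vmhba33` and the VMkernel ports `vmk1` and `vmk2` are assumptions, so check the names on your own host first.

```python
def binding_commands(adapter, vmk_ports):
    """Build one 'esxcli iscsi networkportal add' command per VMkernel port."""
    if len(set(vmk_ports)) != len(vmk_ports):
        raise ValueError("each VMkernel port should be listed only once")
    return [
        f"esxcli iscsi networkportal add --adapter={adapter} --nic={vmk}"
        for vmk in vmk_ports
    ]


if __name__ == "__main__":
    # Placeholder names: replace with your software iSCSI adapter and vmk ports.
    for command in binding_commands("vmhba33", ["vmk1", "vmk2"]):
        print(command)
```

Printing the commands first makes it easy to confirm that BOTH ports are in the list before anything touches the host.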

Once you have your connectivity working, it’s time to set up round robin for path management.

Right click on one of the LUNS, choose ‘Manage Paths…’

Change the path selection on both the LUNS to ‘Round Robin’

Tip – If you change your iSCSI settings later, check your path selection again, as it may revert to the default
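Since the path policy can revert, a small helper for re-checking it may be handy. This sketch assumes you have already collected each LUN's current path selection policy (for example from `esxcli storage nmp device list`). It reports which devices are not on round robin and builds the `esxcli storage nmp device set` commands to fix them. The device IDs shown are made-up placeholders.

```python
ROUND_ROBIN = "VMW_PSP_RR"  # name of VMware's round robin path selection plugin


def devices_needing_fix(psp_by_device):
    """Return the device IDs whose policy is not round robin, sorted."""
    return sorted(dev for dev, psp in psp_by_device.items() if psp != ROUND_ROBIN)


def round_robin_commands(device_ids):
    """Build the esxcli command that sets round robin on each device."""
    return [
        f"esxcli storage nmp device set --device={dev} --psp={ROUND_ROBIN}"
        for dev in device_ids
    ]


if __name__ == "__main__":
    # Placeholder device IDs and policies, for illustration only.
    current = {"naa.EXAMPLE0001": "VMW_PSP_RR", "naa.EXAMPLE0002": "VMW_PSP_MRU"}
    for command in round_robin_commands(devices_needing_fix(current)):
        print(command)
```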

Notes and other Thoughts…

Browser Cache Issues — I had issues with freeNAS updating information on their web interface, even after reboots of the NAS and my PC. I moved to Firefox and all issues went away. I then cleared my cache in IE and these issues were gone.

Jumbo Frames – Can I use Jumbo Frames with the SYBA Dual NIC SY-PEX24028? The short answer is NO; I was unable to get them to work in ESXi 5.1. SYBA Tech support stated that the maximum jumbo frame size for this card is 7168 and that it supports Windows OSes only. I could get ESXi to accept a 4096 frame size but nothing larger. However, with it enabled none of the LUNs would connect; once I moved the frame size back to 1500, everything worked perfectly. I beat this up pretty hard, adjusting all types of ESXi, networking, and freeNAS settings, but in the end I decided the 7% boost that Jumbo frames offer wasn’t worth the time or effort.
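For a rough feel of what a larger frame size buys, here is a back-of-the-envelope sketch. It only counts standard Ethernet framing plus IPv4 and TCP headers without options, and it ignores VLAN tags, iSCSI headers, and CPU/interrupt savings, so it is an estimate of on-wire efficiency only and will not match measured throughput gains.

```python
ETHERNET_OVERHEAD = 38  # bytes: preamble+SFD 8, header 14, FCS 4, inter-frame gap 12
IP_TCP_HEADERS = 40     # bytes: IPv4 20 + TCP 20, assuming no options


def wire_efficiency(mtu):
    """Fraction of on-wire bytes that carry TCP payload at a given MTU."""
    return (mtu - IP_TCP_HEADERS) / (mtu + ETHERNET_OVERHEAD)


def gain_percent(small_mtu, large_mtu):
    """Relative payload-efficiency gain of large_mtu over small_mtu, in percent."""
    return (wire_efficiency(large_mtu) / wire_efficiency(small_mtu) - 1) * 100


if __name__ == "__main__":
    for mtu in (4096, 7168, 9000):
        print(f"MTU {mtu}: {gain_percent(1500, mtu):.1f}% over MTU 1500")
```

By this simple model the header savings alone are modest, which fits the decision above to stay at 1500.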

Summary…

These settings will enable my 2 ESXi Hosts to balance their connections to my iSCSI LUNs hosted by the freeNAS server without the use of freeNAS network teaming or aggregation. It is by far the simplest setup, and the out-of-the-box performance is good.

My advice is – go simple with these settings for your home lab and save your time to beat up more important issues like “how do I shut down Windows 8” :)

I hope you found this post useful and if you have further questions or comments feel free to post up or reach out to me.

Great vSphere 5.1 Upgrades KB’s & Articles

A few of my fellow TAM’s put together this list of great KB’s / Articles that may help you in the process of upgrading to vSphere 5.1 – Enjoy!

vCenter 5.1:

vSphere 5.1 Misc:

Single Sign On Specific:

Home Lab – freeNAS build with LIAN LI PC-Q25, and Zotac M880G-ITX

I’ve decided to repurpose my IOMega IX4 and build out a freeNAS server for my ever-growing home lab. In this blog post I’m not going to get into the reasons why I chose freeNAS (trust me, I ran through a lot of open source NAS software); instead I’ll focus on the actual hardware build of the NAS device.

Here are the hardware components I chose to build my freeNAS box with…

- LIAN LI PC-Q25 Case – NewEgg ~$120, it goes on sale from time to time…

- Cooler Master 500W PS – ValleySeek ~$34, on sale

- Zotac M880G-ITX – Valleyseek ~$203 (Update 10/07/2013: this Mobo had BIOS issues with detecting HDDs, so I moved to an ASRock FM2A85X-ITX)

- SYBA Dual NIC SY-PEX24028 – NewEgg ~$37

- 8GB Corsair RAM – I already owned this, bought at Fry’s in a 16GB kit for $49

- 5 x Seagate ST2000DM001 2TB SATA III – Superbiiz ~$89, on sale with free shipping

- 1 x Corsair 60GB SSD SATA III – I already owned this, bought at Fry’s for ~$69

Tip – Watch for sales on all these items, the prices go up and down daily…

Factors in choosing this hardware…

- Case – the Lian LI case supports 7 Hard disks (5 being hotswap) in a small and very quiet case, Need I say more…

- Power supply – Usually I go with an Antec power supply, however this time I’m tight on budget so I went with a Cooler Master 80PLUS rated power supply

- Motherboard – The case and the NAS software I chose really drove the Mobo selection. I played with a bunch of open source NAS software on VMs, and once I had settled on the case and freeNAS it was as simple as finding a board that fit both. There were 2 features I was keen on – 1) 6 SATA III ports (to support all the hard disks), 2) a PCIe x1 slot (to support the dual port NIC). Note – I removed the onboard wireless NIC and the antenna, no need for them on this NAS device

- NIC – I have used the SYBA Dual NIC in both of my ESXi hosts; they run on the Realtek 8111e chipset and have served me well. The Mobo I chose has the same chipset, so they should integrate well into my environment.

- RAM – 8GB of RAM. I will have ~7TB of usable space with freeNAS, and the general rule of thumb is 1GB of RAM per 1TB of storage, so 8GB should be enough.

- Hard Disks – I chose the hard disks mainly on price, speed, and size. These hard disks are NOT rated above RAID 1, however I believe they will serve my needs. If you are looking for HIGH performance and duty cycle HDs then go with an enterprise class SAS or SATA disk.

- SSD – I’ll use this for cache setup with freeNAS, I just wanted it to be SATA III
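The RAM rule of thumb above is easy to sanity-check with a little arithmetic. This is a rough sketch under one big assumption that the post does not state: that one of the five disks' worth of capacity goes to parity. It also ignores filesystem overhead, so treat the numbers as ballpark only.

```python
import math

TB = 10**12   # drive vendors count in decimal terabytes
TIB = 2**40   # operating systems usually report binary tebibytes


def usable_tib(disk_count, disk_tb, parity_disks=1):
    """Approximate usable capacity in TiB after setting aside parity disks."""
    data_disks = disk_count - parity_disks
    if data_disks < 1:
        raise ValueError("need at least one data disk")
    return data_disks * disk_tb * TB / TIB


def recommended_ram_gb(usable, gb_per_tb=1.0):
    """Apply the 1GB of RAM per 1TB of storage rule of thumb, rounded up."""
    return math.ceil(usable * gb_per_tb)


if __name__ == "__main__":
    capacity = usable_tib(disk_count=5, disk_tb=2, parity_disks=1)  # assumed layout
    print(f"~{capacity:.1f} TiB usable, suggests {recommended_ram_gb(capacity)}GB RAM")
```

With 5 x 2TB disks and single parity, the estimate lands a little over 7 TiB, which lines up with the ~7TB and 8GB figures above.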

Install Issues and PIC’s

What went well…

- Hard disk installs into case went well

- Mobo came up without issue

- freeNAS 8.3.xx installed without issue

Minor Issues….

- Had to modify (actually drill out) the mounting plate on the LIAN LI case to fit the Cooler Master Power supply

- LIAN LI Mobo Mount points were off about a quarter inch, this leaves a gap when installing the NIC card

- LIAN LI case is tight in areas where the Mobo power supply edge connector meets the hard disk tray

PICS…

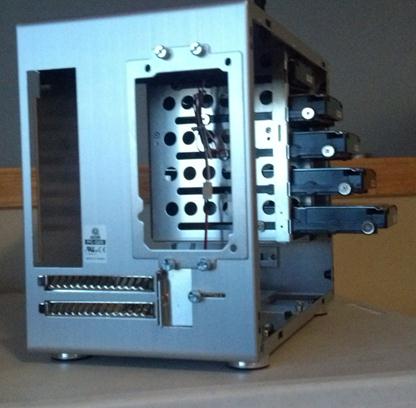

LIAN LI Case

5 Seagate HD’s installed…

Rear view…

Side Panel…

Zotac Mobo with RAM

Removal of the Wireless NIC….

Zotac Mobo installed in case with dual NIC…

Everything Mounted (Except for the SSD)….

Home Lab – More updates to my design

Most recently I posted about adding a Layer 3 switch to my growing home lab. The Netgear Layer 3 switch I added (GSM7324) is performing quite well. In fact it’s quite zippy compared to my older switches, and for the price it was worth it. However, my ever-growing home lab is having some growing pains, 2 to be exact.

In this post I’ll outline the issues, the solutions I’ve chosen, and my new direction for my home lab.

The issues…

Initially my thoughts were I could use my single ESXi Host and Workstation with specific VM’s to do most of my lab needs.

There were two issues I ran into: 1 – Workstation doesn’t support VLANs, and 2 – my trusty IOMega IX4 wasn’t performing very well.

Issue 1 – Workstation VLANs

Plain and simple Workstation doesn’t support VLANs and working with one ESXi Host is prohibiting me from fully using my lab and switch.

Issue 2 – IOMega IX4 Performance

My IOMega IX4 has been a very reliable appliance and it has done its job quite well.

However when I put any type of load on it (More than One or Two VM’s booting) its performance becomes a bit intolerable.

The Solutions…

Issue 1 – Workstation VLANs

I plan to still use Workstation for testing of newer ESXi platforms and various software components

I will install a second ESXi host similar to the one I built earlier this year, except that both hosts will have 32GB of RAM.

The second Host will allow me to test more advanced software and develop my home lab further.

Issue 2 – IOMega IX4 Performance

I’ve decided to separate my personal data from my home lab data.

I will use my IX4 for personal needs and build a new NAS for my home lab.

A New Direction…

My intent is to build out a second ESXi Physical Host and ~9TB FreeNAS server so that I can support a vCloud Director lab environment.

vCD will enable me to spin up multiple test labs and continue to do the testing that I need.

So that’s it for now… I’m off to build my second host and my freeNAS server…

VMware Guest Operating System Installation Guide gets an Online Facelift

At some point in your VMware administrator career you discover you need information around the correct settings to deploy a VM properly.

You find that you need to answer questions like –

What is the supported network adapter for my Guest OS?

Are Paravirtualization adapters supported for my Guest OS?

Can I do Hot memory add?

A few years ago the default standard was the Guest Operating System Installation Guide.

It gave you all the information you needed to set up the virtual hardware or confirm what the recommended virtual hardware should be for each OS type.

Recently the compatibility and OS installation guides have come online and they can lead you to best practices around settings and KB’s too.

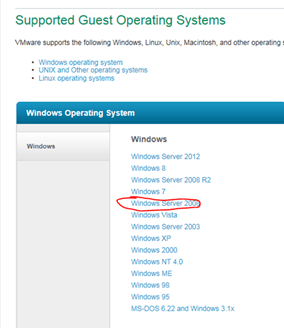

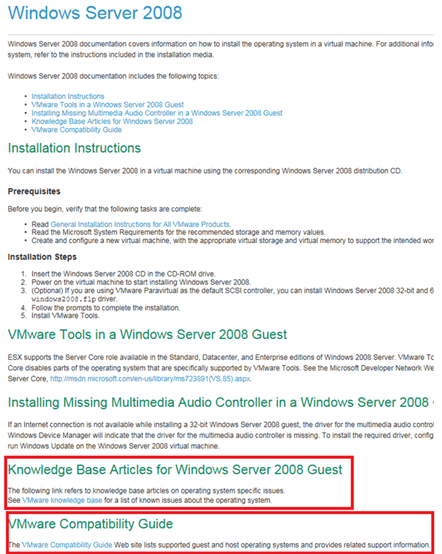

In this blog post I’m going to step you through how to find basic information around a Windows 2008 server.

Start here – http://partnerweb.vmware.com/GOSIG/home.html#other

This link will take you to the Guest Operating System Installation Guide.

Select your OS – in this case I chose Windows 2008 Server

Here are the base install instructions for the Guest OS, note at the bottom the KB Articles and Guest OS Compatibility Guide.

The Guest OS Compatibility Guide can tell you which network drivers, etc. are supported for the guest OS.

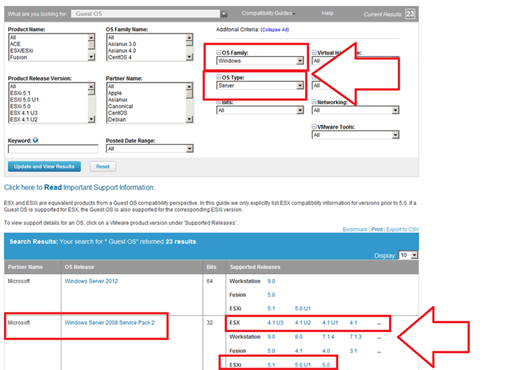

Click here to go to the VMware Compatibility Guide, Select your OS Family, OS Type and choose Update & Review…

Then select your ESX / ESXi version to see the details…

Here are the results… Also from this page you can choose a different product like ESXi 5.0U1 or other…

Home Lab – Adding a Layer 3 Switch to my growing Home Lab

Most recently I expanded my home lab to include a Layer 3 switch.

Why would I choose a Layer 3 switch and what/how would I use it is the topic of this blog post.

Here are my requirements for my home lab –

I would like to set up my home network to support multiple VLANs and control how they route.

This will enable me to control the network traffic and segment my network to allow for different types of testing.

I’d also like to run all of these VMs on Workstation 9 and ESXi hosts, and to support remote access.

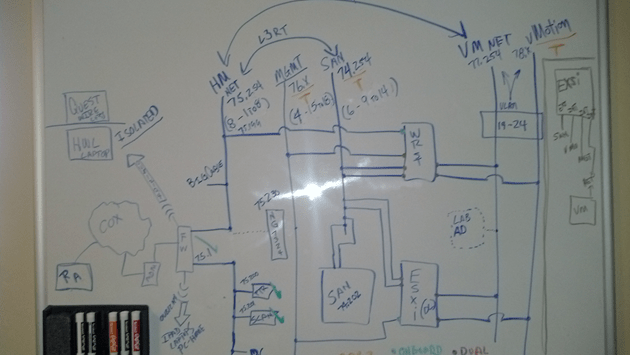

First thing I did was come up with a drawing of what I wanted. It included all my wants and needs…

This was my chance to brainstorm a bit, and I just wrote down everything I wanted or really needed.

From this drawing I came up with this list…

- Support Remote Access

- VLANS

- VLAN Routing

- VLAN Tagging

- ESXi Host with 5 NICs

- Workstation 9 Host with 5 NICs

- Support 5 Different VLANs

- Support Internet Access for VM’s

- Local Storage support for home files

- Printer / Scanner need to be on the network

- I’m going to need a switch with 24 Ports or better

- Design the network so that I can power down the test lab and allow home devices to print and access the Inet.

Second thing – What do I currently have to work with…

- Windows 7 x64, Workstation 9, 32GB RAM, Intel Core i7, 2 x SATA3 2TB 6Gb/s HD, 2 x SATA3 SSD (60 & 120), 1 NIC

- IO Mega IX4 with Dual NICs

- Older Netgear 16 Port Gig Layer 2 Switch unmanaged

- Netgear N900 with Guest Network Support

Based on these lists I came up with my shopping list…

- I need a Layer 3 Switch to support all this

- I need some multiport Gigabit NICs

Let’s start with the switch…Here is what I looked for in a Switch –

Must Have –

- Layer 3 Routing

- VLANs

- VLAN Routing

- Managed

- Quiet – It is a must for home networking as I work from home and am frequently on calls.

- Cost effective – keep it below a few hundred

Nice to have –

- Quality Brand

- Support

- Community behind the product

- Non-Blocking Switch

- OSPF or RIP

Basically most good Layer 3 switches meet the first four must-have requirements. However, these switches usually run in a data center or networking closet and are quite loud.

I did some looking around for different switches, mostly used Cisco and Extreme Networks. These are switches that I am familiar with and would fit my home lab. However, I’ve seen my share of their innards, and I know their fans are loud and cannot easily be replaced. When I was at VMworld 2012 I chatted quite a bit with the Netgear folks about their products and how they fit SMB to Enterprise quite nicely. I started to look on eBay and found an affordable Netgear switch. I did some research online and found how others were modifying the fans to help them run more quietly.

My choice was the Netgear GSM7324. It is a 24 Port Layer 3 Managed Switch from 2008. It meets all my must have needs and it fulfilled all of the “Nice To haves”

I also bought the following to support this switch –

Startech Null Modem DB9 to USB to run the CLI on the Switch

Sunon MagLev HA40201V4-0000-C99, 40x20mm,Super Silent FAN for $10 apiece, they fit perfectly and they run the switch at a tolerable noise level

TIP – And this is important… I had to move the PIN outs on these fans to meet the PIN outs on the Switch. If I didn’t it could have damaged the switch…

Next I started looking for Multi-Port Gigabit NICS…

What do I have to work with?

I’m using the Gigabyte Z68XP-UD3 for Workstation 9 and MSI Z68MA-G45B3 for my ESXi 5.x Host.

What are the Must haves for the NIC’s?

- Dual Gigabit

- VLAN support

- Jumbo Frames

- Support for ESXi and Windows 7×64

- I need about 4 of these cards

I chose the SYBA SY-PEX24028. It’s a dual NIC Gigabit card that meets my requirements. I found it for $39 on Newegg.

Tip – When choosing a network card I needed to ensure the card will fit into my motherboards, not all x1 PCIe slots are the same and when looking at Dual Gigabit NICs most only work in server class hardware.

Summary –

I achieved what I was looking to accomplish, and with some good design work I should have a top notch home lab. All in all I spent about $400 to upgrade my home lab, which is not a bad deal considering most Layer 3 switches cost $400+. All my toys have now come in and I’m off to rework my lab…. But that, my friend, is a different blog post…

Thank you Computer Gods for your divine intervention and BIOS Settings

I’ve been in IT for over 20 years now and in my time I’ve seen some crazy stuff like –

- Grass growing in a Unisys Green Screen terminal that was sent in for repair by a Lumber yard

- A Disney Goofy screen saver on an IBM PS/2 running OS/2 that kept bringing down Token Ring every time it went into screen saver mode.

But this, my friend, is one of the weirder issues I’ve come across….

This all started last March 2012. I bought some more RAM and a pair of 2TB Hitachi HD’s for my Workstation 8 PC. I needed to expand my system and Newegg had a great deal on them. I imaged up my existing Windows 7 OS and pushed it down to the new HD. When the system booted I noticed that it was running very slow. I figured this to be an issue with the image process, so I decided to install Windows 7 from scratch, but I ran into various installation issues and slowness problems. I put my old Samsung HD back in my system and it booted fine. When I plugged in the new Hitachi HD as a second HD via SATA or USB the problems started again: decreased performance, programs not loading, and choppy video. I repeated these same steps with the 2nd Hitachi HD that I bought and it had the same issues.

A bit perplexed at this point, I figure I have a pair of bad HD’s or bad HD BIOS. Newegg would not take back the HD’s, so I start working with Hitachi. I try an HD firmware update, I RMA both HD’s, and I still have the same issue. Hitachi sends me a different, slower model of HD and it works fine. So now I know there is something up with this model of HD.

I start working with Gigabyte – same deal as Hitachi: BIOS update, RMA for a new system board revision (now I’m at Rev 1.3), and I still have the same issue. I send an HD to Gigabyte in California and they cannot reproduce the problem. I’ll spare you all the details, but trust me, I try every combination I can think of. At this point I’ve been at this for 5 months, I still cannot use my new HD, and then I discover the following – I put a PCI (not PCIe) VGA video card into my system and it works. Then it hits me – “I wonder if this is some weird HDMI Video HD conflict problem”

I asked Gigabyte if disabling onboard HDMI video might help. They were unsure, but I tried it anyway and sure enough I found the solution!

It felt like the computer gods had finally shone down on me from above and performed a PC miracle – hallelujah. 5 plus months of troubleshooting and I finally have a solution.

Here are the overall symptoms….

Observation 1) With Windows 7 x64 Enterprise or Professional, the installer fails to load or fully complete, OR the installation does complete but mouse movements are choppy, and then the system locks up or will not boot.

Observation 2) When I boot from a different drive and attach the new Hitachi HD to the booted system via USB, the PC starts to exhibit performance issues.

Here is what I found out….

The combination of the following products results in these symptoms. Change any one out and it works!

1 x Gigabyte Z68XP-UD3 (Rev 1.0 and 1.3)

1 x Hitachi GST Deskstar 5K3000 HDS5C3020ALA632

1 x PCIe Video Card with HDMI Output (I tried the following card with the same Results – ZOTAC ZT-40604-10L GeForce GT 430 and EVGA – GeForce GT 610)

Here is the solution to making them work together….

In the system board BIOS, under Advanced BIOS Settings – Change On Board VGA to ‘Enable if No Ext PEG’

This simple setting disabled the on board HDMI Video and resolved the conflicts with the products not working together.

Summary….

I got to meet some really talented engineers at Hitachi and Gigabyte. All were friendly and worked with me to solve my issue. One person, Danny from Gigabyte, was the most responsive and talented Mobo engineer I’ve met. Even though in the end I found my own solution, I wouldn’t have made it there without some of their expert guidance!

ESXi Q&A Boot Options – USB, SD, & HD

Here are some of my notes around boot options for ESXi.

The post covers a lot of information especially around booting to SD or USB.

Enjoy!

What are the Options to install ESXi?

- Interactive ESXi Installation

- Scripted ESXi Installation

- vSphere Auto Deploy ESXi Installation Option – vSphere 5 Only

- Customizing Installations with ESXi Image Builder CLI – vSphere 5 Only

What are the boot media options for ESXi Installs?

The following boot media are supported for the ESXi installer:

- Boot from a CD/DVD

- Boot from a USB flash drive.

- PXE boot from the network (see ‘PXE Booting the ESXi Installer’).

- Boot from a remote location using a remote management application.

What are the acceptable targets to install/boot ESXi to and are there any dependencies?

ESXi 5.0 supports installing on and booting from the following storage systems:

- SATA disk drives – SATA disk drives connected behind supported SAS controllers or supported on-board SATA controllers.

- Note – ESXi does not support using local, internal SATA drives on the host server to create VMFS datastores that are shared across multiple ESXi hosts.

- Serial Attached SCSI (SAS) disk drives. Supported for installing ESXi 5.0 and for storing virtual machines on VMFS partitions.

- Dedicated SAN disk on Fibre Channel or iSCSI

- USB devices. Supported for installing ESXi 5.0. For a list of supported USB devices, see the VMware Compatibility Guide at http://www.vmware.com/resources/compatibility.

Storage Requirements for ESXi 5.0 Installation

- Installing ESXi 5.0 requires a boot device that is a minimum of 1GB in size.

- When booting from a local disk or SAN/iSCSI LUN, a 5.2GB disk is required to allow for the creation of the VMFS volume and a 4GB scratch partition on the boot device.

- If a smaller disk or LUN is used, the installer will attempt to allocate a scratch region on a separate local disk.

- If a local disk cannot be found the scratch partition, /scratch, will be located on the ESXi host ramdisk, linked to /tmp/scratch.

- You can reconfigure /scratch to use a separate disk or LUN. For best performance and memory optimization, VMware recommends that you do not leave /scratch on the ESXi host ramdisk.

- To reconfigure /scratch, see Set the Scratch Partition from the vSphere Client.

- Due to the I/O sensitivity of USB and SD devices the installer does not create a scratch partition on these devices. As such, there is no tangible benefit to using large USB/SD devices as ESXi uses only the first 1GB.

- When installing on USB or SD devices, the installer attempts to allocate a scratch region on an available local disk or datastore.

- If no local disk or datastore is found, /scratch is placed on the ramdisk. You should reconfigure /scratch to use a persistent datastore following the installation.
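The scratch placement rules above can be summarized as a small decision function. This is just my reading of the bullets, sketched in code; the function name, the device labels, and the return strings are made up for illustration and are not anything ESXi itself exposes.

```python
MIN_BOOT_GB = 1.0         # minimum boot device size from the list above
SCRATCH_ON_BOOT_GB = 5.2  # disk/LUN size needed for VMFS plus a 4GB scratch partition


def scratch_location(boot_device, size_gb, has_local_disk=False, has_datastore=False):
    """Guess where /scratch ends up after install, following the rules above.

    boot_device is one of "disk", "san", "usb", "sd" (labels are hypothetical).
    """
    if size_gb < MIN_BOOT_GB:
        raise ValueError("boot device must be at least 1GB")
    removable = boot_device in ("usb", "sd")
    # Disks and SAN LUNs that are big enough hold scratch themselves.
    if not removable and size_gb >= SCRATCH_ON_BOOT_GB:
        return "boot device"
    # Otherwise the installer looks for a separate local disk.
    if has_local_disk:
        return "separate local disk"
    # USB/SD installs may also use an available datastore.
    if removable and has_datastore:
        return "datastore"
    # Last resort: ramdisk, which should be reconfigured after install.
    return "ramdisk (/tmp/scratch)"


if __name__ == "__main__":
    print(scratch_location("usb", 1))
    print(scratch_location("disk", 8))
```

Any result that ends in the ramdisk is the cue to reconfigure /scratch to a persistent datastore after the install.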

10 Great things to know about Booting ESXi from USB – http://blogs.vmware.com/esxi/2011/09/booting-esxi-off-usbsd.html <<< This is worth a read should clear up a LOT of questions….

How do we update a USB Boot Key?

It follows the same procedure as any other install or upgrade; to the infrastructure it behaves the same.

Can an ESXi Host access USB devices, i.e., can an external USB hard disk be connected directly to the ESXi Host for copying data?

- Yes this can be done, see the KB below – ‘Accessing USB storage and other USB devices from the service console’

- However the technology that supports USB device pass-through from an ESX/ESXi host to a virtual machine does not support simultaneous USB device connections from USB pass-through and from the service console.

- This means the host is in either Pass Through (to the VM) or service console mode.

References –

vSphere 5 Documentation Center (Mainly Under ‘vSphere Installation and Setup’)

Installing ESXi Installable onto a USB drive or SD flash card

USB support for ESX/ESXi 4.1 and ESXi 5.0

VMware support for USB/SD devices used for installing VMware ESXi

Installing ESXi 5.0 on a supported USB flash drive or SD flash card

Accessing USB storage and other USB devices from the service console

Whitebox ESXi 5.x Diskless install

I wanted to build a simple diskless ESXi 5.x server that I could use as an extension to my Workstation 8 lab.

Here’s the build I completed today….

- Antec Sonata Gen I Case (Own, Buy for ~$59)

- Antec Earth Watts 650 PS (Own, Buy for ~$70)

- MSI Z68MA-G45(B3) Rev 3.0 AKA MS-7676 (currently $59 at Fry’s)

- Intel i7-2600 CPU LGA 1155 (Own, Buy for ~$300)

- 16GB DDR3-1600 Corsair RAM (Own, Buy for ~$80)

- Intel PCIe NIC (Own, Buy for ~$20)

- Super Deluxe VMware 1GB USB Stick (Free!)

- Classy VMware Sticker on front (Free)

Total Build Cost New — $590

My total cost, as I already owned the hardware – $60 :)

ESXi Installation –

- Installed ESXi 5.0 via USB CD ROM to the VMware 1GB USB Stick

- No install issues

- All NIC’s and video recognized

- It’s a very quiet running system that I can use as an extension from my Workstation 8 Home lab…

Front View with Nice VMware Sticker!

Rear View with 1GB VMware USB Stick

System Board with CPU, RAM and NIC – Look Mom no Hard Disks!

Model Detail on the MSI System board, ESXi reports the Mobo as a MS-7676