ESXi

vSphere 5.x Licensing Matrix

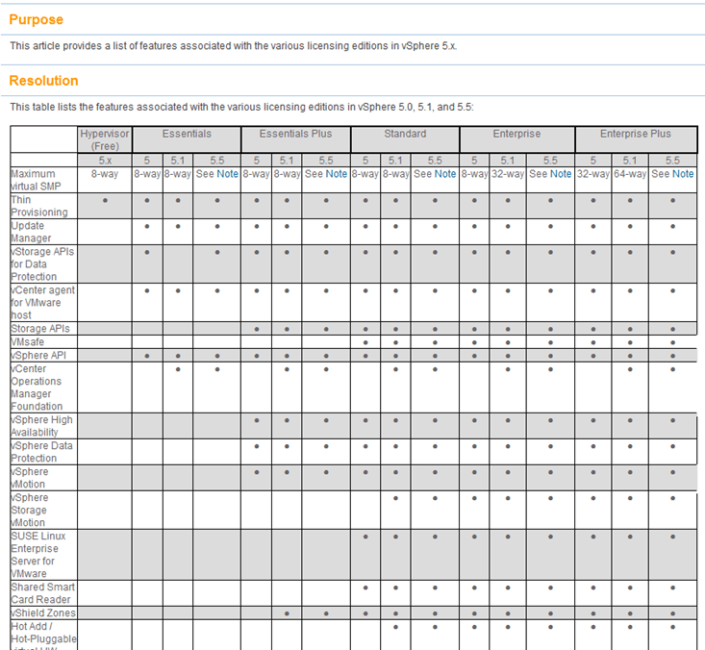

Ever want to compare a full list of the vSphere features to the associated licensing level?

Well now you can… Check out >> http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=2001113

Here is a quick snapshot of the matrix. Enjoy!

Top Blogger Voting for 2014 is now open

For many years now Eric Siebert has held voting for the top Virtualization Bloggers. This is no easy task, and this year he has over 300 blogs listed.

If you found any of my blog posts useful, then please, do take a few minutes out of your busy schedule and vote. Every vote really does count, and the higher you rate a blog the more weight it gets in the final tally. Ratings do make a difference, and feedback is great. As any blogger will tell you, frequently writing quality content takes a lot of time.

Please, take a few minutes out of your day and vote. I’ll be voting for bloggers myself, including Michael Webster and Jason Boche.

Voting is now open, and will continue through 3/17/2014 >> http://vsphere-land.com/news/voting-now-open-for-the-2014-top-vmware-virtualization-blogs.html

Enjoy!

Whitepaper – Security of the VMware vSphere Hypervisor

Folks, recently released is the Security of the VMware vSphere Hypervisor whitepaper.

http://www.vmware.com/files/pdf/techpaper/vmw-wp-secrty-vsphr-hyprvsr-uslet-101.pdf

Enjoy!

VMware Hands On Lab — HOL-SDC-1305

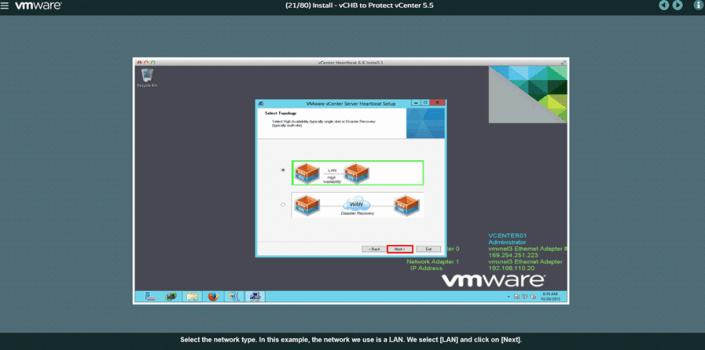

I decided to take the HOL-SDC-1305 lab but only module four tonight. Why only module four? Well, it’s the one all about vCenter Server Heartbeat.

If you have not taken a VMware lab, I would highly recommend it, as you’ll gain the proper topic knowledge and get to do some great hands-on lab work at the same time.

HOL-SDC-1305 (Module 4) didn’t disappoint me. It was straight to the point, went through the key areas, and was a great overview of the product.

I wanted to see more about requirements, design, and any constraints. However, these items were outside the scope of this lab, so I can’t really hold that against it.

I attached a quick screenshot of the lab for your enjoyment!

Note – Everyone has access to the VMware Hands on Labs, and it’s FREE to all.

Start here >> https://communities.vmware.com/community/vmtn/resources/how

HOL Labs Main Link >> http://labs.hol.vmware.com/

Enjoy!

VMware Product Walkthroughs

Looking for a step-by-step guide to some of the top VMware products? Don’t have the time to go through the free VMware Hands-on Labs offering (http://labs.hol.vmware.com/)? Is your company blocking access to VMware KB TV on YouTube (http://www.youtube.com/user/VMwareKB)?

Then the VMware Product Walkthroughs may be just for you. As of this writing there are 6 product walkthroughs.

These walkthroughs will give you an overview of how to use or install the product.

Main Page –

Start Page –

Walkthrough for vCenter Server Heartbeat!

4 Years of VMExplorer Blog

February 2014 marks 4 years I’ve been blogging. Thinking back 4 years, virtualization was really starting to ramp up and the IT landscape was changing for us all. I was leading the Phoenix VMUG at that time and we were seeing tremendous growth in attendance.

Flash forward to 2014 and once again our industry is changing for the better. We are going from manual, delayed processes to fully automated self-service. Companies that used to be fixed into single offerings are expanding daily. Seriously, look at Netflix: they are now producing their own series for their customers. We have seen the fall or decline of many brick-and-mortar companies in retail, entertainment, computers, electronics, books, and more. The ones that could keep their doors open are innovating to stay competitive. One area I find interesting is the local mom-n-pop video and book stores, which seem to be making a comeback as the retail giants in their areas close their doors. Yes, these places still exist and they have a loyal customer base, but for how long? That seems to be the question for them.

Personally, I enjoy change and what it brings. I think change is the zest of life. I enjoy working for a company that embraces change and is the market leader in many of these areas. The buzzwords you hear in our industry, such as Public-Hybrid-Private Cloud, End User Computing, Bring Your Own Device, and XaaS (Anything as a Service), are the conduits for IT change as the companies we work for continue to innovate. This type of innovation will provide services that we and the customers we serve want, need, and deserve. Keep in mind that at some point XaaS will be on your doorstep. Yes, I’m talking to you, Mr./Mrs. IT Admin working in the SMB market. It’s coming your way too, so be ready. Start thinking about enterprise technologies and strategies no matter how big or small you are, as these strategies will expand your personal knowledge and keep your company competitive.

One last topic: INSPIRE. Over the next year I plan to work with a few of my old VMUG leaders to form a group to inspire youth around technology and engineering. We are working out the details, but our beliefs are simple: help youth in our local community embrace technology at a deeper level. We feel this will help them stay on track in school and hopefully choose a career in technology and engineering. I can still remember the day when I made my choice. It was 1982 and I was playing my good old Atari 2600. I just had to know how it worked, and this desire to know naturally led me to my career today.

Next 4 years, yep, I’m looking forward to them and I’m moving forward with Embrace and Inspire as my guides!

Thanks for reading, enjoy, and please feel free to comment!

vSphere 5.5 Product Guide 02-2014 is Released!

Folks, this is a must-read document packed with all kinds of detailed information around VMware products and how they relate to licensing.

Fair warning – This document is very detailed, but once you start getting into it lots of gold nuggets can be found.

http://www.vmware.com/files/pdf/vmware-product-guide.pdf

Enjoy!

vExpert 2014 Submissions Now Open!

vExpert 2014 applications are open! I’ve been part of this program since its inception. It’s a great community of technical folks who enjoy spreading the word of virtualization!

Please feel free to submit and if you need some tips please post up!

Think you’re one of the best? Have you been supporting and contributing to the VMware Community? Have you excelled and completed the latest certification & accreditation training? If Yes then I would encourage you to apply for the 2014 vExpert Program! http://bit.ly/MtDKff

Each year, we bring together in the vExpert Program the people who have made some of the most important contributions to the VMware community. These are the bloggers, book authors, VMUG leaders, speakers, tool builders, community leaders and general enthusiasts. They work as IT admins and architects for VMware customers, they act as trusted advisors and implementers for VMware partners or as independent consultants, and some work for VMware itself. All of them have the passion and enthusiasm for technology and applying technology to solve problems. They have contributed to the success of us all by sharing their knowledge and expertise over their days, nights, and weekends.

vExperts who participate in the program have access to private betas, free licenses, early access briefings, exclusive events, free access to VMworld conference materials online, and other opportunities to interact with VMware product teams. They also get access to a private community and networking opportunities.

VPN (VMware Partner Network) Path

The VPN Path is for YOU, members of our partner companies, who lead with passion and by example, who are committed to continuous learning through accreditations and certifications and to making their technical knowledge and expertise available to many. This can take the shape of event participation, video, IP generation, as well as public speaking engagements. A VMware employee reference is required for VPN Path candidates.

Read more about the 2014 vExpert program at http://bit.ly/MtDKff

Caching your Windows 8 / 10 Domain Credentials via VPN

I was setting up a fresh Windows 8 Fusion 6 VM last weekend and realized I needed to cache my home domain credentials. I was remote to my home office and the only way I could access the domain was via VPN.

With only the ability to log on locally and then launch the VPN, I was prompted for my password and security keys multiple times a day – Not a fun experience. To fix this I really needed my domain user account credentials cached so that I could initially log on to Windows 8 without the VPN, and then launch the VPN connection after logon.

Here is how I solved this issue…

- Logged in with my local account

- Attached to the VPN

- Added my Windows 8 VM to the domain

- Added my domain account to a Local group

- Rebooted (just adding my Win 8 VM to the domain doesn’t cache my credentials)

- Logged in with my local account

- Attached to the VPN

- Closed all Internet Explorer (IE) windows, held down CTRL+Shift, right-clicked on IE, and finally chose ‘Run as Different User’ (PIC1)

- Typed in my domain user account/password and allowed IE to load << This should cache your credentials (PIC2)

- Closed all windows, restarted, and I was able to log on with my domain account

- Attached to the web-based VPN and voilà… all is working well

PIC 1 – Hold down CTRL+Shift, then right-click on IE, and finally choose ‘Run as Different User’

PIC 2 – Enter your Credentials

Summary – Using ‘Run as Different User’ while connected to the VPN ensures your account from the domain you’re attempting to log on to is cached locally. Your experience may vary depending on your rights as a domain user and the security policies enforced in your domain.
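For those who prefer a script over the CTRL+Shift right-click, here is a minimal Python sketch of the same idea using the built-in Windows `runas` command. This is an assumption on my part and not something I tested in the original setup: it relies on `runas` performing the same kind of interactive logon as ‘Run as Different User’ while the VPN is connected. The domain `HOMELAB`, the user `jdoe`, and the helper function names are all hypothetical placeholders.

```python
import platform
import subprocess
from typing import List


def build_runas_command(domain: str, user: str,
                        program: str = "cmd.exe /c exit") -> List[str]:
    """Build a runas invocation that starts `program` as the given domain user."""
    # runas expects the account in the form /user:DOMAIN\username
    return ["runas", f"/user:{domain}\\{user}", program]


def run_as_domain_user(domain: str, user: str) -> int:
    """Launch a throwaway program as the domain user (connect the VPN first).

    runas prompts for the password on the console. Whether this caches the
    credentials depends on your domain's security policies.
    """
    if platform.system() != "Windows":
        raise RuntimeError("runas is only available on Windows")
    return subprocess.run(build_runas_command(domain, user)).returncode


if __name__ == "__main__":
    # HOMELAB and jdoe are placeholders; substitute your own domain and account.
    print(" ".join(build_runas_command("HOMELAB", "jdoe")))
```

Running the file as-is only prints the command it would use. Call `run_as_domain_user` from a Windows session with the VPN attached to actually launch the process.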

** Update 12/18/2017**

Recently I tried this same process with Windows 10 and it worked like a charm!